Machine learning developers gained new abilities to develop and run their ML programs on the framework and hardware of their choice thanks to the OpenXLA Project, which today announced the availability of key open source components.

Data scientists and ML engineers often spend a lot of time optimizing their models for each hardware target. Whether they’re working in a framework like TensorFlow or PyTorch and targeting GPUs or TPUs, there has been no way to avoid this manual effort, which consumes precious time and makes it difficult to move applications at a later date.

This is the general problem targeted by the folks behind the OpenXLA Project, which was founded last fall and today includes Alibaba, Amazon Web Services, AMD, Apple, Arm, Cerebras Systems, Google, Graphcore, Hugging Face, Intel, Meta, and NVIDIA as its members.

By creating a unified machine learning compiler that works with a range of ML development frameworks and hardware platforms and runtimes, OpenXLA can accelerate the delivery of ML applications and provide greater code portability.

Today, the group announced the availability of three open source tools as part of the project. XLA is an ML compiler for CPUs, GPUs, and accelerators; StableHLO is an operation set for high-level operations (HLO) in ML that provides portability between frameworks and compilers; and IREE (Intermediate Representation Execution Environment) is an end-to-end MLIR (Multi-Level Intermediate Representation) compiler and runtime for mobile and edge deployments. All three are available for download from the OpenXLA GitHub site.
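To make the relationship between a framework, StableHLO, and XLA a little more concrete, here is a minimal sketch using JAX, one of the supported frameworks. The function `scaled_tanh` and its input are hypothetical and chosen only for illustration. On recent JAX releases the lowered text should be expressed in the StableHLO dialect; older releases may print a different dialect, so treat the exact output as version-dependent.

```python
import jax
import jax.numpy as jnp


def scaled_tanh(x):
    # Hypothetical example function: two elementwise ops that a
    # compiler such as XLA may choose to fuse into a single kernel.
    return jnp.tanh(x) * 2.0


x = jnp.arange(4, dtype=jnp.float32)

# jax.jit traces the Python function and hands the traced program
# to XLA, which compiles it for the available backend (CPU/GPU/TPU).
compiled = jax.jit(scaled_tanh)
result = compiled(x)

# .lower() stops before backend compilation; .as_text() returns the
# intermediate representation that JAX passes to the compiler.
ir_text = jax.jit(scaled_tanh).lower(x).as_text()
print(ir_text)
```

The printed module is the portable, hardware-independent form of the program; the same text could in principle be handed to any compiler that accepts that operation set.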

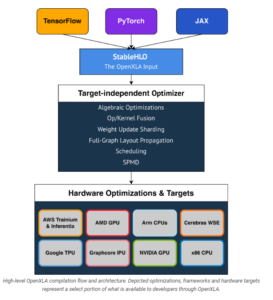

Initial frameworks supported by OpenXLA include TensorFlow, PyTorch, and JAX, a new Google framework. JAX is designed for transforming numerical functions, and is described as bringing together a modified version of autograd and TensorFlow’s XLA while following the structure and workflow of NumPy. Initial hardware targets and optimizations include Intel CPUs, Nvidia GPUs, Google TPUs, AMD GPUs, Arm CPUs, AWS Trainium and Inferentia, Graphcore’s IPU, and Cerebras Wafer-Scale Engine (WSE). OpenXLA’s “target-independent optimizer” targets algebraic functions, op/kernel fusion, weight update sharding, full-graph layout propagation, scheduling, and SPMD for parallelism.
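As a rough illustration of what "transforming numerical functions" with a NumPy-like workflow looks like in JAX, the sketch below differentiates a small least-squares loss and compiles the result. The `loss` function and the toy data are assumptions made up for this example, not taken from the OpenXLA announcement.

```python
import jax
import jax.numpy as jnp


def loss(w, x, y):
    # Mean squared error of a linear model, written in NumPy style.
    pred = jnp.dot(x, w)
    return jnp.mean((pred - y) ** 2)


# jax.grad transforms loss into a function returning d(loss)/dw;
# jax.jit then compiles that gradient function through XLA.
grad_loss = jax.jit(jax.grad(loss))

# Hypothetical toy data: two samples, two features.
x = jnp.array([[1.0, 2.0], [3.0, 4.0]])
y = jnp.array([1.0, 2.0])
w = jnp.zeros(2)

# With w = 0 the gradient is (2/n) * x.T @ (x @ w - y) = -(x.T @ y).
g = grad_loss(w, x, y)
print(g)
```

Composing `jax.grad` and `jax.jit` this way leaves the model code itself unchanged, which is the portability argument the project makes: the same function can be compiled for whichever backend is available.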

The OpenXLA compiler products can be used for a variety of ML use cases, from full-scale training of massive deep learning models, such as large language models (LLMs) and generative computer vision models like Stable Diffusion, to inference. Waymo already uses OpenXLA for real-time inferencing on its self-driving cars, according to a post today on the Google open source blog.

The OpenXLA compiler ecosystem provides portability between ML development tools and hardware targets (Image source: OpenXLA Project)

OpenXLA members touted some of their early successes with the new compiler. Alibaba, for instance, says it was able to train a GPT2 model on Nvidia GPUs 72% faster using OpenXLA, and saw an 88% speedup for a Swin Transformer training task on GPUs.

Hugging Face, meanwhile, said it saw about a 100% speedup when it paired XLA with its text generation model written in TensorFlow. “OpenXLA promises standardized building blocks upon which we can build much needed interoperability, and we can’t wait to follow and contribute!” said Morgan Funtowicz, head of machine learning optimization for the Brooklyn, New York, company.

Facebook was able to “achieve significant performance improvements on important projects,” including using XLA on PyTorch models running on Cloud TPUs, said Soumith Chintala, the lead maintainer for PyTorch.

The chip startups are pleased with XLA, which reduces the risk to customers of adopting relatively new, unproven hardware. “Our IPU compiler pipeline has used XLA since it was made public,” said David Norman, Graphcore’s director of software design. “Thanks to XLA’s platform independence and stability, it provides an ideal frontend for bringing up novel silicon.”

“OpenXLA helps extend our user reach and accelerated time to solution by providing the Cerebras Wafer-Scale Engine with a common interface to higher level ML frameworks,” says Andy Hock, a vice president and head of product at Cerebras. “We are tremendously excited to see the OpenXLA ecosystem available for even broader community engagement, contribution, and use on GitHub.”

AMD and Arm, which are battling bigger chipmakers for pieces of the ML training and serving pies, are also happy members of the OpenXLA Project.

“We value projects with open governance, flexible and broad applicability, cutting edge features and top-notch performance and are looking forward to the continued collaboration to expand open source ecosystem for ML developers,” Alan Lee, AMD’s corporate vice president of software development, said in the blog.

“The OpenXLA Project marks an important milestone on the path to simplifying ML software development,” said Peter Greenhalgh, vice president of technology and fellow at Arm. “We are fully supportive of the OpenXLA mission and look forward to leveraging the OpenXLA stability and standardization across the Arm Neoverse hardware and software roadmaps.”

Curiously absent are IBM, which continues to innovate on chips with its Power10 processor, and Microsoft, the world’s second largest cloud provider behind AWS.

