Nvidia Jetson Orin

Amid the flood of news coming out of Nvidia’s GPU Technology Conference (GTC) today were a pair of announcements aimed at accelerating the development of AI on the edge and enabling autonomous mobile robots, or AMRs.

First, let’s cover Nvidia’s supercomputer for edge AI, dubbed Jetson. The company today launched Jetson AGX Orin, its most powerful GPU-powered device designed for AI inferencing at the edge and for powering AI in embedded devices.

Armed with an Ampere-class Nvidia GPU, up to 12 Arm Cortex CPU cores, and up to 32 GB of RAM, Jetson AGX Orin is capable of delivering 275 trillion operations per second (TOPS) on INT8 workloads, which is more than an 8x boost compared to the previous top-end device, the Jetson AGX Xavier, Nvidia said.

Jetson AGX Orin is pin- and software-compatible with the Xavier model, so the 6,000 or so customers that have rolled out products with the AI processor in them, including John Deere, Medtronic, and Cisco, can basically just plug the new device into the solutions they have been developing over the past three or four years, said Deepu Talla, Nvidia’s vice president of embedded and edge computing.

The developer kit for Jetson AGX Orin will be available this week at a starting price of $1,999, enabling users to get started with developing solutions for the new offering. Delivery of production-level Jetson AGX Orin devices will start in the fourth quarter, and the units will start at $399.

Recent developments at Nvidia will accelerate the creation of AI applications, Talla said.

“Until a year or two ago, very few companies could build these AI products, because creating an AI model has actually been very difficult,” he said. “We’ve heard it takes months if not a year-plus in some cases, and then it’s…a continuous iterative process. You’re not done ever with the AI model.”

However, Nvidia has been able to reduce that time considerably by doing three things, Talla said.

The first one is including pre-trained models for both computer vision and conversational AI. The second is the ability to generate synthetic data on its new Omniverse platform. Lastly, transfer learning gives Nvidia customers the ability to take those pre-trained models and customize them to a customer’s exact specifications by training with “both physical real data and synthetic data,” he said.
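The three ingredients Talla lists can be illustrated with a toy, pure-Python sketch. It is not Nvidia's tooling: the "pre-trained" backbone is a hypothetical fixed function, the synthetic data comes from a made-up generator rather than Omniverse, and the task (labeling a 2D point 1 when its coordinates sum to more than zero) is invented for illustration. The point is only the shape of the workflow: keep the backbone frozen and train a small new head on real and synthetic samples together.

```python
import math
import random

# Hypothetical stand-in for a pre-trained backbone: fixed weights, never updated.
FROZEN_BACKBONE = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

def extract_features(point):
    """Run the frozen backbone: tanh of a fixed linear map of the input."""
    return [math.tanh(sum(w * x for w, x in zip(row, point))) for row in FROZEN_BACKBONE]

def make_synthetic(count, seed=0):
    """Generate labeled synthetic samples (label 1 when x0 + x1 > 0)."""
    rng = random.Random(seed)
    samples = []
    for _ in range(count):
        point = (rng.uniform(-1, 1), rng.uniform(-1, 1))
        samples.append((point, 1 if point[0] + point[1] > 0 else 0))
    return samples

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def fine_tune_head(samples, steps=200, lr=0.5):
    """Train only a new logistic-regression head on top of the frozen features."""
    feats = [(extract_features(p), y) for p, y in samples]
    weights, bias = [0.0] * len(FROZEN_BACKBONE), 0.0
    for _ in range(steps):
        grad_w, grad_b = [0.0] * len(weights), 0.0
        for f, y in feats:
            err = sigmoid(sum(w * v for w, v in zip(weights, f)) + bias) - y
            grad_w = [g + err * v for g, v in zip(grad_w, f)]
            grad_b += err
        # Full-batch gradient step; the backbone weights are left untouched.
        weights = [w - lr * g / len(feats) for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / len(feats)
    return weights, bias

def predict(head, point):
    """Classify a point with the frozen backbone plus the trained head."""
    weights, bias = head
    score = sum(w * v for w, v in zip(weights, extract_features(point))) + bias
    return 1 if score > 0 else 0

# A handful of "real" samples (made up here) combined with synthetic ones.
real_data = [((0.9, 0.4), 1), ((-0.7, -0.2), 0), ((0.3, 0.5), 1), ((-0.4, -0.8), 0)]
head = fine_tune_head(real_data + make_synthetic(200))
print(predict(head, (2.0, 2.0)), predict(head, (-2.0, -2.0)))
```

Because only the small head is trained, the customization step needs far less data and compute than building a model from scratch, which is the time saving the paragraph above describes.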

“We are seeing [a] tremendous amount of adoption because [we] just make it so easy to create AI bots,” Talla said.

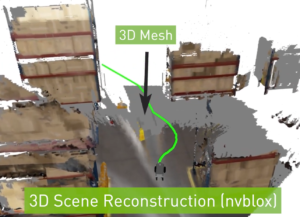

Nvidia is developing simulation tools to help developers create AMRs that can navigate complex real-world environments (Image courtesy Nvidia)

Nvidia also announced the release of Isaac Nova Orin, a reference platform for developing AMRs trained with the company’s AI tech.

The platform combines two of the new Jetson AGX Orin modules discussed above, giving it 550 TOPS of compute capacity, along with additional hardware, software, and simulation capabilities to enable developers to create AMRs that work in specific locations. Isaac Nova Orin also will be outfitted with a slew of sensors, including regular cameras, radar, lidar, and ultrasonic sensors to detect physical objects in the real world.
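The throughput figures quoted in this article can be cross-checked with simple arithmetic. The sketch below is illustrative only: the function names are hypothetical, the combined figure is a peak sum rather than a measured result, and the predecessor number is just the ceiling implied by the "more than 8x" claim, not a figure Nvidia provided here.

```python
ORIN_TOPS = 275   # INT8 TOPS per Jetson AGX Orin, as quoted by Nvidia
MIN_SPEEDUP = 8   # "more than an 8x boost" over Jetson AGX Xavier

def combined_tops(per_module_tops, module_count):
    """Peak aggregate throughput when several modules are combined."""
    return per_module_tops * module_count

def implied_predecessor_ceiling(new_tops, min_speedup):
    """Upper bound on the older device's TOPS implied by a minimum speedup."""
    return new_tops / min_speedup

print(combined_tops(ORIN_TOPS, 2))                          # 550
print(implied_predecessor_ceiling(ORIN_TOPS, MIN_SPEEDUP))  # 34.375
```

Two modules at 275 TOPS each give the 550 TOPS cited for Isaac Nova Orin, and a boost of more than 8x implies the Xavier's INT8 figure sits below roughly 34 TOPS.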

Nvidia will also ship new software and simulation capabilities to accelerate AMR deployments. A key element there is another offering called Isaac Sim on Omniverse, which will enable developers to leverage virtual 3D building blocks that simulate complex warehouse environments. The developer will then train and validate a virtual version of the AMR to navigate that environment.

The opportunity for AMRs is substantial across multiple industries, including warehousing, logistics, manufacturing, healthcare, retail, and hospitality. Nvidia says research from ABI Research forecasts the market for AMRs to grow from under $8 billion in 2021 to more than $46 billion by 2030.
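For a sense of scale, the forecast can be converted into an implied compound annual growth rate. This is a back-of-the-envelope sketch, not a figure from ABI Research: it treats the endpoints as exactly $8 billion and $46 billion, and since the forecast says "under" and "more than," the true implied rate would be somewhat higher. The function name is hypothetical.

```python
def compound_annual_growth_rate(start_value, end_value, years):
    """Constant yearly growth rate that turns start_value into end_value."""
    return (end_value / start_value) ** (1 / years) - 1

# Endpoints taken from the forecast cited above, in billions of dollars.
rate = compound_annual_growth_rate(8, 46, 2030 - 2021)
print(f"Implied growth rate: {rate:.1%} per year")
```

Under those assumptions the market would need to grow by a little over 21% per year for nine consecutive years.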

“The old method of designing the AMR compute and sensor stack from the ground up is too costly in time and effort,” says Nvidia Senior Product Marketing Manager Gerard Andrews in an Nvidia blog post today. “Tapping into an existing platform allows manufacturers to focus on building the right software stack for the right robot application.”

Related Items:

Models Trained to Keep the Trains Running

Nvidia’s Enterprise AI Software Now GA

Nvidia Inference Engine Keeps BERT Latency Within a Millisecond
