Four Ways to Visualize Big Streaming Data Now

(lassedesignen/Shutterstock)

Every day, the data deluge continues to grow. From clickstreams and transaction logs to mobile apps and the IoT, big data threatens to overwhelm customers relying on traditional BI tools to analyze it. That’s creating fertile ground for a new class of visualization tools that promise to help analysts take action on emerging data streams.

Streaming analytics isn’t new. Stream processing engines like Apache Spark, Apache Storm, Apache Samza, Apache Kafka, and Amazon Kinesis were built to provide the distributed platform upon which streaming applications can be built. But these products lack intuitive user interfaces. That’s where products from vendors like Zoomdata, Alooma, Datawatch, and Splunk are aiming to make a difference.

Visual analysis of streaming data isn’t the only thing that Zoomdata does. For example, the Zoomdata Server (which is largely based on Spark) can pull data from Hadoop, NoSQL stores, cloud apps, and traditional data warehouses, and make it available in the visual data discovery and query interface. Its use of “push-down” processing helps ensure better use of distributed computing resources.

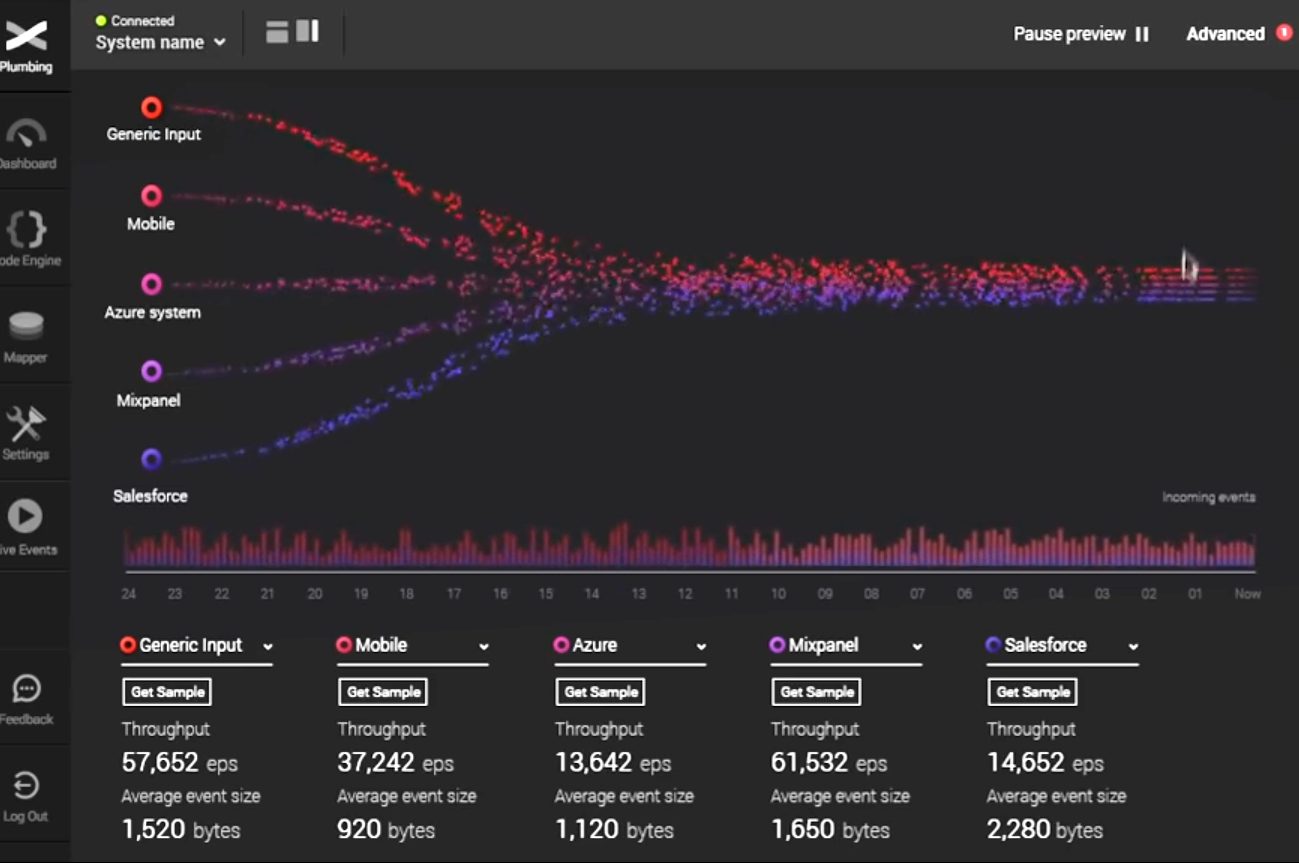

Zoomdata uses a “data sharpening” technique to help process billions of rows of data in real time

But the Reston, Virginia company can also hook into Spark Streaming and bubble up visualizations of “micro-batch” data as they flow through Spark. This, combined with the “data sharpening” feature of the software and the “Data DVR” capability to pause, rewind, and fast-forward the state of data flows, provides a very compelling experience for users who need to pull insights from the freshest data possible.
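To make the micro-batch and pause/rewind ideas concrete, here is a minimal Python sketch. It is not Zoomdata's or Spark Streaming's implementation; the names `micro_batches`, `summarize`, and `ReplayBuffer`, and the `ts` and `value` event fields, are all hypothetical. It assumes events arrive in timestamp order, groups them into fixed-width time buckets, and keeps a bounded history of per-batch summaries that a viewer could step through.

```python
from collections import deque
from typing import Any, Deque, Dict, Iterable, Iterator, List, Optional

Event = Dict[str, Any]


def micro_batches(events: Iterable[Event], batch_seconds: float) -> Iterator[List[Event]]:
    """Group time-ordered events into consecutive fixed-width time buckets."""
    batch: List[Event] = []
    bucket: Optional[int] = None
    for event in events:
        current = int(event["ts"] // batch_seconds)
        if bucket is not None and current != bucket:
            yield batch  # the previous bucket is complete
            batch = []
        bucket = current
        batch.append(event)
    if batch:
        yield batch


def summarize(batch: List[Event]) -> Dict[str, float]:
    """Reduce one micro-batch to the small summary a chart would draw."""
    return {"count": len(batch), "total": sum(e["value"] for e in batch)}


class ReplayBuffer:
    """Bounded history of batch summaries with a movable playback position."""

    def __init__(self, capacity: int) -> None:
        self.frames: Deque[Dict[str, float]] = deque(maxlen=capacity)
        self.offset = 0      # 0 means the newest frame; larger means further back
        self.paused = False

    def push(self, frame: Dict[str, float]) -> None:
        self.frames.append(frame)
        if self.paused:
            # Keep pointing at the same frame while new ones arrive.
            self.offset = min(self.offset + 1, len(self.frames) - 1)

    def rewind(self, steps: int = 1) -> None:
        self.paused = True
        self.offset = max(0, min(self.offset + steps, len(self.frames) - 1))

    def fast_forward(self, steps: int = 1) -> None:
        self.offset = max(self.offset - steps, 0)

    def go_live(self) -> None:
        self.paused = False
        self.offset = 0

    def current(self) -> Optional[Dict[str, float]]:
        if not self.frames:
            return None
        return self.frames[-1 - self.offset]
```

In this sketch the playback position is stored as an offset from the newest frame, so returning to the live view is just a reset to zero, and a paused viewer keeps seeing the same frame while new batches continue to arrive.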

This sort of feature is likely what attracted investments by In-Q-Tel (the venture capital arm of the CIA) and attention from Gartner in the latest Magic Quadrant for Business Intelligence and Analytics Platforms, where Zoomdata debuted this month in the “Visionaries” quadrant.

“Zoomdata is well-suited to business users and data scientists that need real-time insights from streaming data across a range of big data sources, or for developers that need to embed these insights in applications,” wrote Gartner analysts, who lauded the company’s native streaming capability as its number one strength.

Alooma is another company making waves in the big data lake. The Israeli company has done the hard work of cobbling together open source software like Apache Kafka, Apache Storm, Redis, and Zookeeper into a shrink-wrapped product that gives users the capability to visualize and query streaming data.

Alooma Live lets users combine and visualize data in a Web-based GUI

“Real-time data is becoming more and more important,” Alooma CTO Yair Weinberger told Datanami recently. “When we see companies building something for real-time or the cloud, they’re not using the data integration tools of the past.”

While modern tools like Kafka, Storm, and Spark provide building blocks for experienced data engineers to work with, finding people with those skills is difficult, not to mention expensive. “Building something in house based on open source tools is going to be hard,” Weinberger says. “That’s very hard to do.”

Recently the company announced Alooma Live, a new product that enables users to view data flows in real time. The cloud-based software allows users to visualize individual data streams separately, which is useful when data flows differ for different sources.

“Alooma Live makes it possible for data scientists and engineers to gain unprecedented visibility into their streams, so they can correct problems and be confident the intelligence being derived from their data is accurate, reliable and actionable,” Yoni Broyde, Co-founder and CEO of Alooma, says in a press release.

Datawatch’s Panopticon software provides a real-time window atop a streaming OLAP database

Another real-time visualization vendor to keep an eye on is Massachusetts-based Datawatch. The company, which began life extracting data from mainframe spool files so it could be consumed by analysts, is today providing new ways to visualize streaming data via its Panopticon product.

Originally developed in Sweden (ancestral home of Qlik, TIBCO’s Spotfire, and other visualization leaders), Panopticon uses a streaming OLAP approach to enable users to visualize large streams of data. The software, which Datawatch bought 3.5 years ago, was originally aimed at companies in the telecom, utility, and financial services industries, but today it’s primarily aimed at financial services outfits.

Panopticon gives traders and brokers a way to extract insight from data to take immediate action, says Peter Simpson, vice president of visualization strategy for Datawatch. “With a visual representation of the massive volumes of data coming from trading and execution systems, exchanges and other sources in real time, analysts can process and identify anomalies, trends, clusters and relationships that were previously missed with traditional number views,” he says.

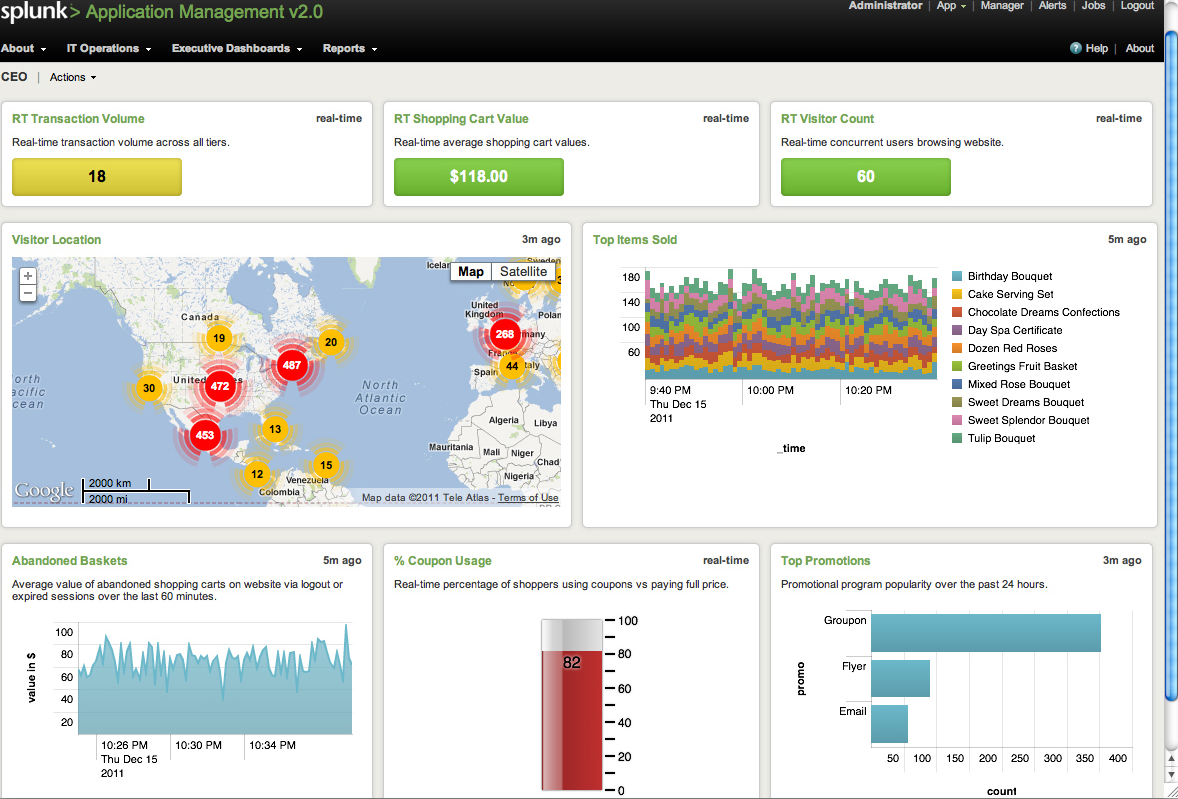

Splunk may be best known for analyzing log files, which is decidedly a batch affair. But the San Francisco company is also moving to build real-time analytic capabilities into its product as it looks to broaden its use cases in the new machine data age.

Splunk is getting into the real-time visualization tool market

The company has recently started to offer the capability with its “real-time searches and reports” functionality, which allows users to analyze log data while it sits in memory, just after it arrives in the system and before it’s written to disk on the NoSQL database that underlies the product.

“When you kick off a real-time search,” the company writes, “Splunk software scans for incoming events that contain index-time fields that indicate they could be a match for your search. As the real-time search runs, the software periodically evaluates the scanned events against your search criteria to find actual matches within the sliding time range window that you have defined for the search.”
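The two-step pattern in that description, a cheap check on arrival followed by periodic evaluation over a sliding time window, can be sketched in a few lines of Python. This is an illustration of the general technique only, not Splunk's code or API; the class `SlidingWindowSearch`, its method names, and the `ts`, `sourcetype`, and `status` fields are hypothetical, and events are assumed to arrive in timestamp order.

```python
from collections import deque
from typing import Any, Callable, Deque, Dict, List

Event = Dict[str, Any]


class SlidingWindowSearch:
    """Retain possible matches on arrival, then evaluate them per time window."""

    def __init__(
        self,
        window_seconds: float,
        prefilter: Callable[[Event], bool],
        predicate: Callable[[Event], bool],
    ) -> None:
        self.window_seconds = window_seconds
        self.prefilter = prefilter    # cheap check applied to every incoming event
        self.predicate = predicate    # full search criteria, applied periodically
        self.candidates: Deque[Event] = deque()

    def ingest(self, event: Event) -> None:
        """Keep only events that could match; everything else is discarded."""
        if self.prefilter(event):
            self.candidates.append(event)

    def evaluate(self, now: float) -> List[Event]:
        """Expire candidates older than the window, then return actual matches."""
        cutoff = now - self.window_seconds
        while self.candidates and self.candidates[0]["ts"] < cutoff:
            self.candidates.popleft()
        return [e for e in self.candidates if self.predicate(e)]


# Hypothetical usage: server errors from web logs over the last 60 seconds.
search = SlidingWindowSearch(
    window_seconds=60,
    prefilter=lambda e: e["sourcetype"] == "web",
    predicate=lambda e: e["status"] >= 500,
)
for event in [
    {"ts": 0, "sourcetype": "web", "status": 500},
    {"ts": 30, "sourcetype": "db", "status": 500},
    {"ts": 50, "sourcetype": "web", "status": 200},
    {"ts": 90, "sourcetype": "web", "status": 503},
]:
    search.ingest(event)
matches = search.evaluate(now=100)
```

Splitting the work this way keeps the per-event cost low, since the full criteria run only on the retained candidates each time the window is evaluated.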

These aren’t the only ways to tap into the real-time data stream. If you like to build things, there’s an array of building blocks available that you can cobble together into a workable product. If you like to use products instead of building them, though, the packaged software approach may be a better bet.

Analytics tool makers are moving to address the demand for real-time tools, which Gartner says is still in its infancy. You can expect this market to grow in the years to come.
