A Wave of Purpose-Built AI Hardware Is Building

Google last week unveiled the third version of its Tensor Processing Unit (TPU), which is designed to accelerate deep learning workloads developed in its TensorFlow environment. But that’s just the start of a groundswell of new processors and processing architectures, including one from Wave Computing, which claims its soon-to-be-launched processor will dramatically lower the barrier to entry for running artificial intelligence workloads.

Compared to traditional machine learning algorithms, deep learning models offer superior accuracy and the potential to achieve human-like precision across a range of tasks. That’s true for both major branches in the deep learning family tree: convolutional neural networks (CNNs), which are mostly geared toward computer vision problems, and recurrent neural networks (RNNs), which are geared toward language-oriented problems.

While deep learning offers better results, those results come at a cost: two key ingredients must be present to get the benefits, namely large amounts of data and large amounts of computing power. Without those two things, the costs likely outweigh the benefits. So it should come as no surprise that the Web giants (i.e., “the hyperscalers”), which store most of the words and pictures we generate on the Web in their mammoth data centers, have led the way in the development and use of deep learning.

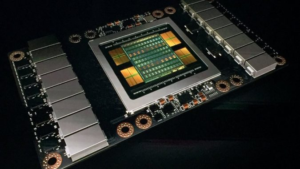

At first the hyperscalers loaded up on Nvidia GPUs, which offered the processing oomph required to train big and complex deep neural networks on huge amounts of data. This stocking up on GPUs by hyperscalers and high performance computing installations has been a great boon to Nvidia, whose stock has risen by nearly 1,200% over the last three years.

However, not everybody is content with the performance offered by Nvidia GPUs. Intel moved strongly to bolster its deep learning chops by acquiring several firms, including Nervana Systems, for a reported $400 million in 2016, and FPGA maker Altera, for $16.7 billion in 2015. Intel has promised a 100x improvement in deep learning training, and released the Knights Mill generation of Xeon Phi processors in December as part of that strategy. Not to be outdone, AMD is also pursuing the deep learning market with its Radeon Instinct line of GPUs.

Google unveiled its first TPU back in 2015, but the ASIC wasn’t much more than an accelerator for a traditional X86 computer. Last week at the company’s Google I/O conference, it launched TPUv3, which it claims is a vast improvement on previous processors. The hyperscaler is close-lipped about the design internals of its processors, which can only be accessed through Google Cloud Platform. What we do know about the processor, per HPCWire’s John Russell, is that it’s so hot that it needs water cooling and that a “pod” of TPUv3s offers in excess of 100 petaflops of performance (of unknown precision), which is apparently an 8x improvement over TPUv2.
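The two figures quoted above imply a rough baseline for the previous generation. The sketch below simply divides one by the other; the function name `implied_baseline` is illustrative, and because the pod figure is "in excess of" 100 petaflops at an unknown precision, the result should be read as a loose lower bound and not as a published specification.

```python
# Figures quoted in the article; everything else is derived from them.
TPU_V3_POD_PETAFLOPS = 100.0  # "in excess of 100 petaflops" (precision unknown)
SPEEDUP_OVER_V2 = 8.0         # "apparently an 8x improvement over TPUv2"


def implied_baseline(new_perf: float, speedup: float) -> float:
    """Return the older system's performance implied by a quoted speedup."""
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return new_perf / speedup


# Hypothetical helper applied to the quoted numbers only.
tpu_v2_pod_petaflops = implied_baseline(TPU_V3_POD_PETAFLOPS, SPEEDUP_OVER_V2)
print(f"Implied TPUv2 pod: at least about {tpu_v2_pod_petaflops:.1f} petaflops")
```

On these numbers, a TPUv2 pod would come in at roughly 12.5 petaflops or more, assuming both figures refer to the same precision.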

We’ll undoubtedly hear more about the exhilarating performance of TPUv3 in the weeks to come. But Google isn’t the only outfit heads-down on development of novel hardware platforms designed to tackle today’s emerging deep learning workloads. There are several startups in Silicon Valley that are aiming to claim their piece of the AI pie.

Many of these startups, like Cerebras Systems, are still in stealth mode. The Los Altos company was founded by former AMD and SeaMicro executives and has attracted $112 million in funding so far. It’s unclear if Cerebras is developing an ASIC, a GPU, or an FPGA, all of which have the potential to accelerate deep learning workloads beyond traditional X86 CPU architectures.

A little more is known about Groq, the Palo Alto-based firm that hired most of the team from Google that developed the first TPU. Last year Groq stated that its processor would offer 400 trillion operations per second, which was twice that of Google’s TPUv2. Groq, which has raised $10 million, has not yet announced when it will ship its first product.

Another startup, named Graphcore, is further along. The UK-based firm has created what it calls an Intelligent Processing Unit (IPU) card that plugs into the PCIe bus of a traditional X86 server. The company, which has raised more than $110 million, claims that it can speed up machine learning and deep learning workloads by 10x to 100x. However, it too has not yet shipped any product.

Wave Computing, which is based in Campbell, is angling to beat all of these companies to market with its custom ASIC designed to run deep learning workloads (including training and inference) at massive scale. Lee Flanagin, senior vice president and chief business officer for the eight-year-old firm, says the company will ship its first product later this year.

“We’ll probably be the first company to introduce product into the market compared to our peer group,” Flanagin tells Datanami.

Wave Computing completed the design on its AI chip, which is based on the Dataflow architecture and which it calls a Dataflow Processing Unit (DPU), and it’s now putting the finishing touches on a workstation-sized computer that will be the DPU’s first route to market.

The company has released some details about the workstation and the chip. Each DPU has 16,000 processing elements and runs at a speed of 6 GHz. Other information about the product has not yet been released.

The workstation is currently part of Wave’s early access program. A formal benchmark that will be published when the workstation is launched later this year will show that it is “surprisingly faster” than Nvidia’s Volta-class GPUs, Flanagin says. “It’s certainly way faster than CPU-only server-based training, and it’s heck of a lot faster than GPUs,” he says.

While GPUs have delivered the goods for the first part of the current wave of AI, they will be insufficient to keep the AI performance bar going up, Flanagin says. He refers to a graphic in a Wave Computing paper that was created by Google that depicts the pattern of instructions sent between GPUs and CPUs.

“There are large dead spaces in the GPU section because it’s waiting to be told what to do,” he says. “It goes back to the master-slave relationships. It’s just the standard computer architecture called Von Neumann architecture, which has been around 50 years. It’s not necessarily a new problem. It’s just that it manifests itself in certain areas in the AI, ML, DL world.”
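The “dead spaces” Flanagin describes can be illustrated with a toy model. This is a sketch under loud assumptions, not a measurement of any real system: it assumes each training step consists of accelerator compute followed by a strictly serialized wait for the host CPU, and the millisecond values are hypothetical numbers chosen only for illustration.

```python
def accelerator_utilization(compute_ms: float, wait_ms: float) -> float:
    """Fraction of a step the accelerator spends on useful work.

    Assumes compute and host wait never overlap (a deliberately
    pessimistic simplification of a host-driven design).
    """
    if compute_ms < 0 or wait_ms < 0:
        raise ValueError("durations must be non-negative")
    total = compute_ms + wait_ms
    if total == 0:
        raise ValueError("step must have non-zero duration")
    return compute_ms / total


# Hypothetical per-step timings: 10 ms of compute, varying host wait.
for wait_ms in (0.0, 2.0, 10.0):
    util = accelerator_utilization(compute_ms=10.0, wait_ms=wait_ms)
    print(f"wait {wait_ms:4.1f} ms -> utilization {util:.0%}")
```

In this simplified model, any time the accelerator spends waiting for instructions comes straight out of its utilization, which is the gap that dataflow-style designs claim to close.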

Nvidia, Intel, and AMD are trying to address the issue, but they can only take it so far with traditional architectures, Flanagin says. “They’re incrementally getting better but they’re not cutting the mustard, and it’s causing investment in us and it’s causing companies like Google to go off and do their own custom ASIC,” he says. “This is why companies like Wave have been funded to the tune of $115 million.”
