New AI Chips to Give GPUs a Run for Deep Learning Money

CPUs and GPUs, move over. Thanks to recent revelations surrounding Google’s new Tensor Processing Unit (TPU), the computing world appears to be on the cusp of a new generation of chips designed specifically for deep learning workloads.

Google has been using its TPUs for the inference stage of a deep neural network since 2015. It credits the TPU for helping to bolster the effectiveness of various artificial intelligence workloads, including language translation and image recognition programs. It also says TPU helped power its widely reported victory in the game of Go.

While TPUs aren’t new to Google data centers, the company started talking about them publicly only recently. Earlier this month, the Alphabet subsidiary opened up about the TPU, which it called “our first machine learning chip,” in a blog post. The company also released a technical paper, titled “In-Datacenter Performance Analysis of a Tensor Processing Unit,” that details the design and performance characteristics of the TPU.

According to the paper, Google’s TPU was 15 to 30 times faster at inference than NVidia’s K80 GPU and Intel Haswell CPU in a Google benchmark test. On a performance per watt scale, the TPUs are 30 to 80 times more efficient than the CPU and GPU (with the caveat that these are older designs). You can read more details on the TPU comparisons over at HPCwire.

While Google has been mum on possible commercial ventures around the TPU, some recent developments indicate that Google itself may not be aiming to compete directly with traditional chip manufacturers. Last week CNBC reported that a group of the original Google engineers who designed the TPU recently left the Web giant to found their own company, called Groq.

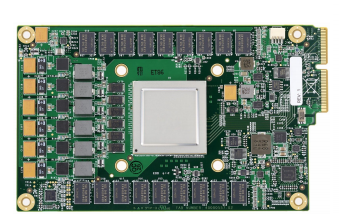

Google’s TPU chip (Source: Google)

According to an SEC document filed for Groq’s incorporation, the company has raised about $10 million. Leading the way is Chamath Palihapitiya, a prominent Silicon Valley venture capitalist. Other ex-Googlers named in the SEC document include Jonathan Ross, who helped invent the TPU, and Douglas Wightman, who worked on the Google X “moonshot factory.”

But that’s not all. “We have eight of the 10 original people that built that chip building the next generation chip now,” Palihapitiy said in a March interview with CNBC. Groq is playing its cards close to the vest, and isn’t disclosing exactly what it’s working on—although by all indications, it would appear to have something to do with machine learning chips.

There are many other groups chasing this new market opportunity, including traditional chip bigwigs Intel and IBM.

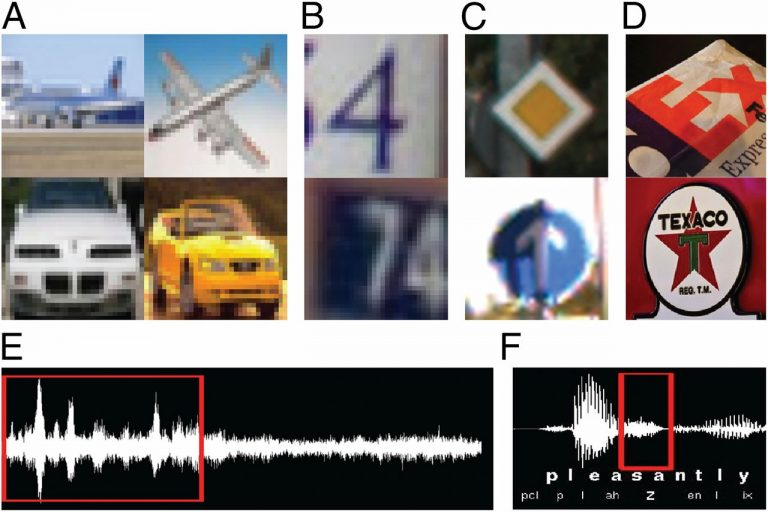

While Big Blue pushes a combination of its RISC Power chips and NVidia GPUs in its Minsky AI server, its research arm is exploring other chip architectures. Most recently, the company’s Almaden Lab has discussed the capabilities of its “brain-inspired” TrueNorth chip, which features 1 million neurons and 256 million synapses. IBM says TrueNorth has delivered “deep networks that approach state-of-the-art classification accuracy” on several vision and speech datasets.

“The goal of brain-inspired computing is to deliver a scalable neural network substrate while approaching fundamental limits of time, space, and energy,” IBM Fellow Dharmendra Modha, chief scientist of Brain-inspired Computing at IBM Research, said in a blog post.

Intel isn’t standing still, and is developing its own chip architectures for next-generation AI workloads. Last year the company announced that its first AI-specific hardware, code-named “Lake Crest,” which is based on technology Intel acquired with $400-million acquisition of Nervana Systems, would debut in the first half of 2017. That is to be followed later this year with Knights Mill, the next iteration of its Xeon Phi co-processor architecture.

IBM’s TrueNorth training set (image source: IBM Research)

For its part, NVidia will be looking to solidify its hold on the emerging machine learning market. While energy-hungry GPUs aren’t as efficient on the inference side of the equation, they’re tough to be beat for the compute-intensive training of neural networks, which is why Web giants like Google, Facebook, Microsoft and others are using so many of them for AI workloads.

However, NVidia isn’t giving up on the inference side of the market, and recently published a benchmark that showed how much better its latest Pascal GPU architectures, most notably the P40, is at inferring than its older Kepler GPU architecture (check out the HPCWire story here). The K80 also out-performed the Google TPU, although Google has probably advanced its TPU since 2015, which is when it calculated the benchmark figures it recently shared. NVidia’s recent hiring of Clément Farabet (formerly of Twitter) also could also portend a shift to more real-time workloads too.

Qualcomm could also be involved in the inference side of the equation. The mobile chipmaker has been working with Yann LeCun, Facebook’s Director of AI Research, to develop new chips for real-time inference, according to this Wired story. LeCun developed one of the first AI-specific chips for inference more than 25 years ago while working at Bell Labs.

The San Diego company recently announced plans to spend $47 billion to buy NXP, a Dutch company that makes chips for cars. NXP was working on deep learning and computer vision problems before the acquisition was announced, and it appears that Qualcomm will be looking to NXP to give it an edge in developing systems for autonomous driving.

Self-driving cars are one of the most prominent areas where deep learning and AI will have an impact. Beyond that, there are many other places where having an on-board AI chip to react to real-world conditions, including in mobile phones and virtual reality headsets. The technology is moving very quickly at the moment, and we’ll soon see other practical uses that will impact our lives.

Related Items:

Intel Details AI Hardware Strategy for Post-GPU Age

Meet Ray, the Real-Time Machine-Learning Replacement for Spark