Five Steps to Fix the Data Feedback Loop and Rescue Analysis from ‘Bad’ Data

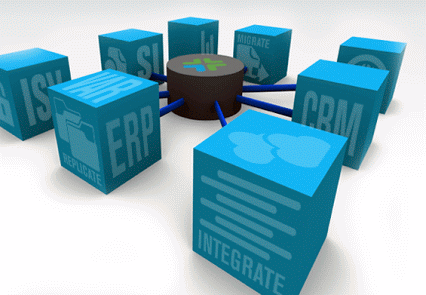

Despite enterprises’ best intentions in enforcing top-down standardization of data sets, non-compliant data can easily seep in and, through aggregations, transformations, and standardizations, spread throughout the organization. In a typical enterprise, inventory data from multiple regions and divisions across product lines could easily result in dozens of data sources being used for one analysis.

It’s easy to imagine any data set with origins this diverse would have problems. Does that new acquisition account for inflation the same way we do? Are those US dollars or Canadian dollars? Such ingrained technicalities might not get discovered until the data is used in reporting or analysis, often after several iterations of data cleansing and processing. Further, identifying data as critically wrong is only the first hurdle. How do you identify the source of this unreliable data and determine the appropriate steps toward correcting it? When crucial business decisions and your team’s reputation depend on data accuracy and completeness, it is imperative to pinpoint and fix problems as they arise.

It’s a hard reality that institutional knowledge of data is scattered across any organization. Quite often, those with the right experience to recognize a data quality issue do not have the knowledge required to fix it. Knowledge distribution happens naturally as companies grow. It isn’t feasible or even desirable to reverse specialization in the organization, but you can invest in shortening the feedback loop — the effort and time required to get from spotting an issue to fixing it.

The Plumbers Have No Tools

It starts with a sinking feeling. A marketing analyst wants to gauge how well a recent promotion did, but the May sales numbers don’t reflect the bump from an incentive program she saw in a company-wide videoconference. The reports are at an aggregate level, so she can’t look through all the details to find the discrepancy herself. Were the sales data sources improperly integrated? Are there sources missing entirely? Or is this a problem of data quality? She needs to go to the database managers, explain the problem, and ask them to look into it.

The person who understands the problem in context – the marketing analyst – and the people who know about the data’s origins – the sales teams – are not managing the process. The gatekeeping database manager is doing the investigation without helpful insight from either side. This data quality management scenario happens constantly in corporations, creating delays, false reporting and many other obstacles to sound decision-making. Worse, data disconnects create distrust between teams.

The data, in the eyes of the person who created it, likely isn’t even bad data. It simply carries a different label, or uses a non-standard conversion, or sits behind an additional field that no one else uses. Building feedback loops into the data pipeline creates opportunities to fix the issues where they’re found using trustworthy institutional knowledge from experts. This bottom-up approach solves data discrepancies faster and with shared appreciation across divisions, empowering analysts, data scientists, and business developers while maintaining data governance all along the way.

Adding Bottom-Up to Top-Down Data Unification

It is possible to implement this bottom-up feedback loop system alongside top-down database management. Doing so requires adoption of policies and systems that put power in the hands of individuals to solve problems themselves.

These policies also help organizations embrace data diversity, though they don’t require it. Long the bane of database managers and CIOs, data diversity has never been eliminated. Disorder is data’s natural state, and while common practices are helpful in ensuring consistent data collection, top-down policies have never created reliably consistent data. When data is easy to fix, it may become easier to leave data as it is and glean intelligence on demand, rather than to enforce order and hope for perfect compliance.

The following strategies are being used today to put people who identify issues in control of fixing them. Working together, these strategies can create a system that enables data users to unify disparate and unique data sources on demand.

Integrate Expert Sourcing

The first step is the most obvious, but it takes serious commitment to pursue by brute force. Finding and publishing a current, tested list of experts on various topics takes more resources than most organizations can dedicate to the initiative, regardless of its acknowledged importance. There are tools available that employ machine learning to find and rank experts, improving the quality of answers while reducing the number of people queried as time goes on. Answering a few simple “yes or no” questions can correct and refine the data and identify those who hold domain expertise in the various data sets throughout the enterprise. Without this human element to speed intelligence-based answers to pressing questions, non-experts are left searching for needles in haystacks.
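As a rough illustration of the ranking idea, the sketch below tallies how often each person's yes/no answers about a data set were later confirmed and orders people by a smoothed confirmation rate. It is a plain counting heuristic standing in for the machine learning such tools use, not a description of any particular product. The class name `ExpertRanker`, its methods, and the sample names are all hypothetical.

```python
from collections import defaultdict


class ExpertRanker:
    """Hypothetical sketch: rank people by how reliable their answers have been."""

    def __init__(self):
        # (dataset, expert) -> [confirmed_answers, total_answers]
        self._stats = defaultdict(lambda: [0, 0])

    def record_answer(self, dataset, expert, confirmed):
        """Store one yes/no answer and whether it was later confirmed as correct."""
        stats = self._stats[(dataset, expert)]
        if confirmed:
            stats[0] += 1
        stats[1] += 1

    def rank(self, dataset):
        """Return the experts seen for a data set, most reliable first."""
        scores = {}
        for (ds, expert), (ok, total) in self._stats.items():
            if ds == dataset:
                # Add-one smoothing: a single lucky answer should not
                # outrank a long, mostly correct track record.
                scores[expert] = (ok + 1) / (total + 2)
        return sorted(scores, key=scores.get, reverse=True)


ranker = ExpertRanker()
for was_confirmed in [True] * 9 + [False]:
    ranker.record_answer("inventory", "ana", was_confirmed)
ranker.record_answer("inventory", "ben", True)
print(ranker.rank("inventory"))  # ['ana', 'ben']
```

Routing the next question to the first few names in the ranking is one way the number of people queried could shrink over time.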

Leverage All Relevant Data

Teams and departments within organizations live on data islands, and analysis can reflect those islands in two ways. First, within the department, parochial views have to suffice where global views of the entire enterprise’s data would yield far better results. An example of this is a review of vendors supplying the company. Finding the best vendors that serve a department would likely be far less helpful than finding the best that serve the enterprise. Second, enterprise-wide analysis is impeded by homegrown databases within departments that, while similar in scope, vary widely in shape. Connecting peers in related roles across all departments and data experts across the organization makes both of these scenarios less painful and more fruitful.

Preserve Provenance

Changing data to solve one problem could create others when analyses and documents fall out of sync with the current data. For example, a de-duplication effort across several databases could cut the customer count by 20 percent, so reports built on the earlier count no longer match. A record of the change history provides the shortest path to the most common cause of data disconnects. It identifies the current version of the truth and helps trace data that isn’t falling in line back to its origin and to the person who created or changed it.
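A change history of this kind can be sketched as an append-only log that is written every time a value is edited. The example below is a minimal in-memory version under simplifying assumptions: the table is a dictionary of dictionaries, and the names `Change`, `ProvenanceLog`, `apply`, and `history` are hypothetical rather than taken from any specific tool.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class Change:
    """One recorded edit: what changed, who changed it, and why."""
    record_id: str
    column: str
    old_value: object
    new_value: object
    changed_by: str
    reason: str
    changed_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )


class ProvenanceLog:
    """Hypothetical append-only log of edits made to a table."""

    def __init__(self):
        self._changes = []

    def apply(self, table, record_id, column, new_value, changed_by, reason):
        """Update the table in place and record the edit in the log."""
        old_value = table[record_id].get(column)
        table[record_id][column] = new_value
        change = Change(record_id, column, old_value, new_value,
                        changed_by, reason)
        self._changes.append(change)
        return change

    def history(self, record_id, column=None):
        """Return recorded edits for a record, oldest first."""
        return [c for c in self._changes
                if c.record_id == record_id
                and (column is None or c.column == column)]


customers = {"C-100": {"name": "Acme", "currency": "USD"}}
log = ProvenanceLog()
log.apply(customers, "C-100", "currency", "CAD",
          changed_by="regional_sales",
          reason="prices were entered in Canadian dollars")
for change in log.history("C-100"):
    print(change.column, change.old_value, "->", change.new_value,
          "by", change.changed_by)
```

With a log like this, an analyst who notices a mismatch can see the previous value and the person to ask, instead of routing the question through a database manager.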

Streamline ETL by Predicting Transformations

Hundreds or thousands of data sources can go into an analysis. The ETL process alone can take days, and it is another common step where useful data can get discarded. Does “part number” in one database indicate the same data as “model number” in another? Is “Q2 2017 forecast” the same as “17Q2 proj.”? Finding the data columns or sources that are difficult for ETL systems to process can uncover the underlying data disconnect. The fix could be as simple as forcing a transformation in certain cases, or changing the data model if that is what is needed.
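One simple way to surface the hard-to-map columns is to score every source column against the target schema and set aside the uncertain matches for a person to review. The sketch below uses plain string similarity from Python's standard `difflib` module as a stand-in for a trained model. The function names and both thresholds are assumptions chosen for illustration.

```python
import re
from difflib import SequenceMatcher


def normalize(name):
    """Lowercase and collapse punctuation so 'Part_Number' equals 'part number'."""
    return re.sub(r"[^a-z0-9]+", " ", name.lower()).strip()


def suggest_mappings(source_columns, target_columns,
                     auto_threshold=0.85, review_threshold=0.5):
    """Pair each source column with its most similar target column.

    Returns (source, best_target, score, status) tuples, where status is
    'auto' (map without asking), 'review' (ask an expert), or 'unmatched'.
    """
    suggestions = []
    for src in source_columns:
        best, best_score = None, 0.0
        for tgt in target_columns:
            score = SequenceMatcher(None, normalize(src),
                                    normalize(tgt)).ratio()
            if score > best_score:
                best, best_score = tgt, score
        if best_score >= auto_threshold:
            status = "auto"
        elif best_score >= review_threshold:
            status = "review"
        else:
            status = "unmatched"
        suggestions.append((src, best, round(best_score, 2), status))
    return suggestions


for row in suggest_mappings(["Part_Number", "model number"],
                            ["part number"]):
    print(row)
```

String similarity cannot tell whether “part number” and “model number” actually mean the same thing, and it is likely to score abbreviations such as “17Q2 proj.” poorly. What it can do is put those pairs in the review pile, where someone who knows the data can settle the question with a yes or no.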

Gain Feedback Throughout the Consumption Stage

Many people only work with data at the final stage: consumption. Whether the data is displayed in a Tableau dashboard, as the result of an SQL query, or even as a single number in an email, there are still opportunities to find quality problems. In fact, the farther downstream from your data sources you identify a quality issue, the more complex the communication and cooperation needed to isolate and resolve it become.

In each case, the focus is building a direct connection between the sources of disagreeable data and the points where disconnects are identified. Database managers can stop chasing down other people’s “bad” data and focus on managing the underlying systems. Addressing known issues — such as similar reports from different data sets, ETL challenges and data source aging — by empowering data users throughout the pipeline to find errors and experts themselves means organizations can match knowledge of the problem and knowledge of the solution in an airtight feedback loop.

About the author: Margaret Soderholm is a Field Engineer at Tamr, Inc. She attended Carnegie Mellon University in Pittsburgh, PA, where she earned a BS in Statistics. Before Tamr, Margaret worked as an analyst at Evernote, giving her a keen insight into the work that goes into data preparation and the pain points stemming from data quality. Tamr catalogs, connects and publishes the vast reserves of underutilized internal and external data using a combination of machine learning with human guidance so enterprises can use all their data for analytics. Margaret hails from San Diego and lives in San Francisco, CA.
