Google Cloud Overhauls AI with Vertex Launch

Google Cloud today unveiled Vertex AI, a fundamental redesign of its automated machine learning stack. In addition to integrating the individual components of the stack more closely together, Vertex AI also introduces new tools to help data teams monitor the models they put into production, as Google Cloud makes a push into MLOps.

The cloud providers haven’t done us any favors when it comes to managing the machine learning model deployment lifecycle, says Craig Wiley, the director of product management for Google Cloud AI.

“The clouds all came through with a story that said, hey use a notebook, train a model, put it behind this auto-scaling endpoint that we’ve got, and guess what? You’re done with machine learning!” Wiley tells Datanami. “We know that that’s not the case.”

Google Cloud is just as guilty as others in the industry of providing a series of disconnected capabilities in data science, Wiley says. The primary focus of the tools was getting the models built and trained, but it left users on their own for what came next. That just isn’t good enough anymore, Wiley says.

“We realized that we had to get to a point where we could really help with customers with…the end-to-end journey of, hey you’ve deployed it, and now you have to manage the deployments,” he says. “That ultimately is what we’re doing with Vertex.”

The grand plan with Vertex AI, according to Andrew Moore, vice president and general manager of Cloud AI and Industry Solutions at Google Cloud, is to “get data scientists and engineers out of the orchestration weeds, and create an industry-wide shift that would make everyone get serious about moving AI out of pilot purgatory and into full-scale production.”

To that end, Vertex AI brings a greater focus on machine learning operations, or MLOps. The company says that Vertex AI can reduce the number of lines of code that data scientists and ML engineers have to write by up to 80%.

The vast majority of Google Cloud’s existing AI and machine learning tools, including the AI Platform and AutoML, will become part of the Vertex AI offering, which is accessible through a single user interface (UI), Wiley says. The exceptions are industry-specific Google Cloud offerings, like the anti-money laundering and contact center AI offerings, he says. Analytics-focused offerings, like Google Cloud BigQuery, will not become part of Vertex AI, although there will be connections.

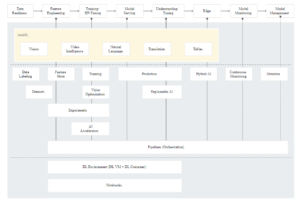

The new tools that Google Cloud will provide with Vertex AI include:

Vertex Feature Store, a repository to help data science practitioners organize, store, and serve machine learning features;

Vertex Model Monitoring, a self-service tool that lets users monitor the quality of machine learning models over time;

Vertex Matching Engine, which delivers a vector-based similarity search;

Vertex ML Metadata, which allows users to record the metadata and artifacts produced by the machine learning system;

Vertex TensorBoard, which lets users track, visualize, and compare machine learning experiments;

And Vertex Pipelines, a Kubeflow-based offering for automating machine learning pipelines.
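To make the idea of vector-based similarity search concrete, here is a minimal Python sketch. It is not the Vertex Matching Engine API; the function names, the `catalog` data, and the exhaustive cosine-similarity scan are all assumptions chosen for illustration. A production service would typically rely on approximate nearest-neighbor indexing rather than comparing the query against every stored vector.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine similarity of two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    # Treat a zero vector as dissimilar to everything instead of dividing by zero.
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k_similar(query, index, k=2):
    """Brute-force search: score every stored vector and keep the k best."""
    scored = [
        (item_id, cosine_similarity(query, vector))
        for item_id, vector in index.items()
    ]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Hypothetical embeddings; real ones would come from a trained model.
catalog = {
    "red_shoe": [0.9, 0.1, 0.0],
    "blue_shoe": [0.8, 0.2, 0.1],
    "green_hat": [0.0, 0.1, 0.9],
}

results = top_k_similar([1.0, 0.0, 0.0], catalog, k=2)
for item_id, score in results:
    print(f"{item_id}: {score:.3f}")
```

The exhaustive scan costs time proportional to the number of stored vectors, which is why large-scale systems trade a little accuracy for speed with approximate indexes.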

All told, Vertex AI includes more than 15 individual components, many of which existed before and have been rebranded under the Vertex name. Besides the new components mentioned above, the current Vertex AI lineup includes: AutoML, Deep Learning VM Images, Notebooks, Vertex Data Labeling, Vertex Deep Learning Containers, Vertex Vizier, Vertex Edge Manager, Vertex Explainable AI, Vertex Neural Architecture Search, Vertex Prediction, and Vertex Training. There is also a Vertex SDK for Python, as well as client libraries for Java and Node.js.

Vertex AI includes the same AI tools that Google developers use to create machine learning applications, specifically around computer vision, text analytics, and structured data. According to Wiley, this includes things like Google’s neural architecture search-as-service and its approximate nearest neighbors services.

Vertex also includes new governance and metadata monitoring capabilities to make sure that customers’ models not only comply with regulations, “but also you can be comfortable [that they] are doing the things you want them to be doing in production,” he says.

Google Cloud’s embrace of MLOps with Vertex is unique among the hyperscalers, Forrester analyst Kjell Carlsson says.

“It lowers the barrier for MLOps, the creation and deployment of AI applications, and, ultimately, AI-fueled digital transformation,” Carlsson says. “Before this, there were no good solutions out there that provided all the professional-data scientist-and-data engineer-grade capabilities in an integrated platform. Enterprises had to stitch together a stack of disjointed tools and technologies making it hard for nearly all enterprises to get the full value from their AI initiatives.”

Vertex AI is the culmination of two years of work, according to Wiley. The process began with a back-to-basics approach: by defining what nouns and verbs they would use. For example, what is a model? Once the group had a solid answer to that, it moved forward with defining higher-level abstractions.

“With Vertex, what we’re launching at this point is a foundation that a lot of these services can now be built on top of, as we move forward,” Wiley says. “I expect in the coming weeks and months for usability to improve, particularly cross-product usability, as well as pulling in some additional services from data analysis and potentially database themselves, as first class citizens.”

By re-architecting the various components with a common foundation, Vertex will improve the compatibility of existing and future Google Cloud AI products, Wiley says.

“Like others in the industry, we quickly built a whole series of capabilities, none of which worked with each other,” he says. “If you’re doing something in AutoML Vision and wanted to now use custom code to continue the process? It’s like, nope. These things were entirely incompatible with each other, for the most part.”

Vertex AI will allow data scientists to move more freely across Google Cloud offerings, which now share a common foundation with Vertex. But the program will also allow users to move up and down the stack, and replace things at their leisure. That’s all part of the Vertex plan, Wiley says.

“You can sit in our AutoML workflow now and say, ‘Hey, I don’t want to use your AutoML algorithm. I want to use my custom code,’” Wiley says. “And [you can] drop it in and continue on. Or you can drop down into a notebook. Or you can move over to BigQuery and orchestrate it all from BigQuery ML.”

As mentioned, BigQuery also has a role to play in the current (and future) Vertex offerings, according to Wiley. “Part of Vertex is we’re bringing BigQuery closer and closer. We continue to be amazed by the growth of BigQuery ML,” Wiley says. “We see people building both relatively unsophisticated and quite sophisticated models, and doing a lot of batch processing on these models directly in BigQuery.”

Google Cloud will be discussing Vertex AI during Google I/O, a developer event that’s taking place this week. You can find more information about Vertex AI at the Google Cloud Vertex AI page.
