Microsoft Beefs Up Bing With Intel FPGAs

Long-time technology partners Intel Corp. and Microsoft are again collaborating on an effort to advance deep learning capabilities in Microsoft’s search engine using the chip maker’s FPGAs.

The partners said this week that Microsoft’s Bing search engine is demonstrating real-time AI capabilities running on Intel’s Arria and Stratix FPGAs. The goal of the “intelligent search” effort launched by Microsoft last summer is to “provide answers instead of [links to] web pages,” Reynette Au, an Intel marketing vice president, noted in a blog post.

The FPGA-backed search engine is designed for understanding key words, their meaning and “the context and intent of a search,” Au added. Intel’s FPGAs enable real-time AI via tuned hardware acceleration that complements the chip maker’s Xeon line of CPUs. The combination is applied to computationally heavy deep neural networks to boost search engine results.

At the same time, the FPGA approach allows evolving AI models to be tuned for the performance required to deliver search results in real time, Intel (NASDAQ: INTC) said.

Microsoft (NASDAQ: MSFT) announced new AI-based search features in December with the goal of providing relevant results across multiple sources. Underpinning advanced search features are tasks such as machine reading comprehension, a computationally heavy capability. “You actually have to run these machine-reading comprehension models across lots of [web] pages, then aggregate them” to deliver a range of search results, said Rangan Majumder, a Bing program manager.
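The pattern Majumder describes, running a reader over many pages and then aggregating the results, can be illustrated with a minimal Python sketch. This is not Bing's implementation: the `read_page` function is a hypothetical toy that scores sentences by word overlap and merely stands in for a real machine reading comprehension model, and the names `Answer` and `answer_question` are invented for illustration.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import NamedTuple


class Answer(NamedTuple):
    text: str
    score: float
    source: str


def read_page(question: str, source: str, page: str) -> Answer:
    """Toy stand-in for a reading comprehension model (hypothetical).

    Returns the sentence that shares the most words with the question.
    """
    q_words = set(question.lower().split())
    best_text, best_score = "", 0.0
    for sentence in page.split("."):
        sentence = sentence.strip()
        if not sentence:
            continue
        overlap = len(q_words & set(sentence.lower().split()))
        score = overlap / len(q_words) if q_words else 0.0
        if score > best_score:
            best_text, best_score = sentence, score
    return Answer(best_text, best_score, source)


def answer_question(question: str, pages: dict[str, str], top_k: int = 2) -> list[Answer]:
    # Fan out: run the reader over every page in parallel.
    with ThreadPoolExecutor() as pool:
        candidates = list(
            pool.map(lambda item: read_page(question, *item), pages.items())
        )
    # Aggregate: rank candidates from all pages and keep the best ones.
    ranked = sorted(candidates, key=lambda a: a.score, reverse=True)
    return [a for a in ranked[:top_k] if a.score > 0]
```

In a production system the per-page step would be a deep neural network, which is the part of the workload the article says is accelerated on FPGAs; the fan-out and aggregation structure is what the sketch is meant to show.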

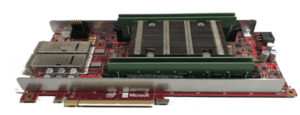

For that reason, Microsoft said, it built these search tasks on a deep learning acceleration platform called Project Brainwave, which runs deep neural networks on Arria and Stratix FPGAs.

According to a Microsoft blog post, “Intel’s FPGAs have enabled us to decrease the latency of our models by more than 10x while also increasing our model size by 10x.”

The Brainwave platform, unveiled in August 2017, uses a distributed system architecture that includes a hardware-based deep neural network engine integrated onto FPGAs, along with a compiler and runtime for accelerating deployment of trained deep learning models. Microsoft noted when it unveiled the real-time AI project that it has rolled out an FPGA infrastructure over the last several years, attaching the chips directly to its datacenter network, where deep neural networks are served as microservices.

Microsoft demonstrated Project Brainwave at an industry conference last summer running on Intel’s new 14-nm Stratix 10 FPGA. This week’s announcement expands the AI partnership.

Project Brainwave supports Microsoft’s Cognitive Toolkit and Google’s (NASDAQ: GOOGL) TensorFlow, and the company said it plans to support other machine learning frameworks.
