The Growing Menace of Data Hoarding

One of the downsides of living and working in a data-rich environment is the desire to squirrel away every last bit and byte for future use. Thanks to cheap storage systems such as Amazon S3 and Hadoop, it’s technically possible to store every piece of data you’ve collected. But going too far down that path can lead to a perilous condition known as data hoarding.

While data hoarding may not be as great a threat as physically hoarding real-world items, there is a similar psychology at play. A physical hoarder who stores every edition of the New York Times from the last 25 years may do so out of a misplaced idea that they’ll need to reference that paper at some point in the future. Similarly, a digital hoarder may hold onto every last keyword report from Google under the misplaced notion that it will boost marketing efforts.

It should come as no great surprise that episodes of data hoarding are on the upswing. After all, thanks to the big data boom, we have abundant and affordable storage, much of it residing in the cloud. For the same amount of money, you can store 50 times as much data in a Hadoop-based data lake as in a traditional data warehouse, according to EMC data evangelist Bill Schmarzo. That’s a big advantage.

The data hoarding problem is exacerbated by the fact that some big data solution providers are telling their clients to not throw data away. When you combine that with the mentality that competitive advantages can be easily mined from data exhaust, as well as the momentum that hoarding itself generates, you can see how data hoarding has the potential to become a serious problem.

From One Extreme to Another

Over the past 20 years, we’ve bounced between two extremes on the data storage front. Back in the old days (i.e. 1995), when storage costs were much higher, companies would warehouse only the data that was critical to their operations. Typically the data originated in operational data stores, and the data would be heavily transformed to closely conform to pre-set schemas. Insights could then be extracted and reports run from these tightly controlled data warehouses.

But big data lakes have flipped the script, so to speak, on data warehousing. Instead of storing only data that has a proven business value, companies are now storing any piece of data that has a remote chance of providing value in the future. Much of this is raw data, or “data exhaust,” that was previously discarded because it didn’t provide immediate business value.

We’ve gone from one extreme to another, says Yaniv Mor, the CEO and co-founder of a data integration startup called Xplenty, who has seen this type of data hoarding get worse over the years.

“Companies these days tend to simply store data just to be on the safe side, just in case someone wants to use that data in the future,” Mor says. “Storage is cheap these days, relatively, so they’ll just put everything on Amazon S3 or Google Cloud storage. But when the analysts come and need to extract some information out of it, it’s becoming a big challenge. This is something we see all the time.”

Apache Hadoop and cloud storage are enablers of data hoarding, according to Mor. While these platforms bring advantages when it comes to storage costs, they also expose the lack of a specialized skillset for extracting useful information from data.

“It’s a big challenge,” Mor says. “It’s not easy to comb through that data and get insights. You have to have data scientists and very specialized analysts who have the skillset to sift through that data.”

Growth of Data ROT

Big companies and other organizations, such as government agencies, are among those succumbing to data hoarding. According to Jody Houck, executive director of the U.S. DoD and U.S. Intelligence Business Sector for Veritas, federal agencies find it easier to just add more storage instead of facing their data hoarding problems head on.

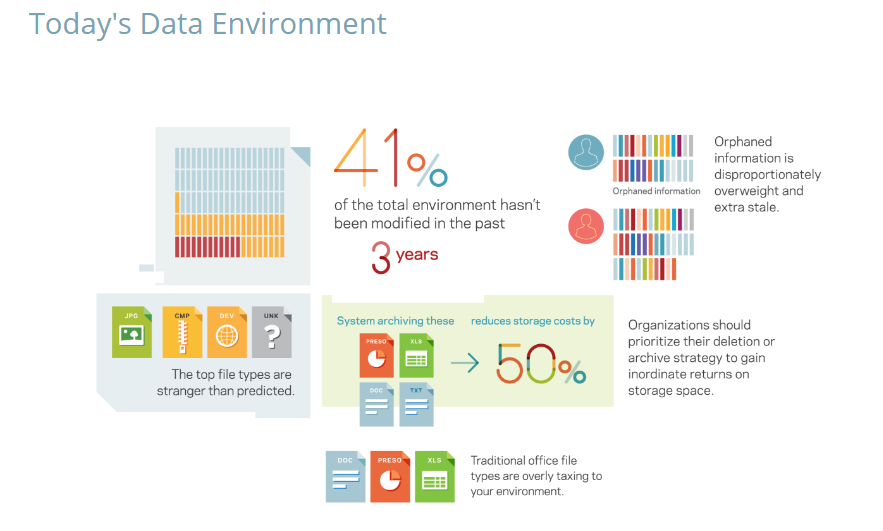

Source: Veritas inaugural Data Genomics Index 2016

“There’s a lot of myths,” Houck says in an April interview with Federal News Radio. “They believe storage is cheap, that all data has value, that all data has equal value, and that they’re going to move this data into the cloud. So that’s free storage, so why shouldn’t I keep it?”

In reality not all data is information, Houck says. In fact, 40 to 60 percent of the data an average organization stores these days is redundant, obsolete, or trivial (ROT), according to Veritas’ Data Genomics Index 2016.

What’s more, Veritas found that upwards of 40 percent of an organization’s data is stale (i.e. it hasn’t been touched in three years). Organizations are spending huge amounts of money to store millions of individual files that nobody is using anymore. “They’re spending $5 million per petabyte to store ROT,” Houck says.
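To put those figures in perspective, here is a rough back-of-the-envelope sketch in Python. It simply multiplies stored volume by the ROT share and by the per-petabyte cost Houck cites. The function name `rot_storage_cost` and the 10-petabyte example are illustrative assumptions, not figures from Veritas, and the source does not say what period the $5 million covers.

```python
def rot_storage_cost(total_petabytes: float,
                     rot_share: float,
                     cost_per_petabyte: float = 5_000_000.0) -> float:
    """Estimate spending attributable to ROT data.

    total_petabytes   -- total data stored (hypothetical input)
    rot_share         -- fraction of data that is ROT, between 0 and 1
    cost_per_petabyte -- defaults to the $5 million figure quoted by Houck
    """
    if not 0.0 <= rot_share <= 1.0:
        raise ValueError("rot_share must be between 0 and 1")
    if total_petabytes < 0 or cost_per_petabyte < 0:
        raise ValueError("volumes and costs must be non-negative")
    return total_petabytes * rot_share * cost_per_petabyte


if __name__ == "__main__":
    # Assumed example: an organization storing 10 PB, evaluated at the
    # low and high ends of the 40-to-60-percent ROT range.
    for share in (0.40, 0.60):
        cost = rot_storage_cost(10, share)
        print(f"ROT share {share:.0%}: about ${cost:,.0f}")
```

Under those assumptions, a 10-petabyte estate would be spending roughly $20 million to $30 million on data that is redundant, obsolete, or trivial.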

Eye on Marketing

While data hoarding is an equal opportunity offender, there is one segment of the business that Xplenty’s Mor says may be particularly prone to its siren call: marketing.

“Marketers just collect everything but they don’t necessarily know what to do with it,” Mor says. “Marketers need to understand that not all data is created equal. They don’t necessarily have to collect every bit and byte that the marketing services out there are providing them with. Marketers are a great example for creating data swamps.”

Keeping track of things (i.e. “governance”) also becomes a big issue for hoarders. Just as people who hoard physical items may have trouble finding a particular item in a house filled to the ceiling with stuff, data hoarders will find themselves struggling under the weight of their data. When tight schema control breaks down and an “anything goes” mentality takes over a data lake, it can quickly devolve into a murky data swamp.

There’s no clear definition of data hoarding, and the syndrome may exist in varying degrees in a wide variety of institutions. It should also be distinguished from legally mandated archiving. Banks, for instance, may be legally required to hold onto data for many years, while some healthcare organizations must keep medical data for decades.

Internal data was the source for most data warehouse initiatives 20 years ago, but today’s big data hoarders tend to gorge themselves on readily available external data. Social media data, in particular, is often stored in data lakes with the idea that it can be blended with other data to yield meaningful signals. But social media data is often quite “noisy” and of questionable business value.

Data Hoarding Solutions

The first step in solving the data hoarding problem is to admit that there’s a problem. After that, there are several strategies one can take.

Veritas’ Houck advocates a top-down data governance solution that begins with gaining visibility into data and its value. After a better model for classifying data has been created, it’s up to a data professional, or perhaps a chief data officer, to take ownership and implement better data governance policies.

“We believe there’s a much better way to go about supporting our missions and driving out costs if we implement an information governance strategy today and start around ROT and stale data, and then move forward with coming up with solutions to create dispensations projects so we can move data that has no value off our systems,” she says in the interview with Federal News Radio. “It’s a culture change. It’s a technology change. We can’t do it by manually looking at every piece of data but there is the capability to automatically crawl around, document what you have and then take action.”
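The “crawl, document, then take action” approach Houck describes can be sketched in a few lines of Python. This is a minimal illustration, not Veritas’ tooling: it walks a directory tree, records each file, and flags anything older than three years. It assumes that “touched” can be approximated by a file’s last-modified time, and the names `FileRecord`, `inventory`, and `stale_share` are hypothetical.

```python
import os
import time
from dataclasses import dataclass
from typing import List, Optional

# Three years, matching the staleness threshold quoted from Veritas.
STALE_AFTER_SECONDS = 3 * 365 * 24 * 60 * 60


@dataclass
class FileRecord:
    path: str
    size_bytes: int
    modified_at: float  # seconds since the epoch
    is_stale: bool


def inventory(root: str, now: Optional[float] = None) -> List[FileRecord]:
    """Crawl `root` and document every readable file found under it."""
    now = time.time() if now is None else now
    records = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            try:
                info = os.stat(path)
            except OSError:
                continue  # skip files that vanish or cannot be read
            # Assumption: last-modified time stands in for "last touched".
            is_stale = (now - info.st_mtime) > STALE_AFTER_SECONDS
            records.append(
                FileRecord(path, info.st_size, info.st_mtime, is_stale)
            )
    return records


def stale_share(records: List[FileRecord]) -> float:
    """Return the fraction of stored bytes held in stale files."""
    total = sum(r.size_bytes for r in records)
    if total == 0:
        return 0.0
    stale = sum(r.size_bytes for r in records if r.is_stale)
    return stale / total
```

Calling `stale_share(inventory("/some/share"))` on a file share (the path is a placeholder) gives a first estimate of how much of it is stale. Deciding what to archive or delete remains a governance decision, not something the script should make on its own.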

There is an urgent need to educate people about the data hoarding problem, Xplenty’s Mor argues. “You have to educate people about what they need to do with the data that’s available to them, especially in terms of the evaluating architecture of data, particularly on the cloud,” he says. “People don’t know how to build data architectures on the cloud.”

Ultimately, the data hoarding problem must be addressed from the bottom up, and that means getting individuals to change how they view data. “It’s not about how much data you collect at the end of the day; it’s a matter of what value you’re going to get from the data,” Mor says. “That’s the question every analyst, every data professional, should ask himself or herself every day.”
