Human Insight Is Key To Utilizing Data

(Image: Shutterstock)

For some, the big data revolution represents a clear progression toward a new (and inevitable) way of seeing the world. Like the driverless cars that pilot themselves down I-280 during morning rush hour in San Mateo County, big data seems to offer a tantalizing glimpse of a world free from nearly all human error. The organizational theorist Geoffrey Moore goes so far as to argue that “Without big data, you are blind and deaf and in the middle of a freeway.”

But consider the recent, real-life instance of John Gass, a middle-aged driver from Massachusetts with a nearly flawless driving record who received an automated notice informing him that his license was being revoked without further explanation, effective immediately.

As Luke Dormehl relates in The Formula: How Algorithms Solve Our Problems … and Create More (Perigee Books, 2014), the Massachusetts Registry of Motor Vehicles (MRV), in an effort to keep dangerous drivers off the road, was using facial-recognition algorithms to scan their vast database in search of criminals. Mr. Gass’s image was flagged because it happened to resemble the face of another Massachusetts driver… one whose record wasn’t so squeaky clean as Gass’s.

As luck would have it, the facial-recognition algorithm automatically triggered another algorithm that instantly delivered a letter to Mr. Gass informing him of the RMV’s decision to revoke his license. Now it was up to Mr. Gass to convince the good human beings at the RMV that their machine had in fact made a mistake.

Using big data to identify criminals quickly and at scale is just one among the galaxy of improvements big data can offer our society. But consider the all-too-human case of John Gass. Consider the individual left standing in the wake of an algorithm.

Where do we draw the line?

Scientific Data Usage

What gives big data the power to do social good and harm isn’t so much its scale. Rather, it’s the scale that we as humans allow big data to orchestrate our lives without our slightest consent. If we consider big data in terms of its effect at the individual level, we arrive at three fundamental categories: Scientific, Automatic and Mediated. While these three categories often blend together into hybrid combinations, it’s important we examine each as its own discrete entity.

(Maksim Kabakou/Shutterstock)

Essentially all data usage that informs human context, scientific knowledge, or knowledge of ourselves falls into the Scientific category. Scientific usage of big data has the potential to transform our way of seeing the world over the long term. It’s the stuff of scientific revolutions, but it also tends to have little discrete effect on our immediate lives.

The Large Hadron Collider (LHC) might well be the world’s most powerful particle accelerator, requiring an enormous amount of data and many hundreds of hours of data analysis. But as exciting as the knowledge of the Higgs boson particle’s existence is, the LHC’s importance to the average person is fairly minimal, especially when weighed against the daily to-do lists of our lives (unless of course one happens to be particle physicist in France or Switzerland).

Automatic Data Usage

On the other side of the data usage spectrum, there’s the Automatic category, the one Mr. Gass ran afoul of. As its name implies, human control over Automatic data usage is slim to none, while its impact on our everyday lives can be life-changing indeed. By entrusting our well-being to algorithms without direct human mediation, the resulting lack of agency can be liberating for some, bewildering for others and deeply troubling for others still. Case in point: if you type “when algorithms” into Google, top autofill suggestions include “go wrong,” “control the world” and “rule the world.” For “big data,” one autofill suggestion is simply “dangerous.”

But automated algorithms needn’t provoke images of feral snippets of JavaSript escaping their cages. The fact is that the vast majority of automated algorithms perform their functions ably and without a hint of controversy.

Take the clean-cut case of the Nest Learning Thermostat, a self-learning home thermostat that falls very much under the Automatic category of data usage. Allowing Nest to track when a person leaves for work in the morning or in what room a family eats dinner seem like reasonable tradeoffs for having a home that sets itself to the perfect temperature in the coldest part of winter. And judging by Nest’s popularity with consumers, it’s clear that Automatic algorithms are beneficial.

As we increase our reliance on Automatic data usage, we need to take great care to ensure that the scope of change in individuals’ realities is ethical and proportional to the benefit. We’re most comfortable with Automatic data usage that doesn’t affect our day-to-day lives much beyond increasing indoor temperature incrementally, or which requires our consent and participation.

An example of the latter: whenever we browse the Internet, the ads we see are tailored to our interests, based on our recent online histories. While an Automatic algorithm might be feeding us these ads, we’re still directing the algorithms with our every click and purchase – and consent is just a matter of allowing browser cookies.

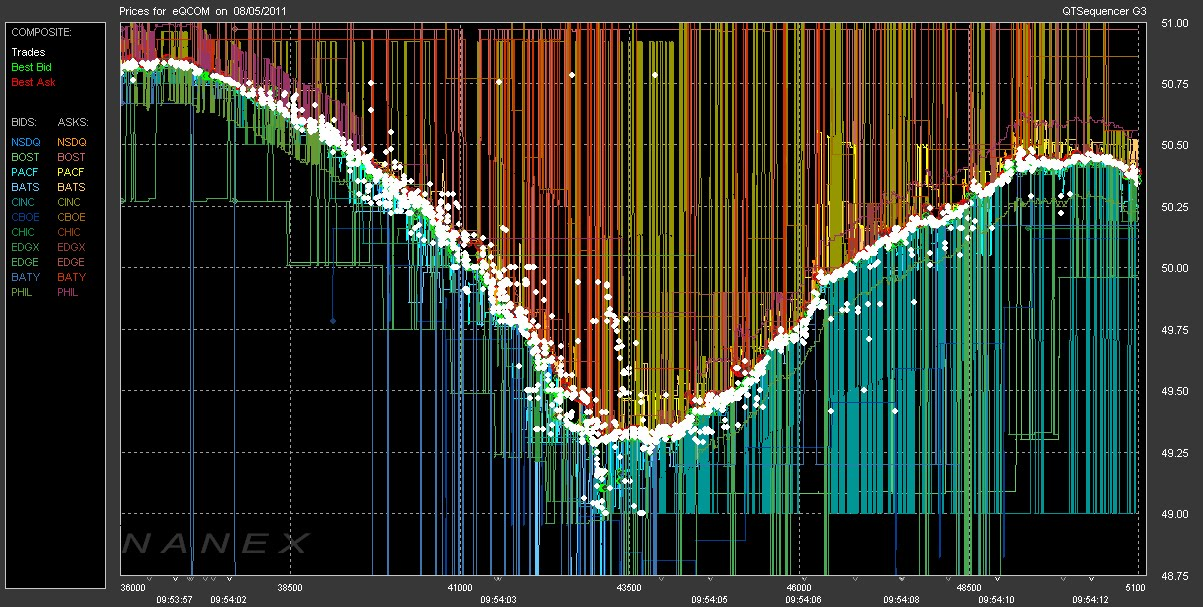

Thermostats are one thing; automatic, algorithm-driven pricing systems for global markets are quite another. But in recent times, that’s just what’s happened. Traders have developed sophisticated algorithms that crunch market data to determine pricing. By and large, the system has worked handsomely. New algorithms have played a part in helping global marketplaces grow more efficient – and soar to record heights.

But allowing pricing to fall solely into the purview of Automatic data usage is problematic. High Frequency Trading (HFT) in the stock market removes a considerable degree of human control over global banking and economic systems. The net result of HFT is that humans can’t explain how or why the algorithms act the way they do to produce a particular dip or spike in the market, causing many, including Federal Reserve governor Lael Brainard, to fight for closer scrutiny over the role of HFT in Treasuries.

Automatic data usage can be even more insidious than HFT. A recent article in the New York Times, When Algorithms Discriminate, points to a 2013 Harvard study which found that “ads for arrest records were significantly more likely to show up on searches for distinctively black names or a historically black fraternity.” Viewing an individual’s search results alongside ads for arrest records could have a wide range of repercussions, ranging from perpetuating unconscious bias to deterring potential employers from hiring based on skin color.

If even algorithms can develop biases, other worrying hypothetical possibilities arise: what if there were automatic loan approval/rejection algorithms, which were just as biased? What if there was an automatic no-fly list generated based on PRISM data?

The point here is twofold. Obviously, we should work to wipe every trace of bias from our algorithms. Furthermore, we need to be mindful about when and how we weave Automatic algorithms into the fabric of our reality.

Mediated Data Usage: Bridging the Divide between Algorithm and Individual?

Somewhere on the spectrum between the Scientific category (high degree of human involvement via human culture, low impact on everyday life) and Automatic (minimal human involvement, tangible impact on everyday life) is the Mediated category, which balances human control with harnessing the power of data.

In the Mediated category, data is collected, aggregated, and analyzed with the intent to feed back and influence human behavior and reality, but it always requires human mediation. In other words, it’s a potential big data sweet spot.

Like Automatic data usage, Mediated usage of big data affects us powerfully on an everyday level – but to a lesser degree. Why? The answer is simple: because we get to be the ones who activate and enable it.

(cherezoff/Shutterstock)

Fitbit algorithms monitor our health only so much as we choose to wear them. Online dating algorithms “match” us with new possible partners only so far as we actually sign up for them and type out a dating profile. And Amazon can provide us with new, insightful book recommendations based on our previous reading histories, provided we even choose to have an Amazon account. In all these cases, Mediated data usage offers us a safe entrance and a safe exit.

The key to each type of data usage is proportionality, and finding that proportional balance is no mean feat. But by considering big data-driven algorithms through the lens of these categories, practitioners can ensure that they’re addressing head-on the central ethical issue at the heart of the big data revolution: the freedom of the individual.

Automatic, data-driven algorithms stand to improve our society in ways we’re only beginning to fathom. They can predict dangerous illnesses by analyzing every data point in our DNA sequence. They can keep dangerous criminals off airplanes. And they can suggest the perfect birthday present for a loved one.

We should be grateful to big data for its power to accomplish so many different things. But it’s time we also see big data in all its fallibility.

To borrow Geoffrey Moore’s own phrase, big data has as much power to leave us “blind and deaf and in the middle of a freeway” as it has power to pilot our civilization safely. If we truly become the first generation in history to buy driverless cars en masse, we’ll need to make sure those cars come with override switches, and that we fasten our safety belts like we were taught as children. It’s really just a matter of human commonsense.

About the author: Catherine Williams is Chief Data Scientist at AppNexus, the world’s leading independent advertising technology company. She earned her PhD in mathematics at the University of Washington and held postdoctoral appointments at Stanford University and Columbia University.

Related Items:

Police Push the Limits of Big Data Technology

Big-Data Backlash: Medical Database Raises Privacy Concerns