U.S. Nanotech Initiative Emphasizes Predictive Analytics, Informatics

The National Nanotechnology Initiative (NNI) in the United States, launched in 2000, comprises 25 federal agencies that touch the nanotechnology space through research, development and funding. The group brings experts together to create a regulatory, budgetary and ideological framework for nanotechnology initiatives. The NNI has developed the core infrastructure at a number of educational and research institutions, and it designs, funds and oversees key nanotechnology projects.

This week the organization released its National Nanotechnology Initiative 2011 Environmental, Health and Safety (EHS) Research Strategy report, which includes, for the first time in its series of internal guides, a wealth of information about the new challenges that “big data” presents.

Given the comprehensive scope of the NNI’s funding and research, big data presents a new set of issues in terms of the analytics and computational resources required to crunch big nanodata. The NNI is working to address these issues head-on in the coming year, with an emphasis on predictive analytics, modeling and other computationally driven approaches to accelerating the pace of nanotechnology research.

As Erwin Gianchandani, director of the Computing Community Consortium, noted today, “For this first time, the research strategy includes a core area of research in predictive modeling and informatics—at the same time as nanomaterial measurement, human exposure assessment, human health, environment and risk assessment and management.” In other words, the NNI is finally recognizing that the analytics side of nanotechnology is on par with the other most prominent matters in the space.

The big data issues in nanotechnology are important enough that they take up a great deal of the NNI’s research strategy as stated in the EHS report. The group states that “expanding informatics capabilities will aid development, analysis, organization, archiving, sharing and use of data that is acquired in nanoEHS research projects.” It says that improving management, data quality and modeling/simulation capabilities is key to moving forward.

Additionally, the NNI sees challenges ahead in data acquisition and reproducibility, the use of advanced modeling and predictive analytics tools, and the ability to share data easily and securely. Many others across the broad spectrum of scientific and technical computing share these concerns, so what is it about nanotechnology that requires big input on big data?…

As Mark Bruns from NanoTools said, “it may not be immediately obvious, but nanotechnology is dependent upon effectively using big data, scalable computing resources, virtual instruments, and every advantage that information technology furnishes. Proficiency in the use of information technology [as it exists at this point in time, not five or ten years ago] is a prerequisite for effectively doing nanotechnology.”

For more details about the NNI and these big data-driven initiatives, read this week’s post from the U.S. Office of Science and Technology Policy, “Responsible Development of Nanotechnology: Maximizing Results While Minimizing Risk,” which comments on the NNI’s new focus.

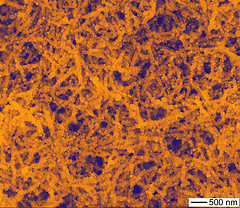

Image credit: Argonne National Laboratory