Intel Hitches Xeon to Hadoop Wagon

No longer content to sit on the sidelines of major Hadoop events, Intel today unveiled its strategy to tap into the momentum around the open source platform with its own purpose-built distribution.

Intel’s VP of the Architecture division, Boyd Davis, pointed to the gathering of forces around Hadoop and big data, noting that for the chipmaker, big data is far more than a buzzword to be bandied about.

The company believes it has found its way in by optimizing both the hardware and software stacks that support production Hadoop clusters, making them enterprise-ready and backing them with a company that has an entrenched reputation across IT.

Hadoop has evolved from an experimental platform used mostly by web companies into an increasingly viable option for large-scale traditional enterprise processing. Driven in part by enhancements and support from a ripe ecosystem of distro vendors and hangers-on, the race has been on to remake the platform into one that is robust, stable, and performance-conscious enough for high-end enterprise customers to consider. For those potential big-money users, reliability, security and governance are key concerns, and at this week's Strata event, an endless string of announcements from vendors across the Hadoop ecosystem focused on that very issue of enterprise-readiness.

For example, not to be outdone (by a hardware vendor, no less), Cloudera, one of the largest Hadoop distro vendors, boosted its enterprise appeal on the morning of Intel's announcement with some key fixes on the data governance and security sides. In our conversation, Cloudera's Charles Zedlewski said that the ability to audit and govern Hadoop in sensitive environments (life sciences, financial services, etc.) finally makes it more appealing for top-tier, mission-critical use.

But this all raises the question: what is Intel's role going to be in an already well-stocked distro vendor pond? It's all about harnessing the power of new hardware developments, says Davis. From instruction sets to SSDs to novel caching approaches, the chipmaker says it is uniquely positioned to steel Hadoop for the toughest of enterprise deployments via its Xeon line.

Intel hopes to hitch its Xeon processors to Hadoop by arguing that most distros aren't optimized to capture the power of the underlying hardware. For an open source package that's designed to run on cheap commodity hardware, this is a somewhat compelling argument, especially for those trying to optimize Hadoop clusters for their specific applications.

Naturally, Intel sees Xeon as the premium chip for the Hadoop job, but the company notes it has also made enhancements to optimize Xeon for Hadoop deployments. For instance, over a year ago Intel baked in instructions to accelerate encryption for high performance applications; now it has harnessed that same technology to speed up encryption in Hadoop by a reported 20x over the standard approach, according to its internal benchmarks. For users, this means they can secure data inside Hadoop without the significant performance overhead that traditionally comes with handling encryption at the framework level.

SSDs are another important area of investment. Intel has taken its pre-existing caching software and adapted it to fit the unique storage and caching layers that Hadoop clusters rely on.

Intel Labs is still pushing the boundaries to address some of the more specific issues around parallelism, compression and the mighty map algorithms that power the platform. While the company wasn't specific about what it would address in these areas, it noted that these three areas were the impetus for some other core enhancements to optimize Hadoop for Xeon, all of which should find their way into the open source community over time.

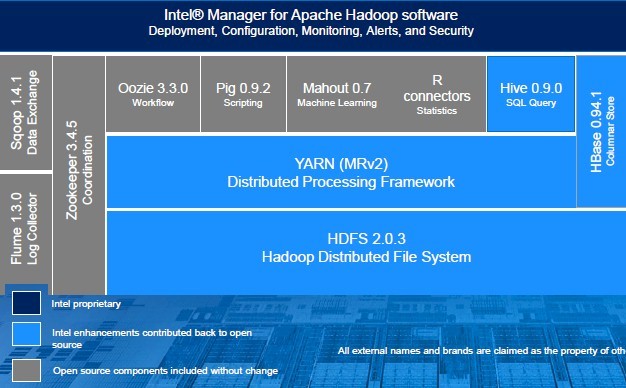

Beyond the silicon, Intel Labs and the company's phalanx of software developers have tackled a few key challenges of Hadoop on the performance side, including tuning MapReduce and YARN performance (Intel offered few specifics there), enhancing the security of HDFS (a big step toward enterprise-readiness, as all distro vendors seem to agree), optimizing queries in Hive and reducing some performance drags in HBase.

“We want to evolve the framework to take advantage of the latest technology,” explained Davis, noting that Intel sees Hadoop as more than a batch engine for relatively simple data, especially if it can tap into hardware advances. He added that Intel wants to make Hadoop more dynamic by making data stored on a Hadoop cluster available to a broad variety of applications. In other words, Intel sees Hadoop as a base layer upon which applications are built.

All of this is notable, in part because of the rich partner ecosystem that Intel has built over the course of a decade. On another front, some recent acquisitions open up juicy possibilities that could alter the course of Hadoop. For instance, Intel acquired Lustre backer Whamcloud some time ago, which makes Hadoop in high performance computing environments an interesting possibility.

Speculation about the distro ecosystem and Intel's future aside, the real question is why Intel decided this was important enough for a major investment. Actually, says Davis, it wasn't planned at first; rather, it developed out of customer requests in 2011. Davis claims the company's foray into Hadoop followed work with large-scale customers in China, including China Unicom and China Mobile. These users were making early production use of Hadoop, but some of the performance, security and optimization pieces weren't in place. Intel stepped up to the software challenge and, along the way, patched other parts of the Hadoop framework, yielding specific security and resiliency features that shipped with this debut mass release.

While Intel's work with the Chinese media and telco giants might have yielded some innovations toward optimizing Xeon for Hadoop workloads, at the time the company hadn't yet contributed these findings to the open source community. However, now that it has entered the distro game, where there is a persistent expectation that vendors commercializing an open source platform contribute back to the cause, the pressure will be on Intel not only to compete, but to do so against its own contributions.

According to Davis, Intel wants to be a steward of the open source community by contributing back many of its developments, particularly on the SSD, security and query optimization fronts. He describes Intel's role in Hadoop as the “rising tide that lifts all boats,” noting that the only big pieces that won't go back to Apache are the management interface and some of the enhancements underway within Intel Labs, including work with the Lustre file system via the company's recent acquisition of Whamcloud.

Intel's big data software makeover is noteworthy for a few reasons, the most compelling of which is that a hardware-oriented vendor is stepping into a distro market that's already rather noisy. Its pitch is that customers need software and services from an established, large company that can provide highly stable support. This is a clear jab at the dense startup market that has gathered around Hadoop distros and supplemental databases and services.

Intel is yet another hardware-oriented vendor hopping into what has traditionally been considered a software-only enterprise, and one designed to run on vanilla hardware at that. Companies like EMC, NetApp, DataDirect Networks, HP and others have also lined up behind Hadoop in recent weeks with extensions of their current offerings that have Hadoop optimization baked in. Like Intel, they are pulling their partners close, leaning on them to provide some of the missing analytics and software pieces that hardware companies might not have seen reason to invest in five years ago, before they realized they had to be something more than a mere hardware vendor.