Optimizing Visualization Workloads

Visualize this: You’re a car designer scanning a 3D simulation of your latest concept vehicle. You pan, zoom and otherwise manipulate the simulation, which uses streamlines around the car to illustrate airflow.

The simulation is massive, pixel-dense and highly compute-intensive, and yet you’re viewing and manipulating it on a low-end, three-year-old laptop. What’s more, several colleagues are on a conference call with you, manipulating the same pixels in the same session from multiple locations on typical office PCs and even mobile devices.

Pie in the sky, you say? Hardly. This scenario is already a reality in leading-edge visualization environments. An integrated solution composed of HPC workload management software and visualization software is doing for 3D simulation workloads what Software as a Service did for 2D applications: keeping applications and data together on the server side, where multiple PCs, thin clients and mobile devices can access them simultaneously in the same session. At the same time, compute resources can be used more efficiently than ever before.

Change Is Long Overdue

For IT managers, visualization workload optimization is arriving in the nick of time. Today it’s not unusual for 3D simulations to reach 50 gigabytes or more. Worse still, they’re getting bigger every year, and workload management problems are growing with them. Workstations are becoming obsolete faster than ever, usually in less than three years, and licensing, troubleshooting, patching and updating machines at widely dispersed offices and desks is an expensive, time-consuming hassle. Network congestion and latency are serious issues, too, as is leakage of proprietary data.

Upfront high-end workstation cost is also a key concern. Desk-side workstations usually feature high-end CPUs, top-of-the-line GPUs and lots of memory, yet they are single-purpose machines: once a simulation is up and running, the system has no capacity left for anything else.

Adding insult to injury, workstations must be sized for the largest potential simulation job. So, even if small or mid-sized technical visualization sessions are the norm, the workstation must be built to handle the worst-case scenario: that is, the most compute-intensive session imaginable. Given today’s widely dispersed and mobile workforce—and the need for elasticity when it comes to compute and network resources—the immovable and otherwise rigid desk-side workstation is an idea whose time has come, and gone.

Moving 3D Applications Closer to the Data

Instead of having expensive, hot, loud, underutilized workstations taking up space under desks, it makes sense to house 3D CAD/CAE applications and data in the data center and transfer pixels, rather than data, to typical PCs, laptops and mobile devices. Users get the full power of a high-end, GPU-enabled machine, as if it were under their desks, while GPUs and other high-performance compute resources are shared among multiple sessions.

In addition, compute capacity can be “right-sized” on the fly, and utilization can be maximized. Network congestion is minimized; supporting, updating and replacing hardware and software become more efficient, less costly processes that disrupt users far less than when the work is done at individual desks; and access control and data security are much tighter.

Users can walk away from their desks and securely start a session, or join an existing one, from conference rooms, from home after work, or from wherever they are authorized to do so, on just about any device. As a result, workforce collaboration and productivity can improve dramatically.

Key Components of the Visualization Workload Optimization Solution

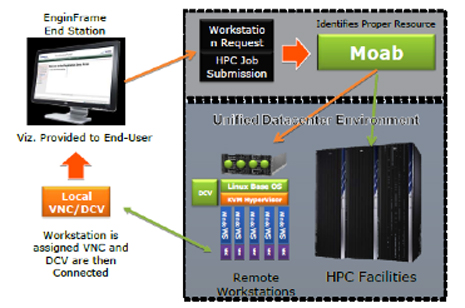

Visualization workload optimization can be accomplished using an integrated software solution developed through a partnership between Adaptive Computing and NICE Software. Adaptive provides the Moab HPC workload management software, which maximizes resource utilization in technical visualization environments as well as conventional HPC clusters by automating placement, scheduling, provisioning, SLA balancing and uptime for workloads according to policy-based priorities and efficiencies. When the data center receives a session request, the software assesses the size and application requirements of the request and the availability of data center resources (applications, CPUs, memory, GPUs and nodes), then allocates and schedules accordingly. A single click from the user requesting a session is all it takes for the software to determine the requirements and schedule the session on the best available resources.
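The matching step described above can be sketched as a best-fit placement. This is a minimal illustration under stated assumptions, not Moab’s scheduling algorithm or API: the `Node` and `SessionRequest` classes, their resource fields, the application name `cfd-viewer`, and the best-fit rule (prefer the feasible node that leaves the least capacity unused, so larger nodes stay free for larger sessions) are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional


# Hypothetical data model for illustration; these are not Moab classes.
@dataclass
class Node:
    name: str
    free_cpus: int
    free_mem_gb: int
    free_gpus: int
    apps: set = field(default_factory=set)  # applications installed on the node


@dataclass
class SessionRequest:
    app: str
    cpus: int
    mem_gb: int
    gpus: int


def fits(node: Node, req: SessionRequest) -> bool:
    """True if the node has the application and enough free resources."""
    return (
        req.app in node.apps
        and node.free_cpus >= req.cpus
        and node.free_mem_gb >= req.mem_gb
        and node.free_gpus >= req.gpus
    )


def place(nodes: list[Node], req: SessionRequest) -> Optional[Node]:
    """Best-fit placement: choose the feasible node with the least leftover capacity."""
    candidates = [n for n in nodes if fits(n, req)]
    if not candidates:
        return None  # a real scheduler would queue the request or create a reservation
    best = min(
        candidates,
        key=lambda n: (n.free_gpus - req.gpus, n.free_cpus - req.cpus, n.free_mem_gb - req.mem_gb),
    )
    # Allocate the requested resources on the chosen node.
    best.free_cpus -= req.cpus
    best.free_mem_gb -= req.mem_gb
    best.free_gpus -= req.gpus
    return best


nodes = [
    Node("viz-big", free_cpus=32, free_mem_gb=256, free_gpus=4, apps={"cfd-viewer"}),
    Node("viz-small", free_cpus=8, free_mem_gb=64, free_gpus=1, apps={"cfd-viewer"}),
]
chosen = place(nodes, SessionRequest("cfd-viewer", cpus=4, mem_gb=32, gpus=1))
print(chosen.name)  # viz-small
```

Ordering the key by leftover GPUs first reflects an assumption that GPUs are the scarcest resource in a visualization cluster; a production policy engine would also weigh priorities, SLAs and reservations.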

When visualization workloads are small enough to be virtualized, the HPC workload management software can dynamically provision both Linux and Windows resources. It can set up multiple Windows or Linux user sessions on a single machine to further maximize utilization, and it can dynamically re-provision the OS and applications on a compute machine to better match workload demand and maximize resource utilization and availability.
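The re-provisioning decision can be pictured as moving idle machines from an OS with spare capacity to an OS with queued demand. The sketch below is hypothetical throughout: the `Host` model, the fixed `SESSIONS_PER_HOST` capacity and the planning rule are assumptions for illustration, not how Moab actually decides. It moves only idle hosts, so no running session is interrupted, and it takes a host from an OS only if that OS can still cover its own queue without it.

```python
from dataclasses import dataclass

# Hypothetical capacity: each host runs one OS image and up to this many user sessions.
SESSIONS_PER_HOST = 4


@dataclass
class Host:
    name: str
    os: str        # e.g. "linux" or "windows"
    sessions: int  # active user sessions on this host


def plan_reprovision(hosts: list[Host], demand: dict[str, int]) -> list[tuple[str, str]]:
    """Return (host, new_os) moves that cover queued session demand per OS."""
    os_names = {h.os for h in hosts} | set(demand)
    # Free session slots minus queued sessions, per OS; negative means a shortfall.
    surplus = {
        o: sum(SESSIONS_PER_HOST - h.sessions for h in hosts if h.os == o) - demand.get(o, 0)
        for o in os_names
    }
    plan = []
    for target in sorted(os_names):
        for h in hosts:
            if surplus[target] >= 0:
                break
            # Only idle hosts whose current OS can spare a whole host are moved.
            if h.os != target and h.sessions == 0 and surplus[h.os] >= SESSIONS_PER_HOST:
                surplus[h.os] -= SESSIONS_PER_HOST
                surplus[target] += SESSIONS_PER_HOST
                plan.append((h.name, target))
                h.os = target  # stands in for re-imaging the host with the new OS
    return plan


hosts = [
    Host("node1", "linux", sessions=3),
    Host("node2", "linux", sessions=0),
    Host("node3", "linux", sessions=0),
    Host("node4", "windows", sessions=4),
]
print(plan_reprovision(hosts, {"linux": 2, "windows": 3}))  # [('node2', 'windows')]
```

Here the Linux pool has nine free slots against two queued sessions, while Windows has none against three, so one idle Linux host is re-provisioned for Windows.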

Visualization software from NICE provides Web portal-based user access to 3D visualization applications running on Linux or Windows servers. The software enables GPU sharing across multiple Linux and Windows desktops and can aggressively compress the data it streams to accommodate situations where bandwidth is in short supply, which is especially beneficial for users logging in over wireless or other bandwidth-constrained connections. It also lets users adjust the frame rate and image quality according to the quality of the connection and how interactively they are working.
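The frame-rate and quality trade-off can be sketched as picking the best setting whose bitrate fits the link. This is an illustrative assumption, not NICE’s actual adaptation logic: the quality presets, their per-frame sizes and the candidate frame rates are invented numbers. While the user is interacting, the sketch favors frame rate; when the view is still, it spends the bandwidth on image quality.

```python
# Hypothetical quality presets: assumed compressed size of one frame in kilobytes.
# Illustrative numbers only, not measurements of NICE's codec.
QUALITY_KB_PER_FRAME = {"low": 40, "medium": 90, "high": 200, "lossless": 600}


def choose_settings(bandwidth_mbps: float, interacting: bool) -> tuple[int, str]:
    """Pick a (frames per second, quality) pair whose bitrate fits the link."""
    budget_kb_per_s = bandwidth_mbps * 125  # 1 Mbit/s = 125 kB/s
    best_first = list(reversed(QUALITY_KB_PER_FRAME))  # lossless, high, medium, low
    if interacting:
        # Rotating or zooming: keep the frame rate high, then take the best quality that fits.
        for fps in (30, 20, 10, 5):
            for q in best_first:
                if QUALITY_KB_PER_FRAME[q] * fps <= budget_kb_per_s:
                    return fps, q
    else:
        # Static view: keep the quality high, then take the highest frame rate that fits.
        for q in best_first:
            for fps in (5, 2, 1):
                if QUALITY_KB_PER_FRAME[q] * fps <= budget_kb_per_s:
                    return fps, q
    return 1, "low"  # nothing fits: fall back to the most conservative setting


print(choose_settings(50, interacting=True))   # (30, 'high')
print(choose_settings(10, interacting=True))   # (30, 'low')
print(choose_settings(10, interacting=False))  # (2, 'lossless')
```

On a 10 Mbit/s link, the same user gets smooth low-quality frames while manipulating the model and sharp lossless frames once the view settles.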

In addition, the NICE visualization software works with Red Hat’s KVM-based hypervisor or other hypervisors on Linux servers, allowing multiple Windows virtual machines to run on the same node (see Figure 1). This makes full GPU sharing and acceleration available to Windows-based applications, as well as GPU sharing across multiple Windows OS instances. In this way, a Linux server containing three GPUs can typically support 10 to 12 Linux and Windows users.
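Three GPUs serving 10 to 12 users works out to roughly three to four sessions per GPU. The least-loaded assignment below is one simple way to spread sessions across a server’s GPUs; it is an illustrative assumption, not NICE’s actual GPU-sharing mechanism, and the per-GPU cap of 4 is a hypothetical value chosen to match the upper end of that range.

```python
from typing import Optional

# Assumption: cap each GPU at 4 concurrent sessions, chosen only so that
# 3 GPUs x 4 sessions = 12 matches the upper end of the quoted range.
MAX_SESSIONS_PER_GPU = 4


def assign_sessions(num_gpus: int, num_sessions: int) -> list[Optional[int]]:
    """Attach each new session to the least-loaded GPU; None means no GPU has room."""
    loads = [0] * num_gpus
    placement = []
    for _ in range(num_sessions):
        gpu = min(range(num_gpus), key=lambda i: loads[i])  # ties go to the lowest index
        if loads[gpu] >= MAX_SESSIONS_PER_GPU:
            placement.append(None)  # every GPU is at its cap
        else:
            loads[gpu] += 1
            placement.append(gpu)
    return placement


placement = assign_sessions(num_gpus=3, num_sessions=13)
print(placement)  # [0, 1, 2, 0, 1, 2, 0, 1, 2, 0, 1, 2, None]
```

The thirteenth session finds every GPU at its cap; in a cluster, a workload manager would route it to another node rather than reject it.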

TORQUE, part of the Moab HPC Suite, is also part of the visualization workload optimization solution. TORQUE is designed to improve overall utilization, scheduling and administration on clusters; here, its primary function is to start sessions and keep the HPC workload management software apprised of the health of each node and session.
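A common way for a per-node agent to keep a scheduler informed is a periodic heartbeat. The tracker below is a hypothetical sketch of that idea only; it does not reproduce TORQUE’s actual daemons, protocol or data structures, and the 60-second timeout, node names and session IDs are assumed values.

```python
import time
from dataclasses import dataclass, field
from typing import Iterable, Optional

# Hypothetical timeout: a node that has not reported for this long is treated as unhealthy.
HEARTBEAT_TIMEOUT_S = 60.0


@dataclass
class HealthTracker:
    last_seen: dict = field(default_factory=dict)  # node name -> time of last report
    sessions: dict = field(default_factory=dict)   # node name -> set of session ids

    def report(self, node: str, session_ids: Iterable[str], now: Optional[float] = None) -> None:
        """Record a heartbeat from a node's agent, including the sessions it runs."""
        self.last_seen[node] = time.monotonic() if now is None else now
        self.sessions[node] = set(session_ids)

    def healthy_nodes(self, now: Optional[float] = None) -> list[str]:
        """Nodes that reported within the timeout; only these should get new sessions."""
        now = time.monotonic() if now is None else now
        return sorted(n for n, t in self.last_seen.items() if now - t <= HEARTBEAT_TIMEOUT_S)

    def orphaned_sessions(self, now: Optional[float] = None) -> list[str]:
        """Sessions on nodes that missed their heartbeat, e.g. candidates for restart elsewhere."""
        healthy = set(self.healthy_nodes(now))
        return sorted(s for n, ids in self.sessions.items() if n not in healthy for s in ids)


tracker = HealthTracker()
tracker.report("viz01", ["alice-cfd"], now=0.0)
tracker.report("viz02", ["bob-cad", "carol-cad"], now=0.0)
tracker.report("viz01", ["alice-cfd"], now=90.0)
print(tracker.healthy_nodes(now=100.0))      # ['viz01']
print(tracker.orphaned_sessions(now=100.0))  # ['bob-cad', 'carol-cad']
```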

Figure 1. The HPC workload management software and visualization software, in conjunction with KVM or another hypervisor, enable Windows-based virtual machines to run on a Linux server, so that multiple Windows sessions can share the same node.

The Private Technical Compute Cloud

Many companies have made major HPC investments in the data center, yet those resources are generally used far below capacity. The same is true of the visualization workstations dispersed throughout many companies. The good news is that these resources can now be brought together in a private technical compute cloud that handles both HPC and visualization. In many cases, GPUs can be shared between computational and visualization workloads: dedicated to visualization during the day and to HPC computation overnight. HPC workload management policies can automatically determine which sessions and jobs go where, and they can also establish reservations for higher-priority workloads at specified times of the day or night.
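The day/night split and reservations described above amount to a time-based policy lookup. The sketch below is hypothetical: the working hours, the reservation windows and their names are invented for illustration, each window is assumed not to cross midnight, and none of it is Moab policy syntax.

```python
from datetime import datetime, time

# Hypothetical policy: shared GPUs serve interactive visualization during
# working hours and batch HPC jobs overnight.
DAY_START = time(7, 0)
DAY_END = time(19, 0)

# Hypothetical reservations for higher-priority work: (start, end, owner).
# Each window is assumed to start and end on the same day.
RESERVATIONS = [
    (time(9, 0), time(11, 0), "design-review"),   # interactive review session
    (time(1, 0), time(3, 0), "crash-sim-batch"),  # priority overnight HPC run
]


def gpu_role(at: datetime) -> str:
    """Return the workload class a shared GPU node should serve at time `at`."""
    t = at.time()
    # Reservations take precedence over the default day/night split.
    for start, end, owner in RESERVATIONS:
        if start <= t < end:
            return f"reserved:{owner}"
    return "visualization" if DAY_START <= t < DAY_END else "hpc"


for hour in (8, 10, 14, 22, 2):
    print(hour, gpu_role(datetime(2024, 1, 15, hour, 0)))
```

Checking reservations before the default split is what lets a higher-priority workload claim GPUs that would otherwise follow the day/night rule.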

A Centralized, Highly Efficient, One-Stop Computing Shop Is Now Possible

Linux and Windows don’t have to be isolated on disparate machines under users’ desks. Visualization and HPC capabilities can be fully integrated in the data center. Users accustomed to having their own high-powered workstations and applications at their fingertips can have a similar, and often superior, experience when compute power and applications move to a central location, and they gain mobility and elastic compute resources as well. Authorized users can gain access anytime, anywhere, and security can actually be enhanced. Equally important, centralizing and consolidating resources in the data center for both visualization and HPC optimizes resource utilization and ROI while minimizing IT support costs. Data can be consolidated onto common, centralized storage nodes to reduce storage costs, and a shared, common pool of applications across multiple users improves license utilization and reduces software costs.

Can you visualize anything better than that?

About the Author

Michael Jackson is president and co-founder of Adaptive Computing and helped drive the company’s first nine years of profitable growth. He drives Adaptive Computing’s strategic planning and cross-company coordination, with an added focus on business and partner development.

Prior to joining Adaptive Computing Enterprises, Michael was product manager of Internet and security products at Novell Inc.—the current developer of SUSE Linux. He also served as Novell’s channel business development manager. Prior to Novell, Michael was with Dorian International, the second largest import–export management company in the United States.