Cloud Looms As Answer to All Things Analytic

(antishock/Shutterstock)

Think this whole cloud thing is just a passing fad? That view may have been permissible 10 years ago. But as organizations try to put their IT goals in clearer focus for 2018, it’s becoming increasingly evident that the cloud has become a centralizing force, especially when it comes to storing and processing large amounts of data.

There are two main drivers propelling the cloud’s prowess in data analytics: the sheer simplicity of cloud-based storage and the cost flexibility of cloud-based computing. Instead of funneling all their data into a big data cluster, organizations today are more inclined to let one of the cloud-based object storage systems store it, such as Amazon Web Services’ Simple Storage Service (S3), Microsoft’s Azure Blob (binary large object) store, or Google Cloud Storage.

Rather than storing data in one place, organizations increasingly mix and match a variety of storage repositories to satisfy the particular whims and demands of specific projects. That means it’s not unusual to see complex workflows that span traditional file storage and object stores, living either in the cloud or on premise.

Keeping track of all that data is critical, particularly in this year of GDPR. And Felix Van de Maele, CEO of data governance and catalog provider Collibra, says the evidence of a shift to the cloud is unmistakable. “The cloud is growing,” he says. “We see the trend where data is moving to the cloud.”

Where the data resides is important for achieving good governance. Whether the data sits in an S3 bucket in an AWS data center or an Oracle database in an Albuquerque office building, one still needs to track it, manage it, and ensure that it’s accessible and not being mishandled.

While the cloud grows bigger as a repository for data, it doesn’t necessarily mean the data silo problem is going away. In fact, it probably exacerbates the data silo problem, as cloud adoption makes it easier to store data in far-flung places. “The reality is, over the next 10 to 15 years, every company is still going to have data on the premise,” Van de Maele says. “Data moves slow to the cloud.”

If you’re embarking upon a new data-related journey in 2018 (and really, who isn’t?), it’s very likely that the cloud will play a role in it. This inference can be drawn from multiple studies, including reports from Gartner and Forrester that find public cloud storage is growing at between 18% and 19% per year.
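
Those growth rates compound quickly. As a back-of-the-envelope sketch, assuming (purely for illustration) that an 18% to 19% annual rate held constant, the following Python snippet computes the five-year growth multiplier and the implied doubling time. The function names are hypothetical helpers, not part of any cited study.

```python
import math

def compound_growth(rate: float, years: int) -> float:
    """Size multiplier after `years` of growth at a constant annual `rate` (0.18 = 18%)."""
    return (1.0 + rate) ** years

def doubling_time(rate: float) -> float:
    """Years needed to double in size at a constant annual `rate`."""
    # Solve (1 + rate) ** t == 2 for t; log1p(rate) == log(1 + rate).
    return math.log(2.0) / math.log1p(rate)

# Assumption: the growth rate stays fixed every year (real markets rarely do).
for rate in (0.18, 0.19):
    print(f"{rate:.0%}: x{compound_growth(rate, 5):.2f} over 5 years, "
          f"doubles in {doubling_time(rate):.1f} years")
# 18%: x2.29 over 5 years, doubles in 4.2 years
# 19%: x2.39 over 5 years, doubles in 4.0 years
```

At those rates, public cloud storage would grow to roughly 2.3 to 2.4 times its current size within five years, doubling about every four years. Treat this as an illustration of compounding, not a forecast.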

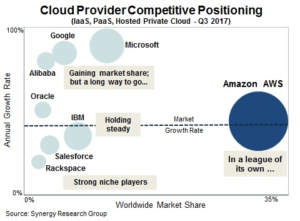

Synergy Research Group has its own set of numbers, and predicted that cloud hosting revenues would grow at a 40% annual rate in 2017. John Dinsdale, a chief analyst and research director at Synergy Research Group, said there’s “something a little shocking about seeing a business unit the size of AWS consistently growing its revenues by over 40%.” Microsoft and Google are growing quickly too, while IBM is gaining ground in hosted private cloud engagements, the research group says.

Another study that paints a picture of strong cloud adoption is The 451 Group’s inaugural Digital Pulse survey, which found that 60% of organizations will house the majority of their IT systems off-premise by the end of 2019. The same survey also pointed to data as the primary driver of new IT investments this year. Business intelligence and analytics topped the list, with 45% of respondents naming it their top IT priority, followed by machine learning and artificial intelligence (29%) and big data (28%).

On the processing front, a big reason why applications are moving to the cloud is the ease of spinning up and spinning down compute resources. This is particularly true in the data science world, where the capability to experiment with new procedures on new pieces of data can be the difference between success and failure.

In this vein, the capability to tap into the management flexibility offered by containers gives the cloud a big advantage. Sure, you can spin up test, dev, and production environments using Docker, Mesos, or Kubernetes in an on-premise system. But the fact is, few organizations have the computer science and data engineering expertise required to put sophisticated virtualized and containerized systems together today. In the cloud, you can ride atop the systems that AWS, Microsoft, and Google have already built, leaving you to focus on the data and your business challenge.

When you combine the fast pace of today’s DevOps environment, the widespread availability of data feedstock for analytics, the power of Kafka to move data, and the sophistication of microservices to plumb complex workflows together, you end up with the ingredients for rapid innovation. The fact that those ingredients are more readily available on cloud platforms gives the cloud another advantage over its on-premise brethren.

“Right now, enterprises without cloud integration capabilities are experiencing digital disruptions and missing the potential for huge productivity gains,” said Chris McNabb, the CEO of Dell Boomi, a provider of data integration and cloud workflow solutions that’s owned by Dell.

All of the major public cloud platforms are embracing advanced analytics on the cloud. That includes machine learning and deep learning automation, as well as real-time streaming analytics.

At its recent AWS re:Invent show, AWS unleashed a torrent of new cloud-based offerings, including: a managed machine learning service called SageMaker; a hosted graph database called Amazon Neptune; a real-time platform for streaming video data called Kinesis Video Streams; and various new deep learning services for identifying objects in videos, and understanding and translating languages. Amazon Kinesis, meanwhile, is gaining steam as a stand-in for Kafka.

Google isn’t resting on its laurels, and last week unveiled Google AutoML, a new service that will use deep learning techniques to automate a range of machine learning tasks. The first service, AutoML Vision, will allow users to train a neural network with their own custom set of images, and then use that model to identify objects in the wild. In terms of real-time stream processing, Google’s Dataflow model is leading the way, particularly since elements of the stack have been open sourced as Apache Beam.

Microsoft may actually hold the lead in terms of advanced and automated machine learning systems that are easy to use and available now. Its primary offering, dubbed Azure Machine Learning Studio, is considered to be both powerful and easy to use. On the streaming front, Microsoft last month announced that it’s supporting Kafka on HDInsight, its Hadoop-based big data platform.

In any event, all three cloud providers will be looking to improve all aspects of their big data storage and processing capabilities in the coming year. But they may not want to take their eyes off IBM, whose cloud business grew 27% last year to $17 billion, according to Martin Schroeter, Big Blue’s senior vice president of global markets.

“Over the last several years, we’ve been making investments and shifting resources, embedding AI and cloud into more of what we offer and building new solutions and modernizing existing ones,” Schroeter told analysts last week on a call about IBM’s quarterly results. “We still believe cognitive solutions is going to be driven by the shift to analytics, the shift to cloud, the shift to security. We see that continuing.”

As the amount of data that companies want to collect, store, and analyze gets bigger, cloud providers will respond with services that make those things relatively easy to do – certainly easier than building them oneself. When you consider that many (if not most) of the disruptive technologies that have fueled the 10-year big-data boom were developed by cloud providers to improve their own data handling capabilities (see: Hadoop, MapReduce, S3, TensorFlow, NoSQL databases, Kafka, Storm, etc.), it becomes clear that the cloud is not just a place to get cheap storage and processing, but is also a source of innovation in its own right.
