Exabytes Hit the Road with AWS Snowmobile

Big data, meet your big rig. Amazon Web Services took containerization technology to new levels yesterday when it unveiled plans to use 45-foot trailers equipped with scads of disk and fast fiber optic connections to help customers upload up to 100 petabytes of data at a time into the cloud.

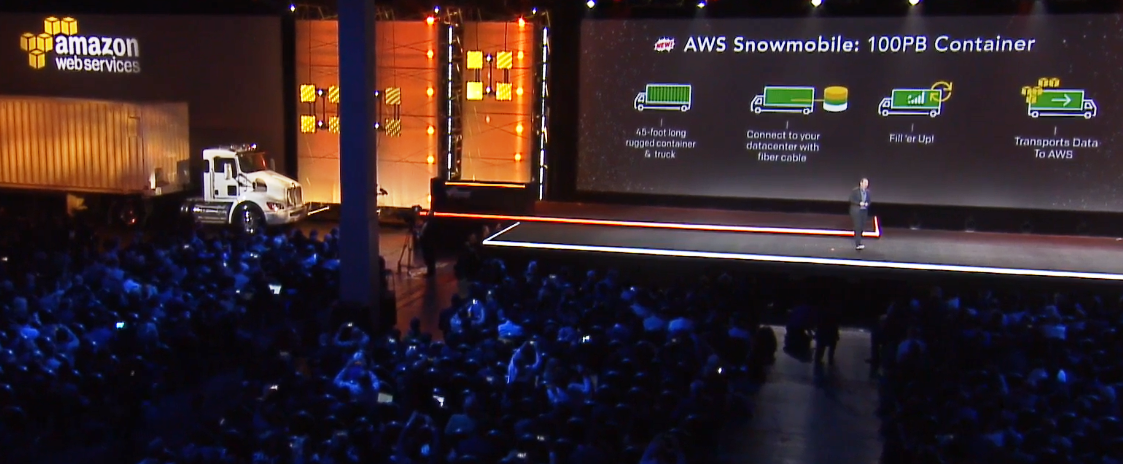

AWS CEO Andy Jassy introduced the new big data big rig, dubbed Snowmobile, yesterday during the AWS re:Invent conference in Las Vegas. The crowd cheered as a semi-truck pulled an AWS Snowmobile trailer onto the stage at the end of Jassy’s two-hour keynote address.

The cloud giant launched the Snowmobile as a follow-on to last year’s launch of the AWS Snowball, a rugged suitcase-sized device designed to allow customers to upload 50TB of data to AWS at a time. The success of the Snowball surprised Jassy, who said he had to increase orders of the devices by 10x to meet demand following the initial rollout.

“A lot of customers are interested in moving petabytes and petabytes of data that way,” Jassy told the crowd. “Many of our customers say, ‘That’s great if I have hundreds of petabytes or dozens of petabytes. What about if I have exabytes of data?’”

The new AWS Snowball Edge combines 100TB of on-board storage with S3, processing, and IoT capabilities

When Jassy launched AWS 10.5 years ago with a team of 57 workers, the idea of customers storing an exabyte of data in the cloud was patently absurd. Who would have that much data? Fast-forward to 2016, and the answer is clear.

“We have a lot of customers who have exabytes of data,” he said. “Today an exabyte of data is a lot more commonplace. You’d be surprised how many companies, large enterprises and successful startups, given the way they collect data, who have exabytes of data.”

But moving an exabyte of data into the AWS cloud using Snowball devices is not feasible, even with the new 100TB Snowball Edge that AWS unveiled yesterday, which includes the equivalent of an EC2 M4 4XL instance and functions as an S3 and IoT endpoint via the new AWS Greengrass initiative. At 100TB apiece, it would take 10,000 Snowball Edges to move 1EB of data.

“We thought about it. It was a tricky one,” Jassy said. “The first thing that came to mind was, we’re going to need a bigger box.”

And that’s when the 45-foot Snowmobile drove out onto the stage, to the roaring approval of the large crowd.

“The way it works, we’ll drive the truck up to your data center,” Jassy said. “We’ll take the Snowmobile fixed to the trailer. We have power and network fiber that we’ll connect to your data center. You fill ’er up, and then the truck will come back, put the trailer back on the truck, and we’ll move it back to AWS.”

The crowd roared as an AWS Snowmobile big rig holding 100PB of data drove onto the stage at AWS re:Invent yesterday

Despite the huge computing advances made over the past decade, moving large amounts of data still poses a thorny logistical problem. As Jassy pointed out, if a company attempted to use a modest 10Gbps link to upload an exabyte of data, it would take 26 years. However, the same amount of data could be moved in just six months using a series of 10 Snowmobile deliveries.
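For readers who want to check the math, here is a rough back-of-the-envelope sketch in Python. It is not from AWS or from Jassy's keynote; it assumes decimal units (1EB = 10^18 bytes), a link that stays fully saturated with no protocol overhead, and uses hypothetical function names chosen purely for illustration. Under those assumptions a 10Gbps link comes out at roughly 25 years for an exabyte, in line with the 26 years quoted on stage.

```python
# Back-of-the-envelope estimate; all names here are illustrative, not an AWS API.
# Assumes decimal storage units and an ideal, fully saturated network link.

SECONDS_PER_YEAR = 365.25 * 24 * 3600

TB = 10**12   # bytes in a terabyte (decimal)
PB = 10**15   # bytes in a petabyte (decimal)
EB = 10**18   # bytes in an exabyte (decimal)


def link_transfer_years(data_bytes, link_bits_per_second):
    """Years needed to push data_bytes over a link at its full rated speed."""
    seconds = data_bytes * 8 / link_bits_per_second
    return seconds / SECONDS_PER_YEAR


def devices_needed(data_bytes, device_capacity_bytes):
    """Number of devices required to hold data_bytes, rounded up."""
    return -(-data_bytes // device_capacity_bytes)  # integer ceiling division


if __name__ == "__main__":
    years = link_transfer_years(EB, 10 * 10**9)  # 10Gbps link
    print(f"1EB over 10Gbps: about {years:.1f} years")
    print(f"Snowball Edges (100TB each): {devices_needed(EB, 100 * TB)}")
    print(f"Snowmobiles (100PB each): {devices_needed(EB, 100 * PB)}")
```

Real-world throughput would be lower than the rated link speed, so the network figure is best read as a floor rather than a forecast.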

This is the future of big data, and it runs on diesel. “You would not believe how many companies now have exabytes of data that they want to move to the cloud because they want to take advantage of storage services that we have, and they want to take advantage of the database services we have and they want to take advantage of the huge number of analytic services we have and AI services,” Jassy said.

Twenty-six years doesn’t cut it. “Moving exabytes of data before was completely unreasonable,” he said. But with the Snowmobile, at least it’s possible.
