Barcelona Supercomputing Center Powers Encrypted Neural Networks with Intel Tech

Homomorphic encryption provides two extraordinary benefits: first, it has the potential to remain secure even against attacks by quantum computers; second, it allows computation on data without decrypting it, so sensitive data can be offloaded to commercial clouds and other external locations. However, as researchers from Intel and the Barcelona Supercomputing Center (BSC) explained, homomorphic encryption “is not exempt from drawbacks that render it currently impractical in many scenarios,” including that “the size of the data increases fiercely when encrypted,” limiting its application for large neural networks.
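To make the idea of computing on encrypted data concrete, here is a minimal sketch in Python. It uses a toy version of the Paillier cryptosystem, which is only additively homomorphic and is an assumption chosen for brevity, not the scheme the BSC and Intel researchers used. The primes are far too small to be secure, and the function names (`keygen`, `encrypt`, `decrypt`, `encrypted_dot`) are hypothetical. The sketch computes a weighted sum, the core operation of a neural network layer, on ciphertexts that the "server" never decrypts.

```python
import math
import random

def keygen(p=1789, q=1861):
    """Toy Paillier key generation. The primes are illustrative and insecure."""
    n = p * q
    lam = math.lcm(p - 1, q - 1)   # private exponent
    mu = pow(lam, -1, n)           # valid because the generator is g = n + 1
    return n, (lam, mu)

def encrypt(n, m, rng):
    """Encrypt integer m (0 <= m < n) under public key n."""
    n2 = n * n
    while True:
        r = rng.randrange(1, n)
        if math.gcd(r, n) == 1:
            break
    return (pow(n + 1, m, n2) * pow(r, n, n2)) % n2

def decrypt(n, priv, c):
    """Recover the plaintext from ciphertext c."""
    lam, mu = priv
    n2 = n * n
    return ((pow(c, lam, n2) - 1) // n * mu) % n

def encrypted_dot(n, ciphertexts, weights):
    """Weighted sum of encrypted inputs with plaintext, non-negative integer weights.

    Multiplying ciphertexts adds the plaintexts; raising a ciphertext to
    the power w multiplies its plaintext by w. No private key is needed.
    """
    n2 = n * n
    acc = 1  # a trivial encryption of 0
    for c, w in zip(ciphertexts, weights):
        acc = (acc * pow(c, w, n2)) % n2
    return acc

if __name__ == "__main__":
    rng = random.Random(0)
    n, priv = keygen()
    inputs, weights = [3, 5, 7], [2, 4, 6]
    cts = [encrypt(n, x, rng) for x in inputs]   # client side
    result_ct = encrypted_dot(n, cts, weights)   # server side, no decryption
    assert decrypt(n, priv, result_ct) == sum(x * w for x, w in zip(inputs, weights))
```

Even in this toy, each ciphertext is a number modulo n², roughly twice the bit length of the modulus, while the inputs are single-digit integers. Schemes that support full neural network inference expand data far more, which is the overhead the researchers describe.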

Now, that might be changing: BSC and Intel have, for the first time, executed homomorphically encrypted large neural networks.

“Homomorphic encryption … enables inference using encrypted data but it incurs 100x–10,000x memory and runtime overheads,” the authors wrote in their paper. “Secure deep neural network … inference using [homomorphic encryption] is currently limited by computing and memory resources, with frameworks requiring hundreds of gigabytes of DRAM to evaluate small models.”

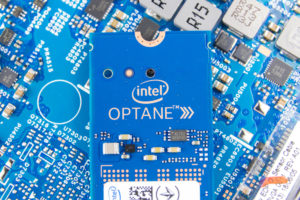

To run these encrypted networks, the researchers deployed Intel tech: specifically, Intel Optane persistent memory and Intel Xeon Scalable processors. The Optane memory was combined with DRAM, pairing the higher capacity of persistent memory with the faster speed of DRAM. They tested the combination in a variety of configurations to run large neural networks, including ResNet-50 (now the largest neural network ever run using homomorphic encryption) and the largest variant of MobileNetV2. Following the experiments, they landed on a configuration that used just a third of the DRAM but showed only a 10 percent drop in performance relative to a full-DRAM system.

“This new technology will allow the general use of neural networks in cloud environments, including, for the first time, where indisputable confidentiality is required for the data or the neural network model,” said Antonio J. Peña, the BSC researcher who led the study and head of BSC’s Accelerators and Communications for High Performance Computing Team.

“The computation is both compute-intensive and memory-intensive,” added Fabian Boemer, a technical lead at Intel supporting this research. “To speed up the bottleneck of memory access, we are investigating different memory architectures that allow better near-memory computing. This work is an important first step to solving this often-overlooked challenge. Among other technologies, we are investigating the use [of] Intel Optane persistent memory to keep constantly accessed data close to the processor during the evaluation.”

To learn more about this research, read the full research paper.
