AI Opens Door to Expanded Use of LIDAR Data

When it comes to big data, LIDAR is right up there with the biggest generators. But outside of a few niche use cases, LIDAR–which uses lasers to build a three-dimensional model of physical objects–has not been widely adopted. Now, a San Francisco startup called Enview is hoping to change that, starting with today’s launch of its Web-based AI service for analyzing LIDAR data.

San Gunawardana is eminently familiar with LIDAR (which stands for Light Detection and Ranging) and other types of geospatial data. He started his career in the U.S. Air Force, where he built satellites, then moved to Stanford University, where he earned his PhD in aerospace engineering in 2012. He received funding from NASA to develop computer vision and NLP technology for UAVs, then went “downrange” to Afghanistan as a civilian with the Army before returning to the United States to co-found Enview.

Along the way, Gunawardana developed an appreciation not only for the massive volume of LIDAR data (particularly when it’s collected at its highest fidelity), but also for the huge potential that LIDAR has to benefit companies, people, and society.

“LIDAR technology has been around for decades,” Gunawardana tells Datanami, “but there are some big changes happening that are really revolutionizing what we can do with this.”

The costs associated with building and using LIDAR systems are coming down, and LIDAR is being used in more applications. For example, the latest-generation iPhones and iPads feature LIDAR sensors, which are intended for use with augmented reality applications.

“It’s becoming easier and cheaper to collect more and more data,” Gunawardana says. “But we don’t, and the reason we don’t is because the data is extraordinarily complex. It’s large in scale. It’s a true big data problem.”

Enview works with companies in the electricity and natural gas distribution industry to analyze LIDAR images of power lines and pipelines. LIDAR can identify anywhere from 50 million to 250 million objects per mile, and the objects in these “point clouds” historically have been manually labeled.

“There have been incredible advances in 2D computer vision and image processing,” Gunawardana says. “The challenge is, the entire body of literature for image processing is based on the assumption that you have structured data. Unfortunately, LIDAR data is unstructured, so you can’t just throw a pile of this into your typical AI framework and have it work.”
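To make the structured-versus-unstructured distinction concrete, here is a minimal sketch in plain Python. It is not Enview's method: the `voxelize` helper, the toy coordinates, and the 1.0 m voxel size are all assumptions made for illustration. A point cloud is an unordered list of (x, y, z) coordinates, so shuffling it leaves the scene unchanged, whereas an image depends on each pixel's position in a grid. Bucketing points into voxels is one common way to impose a regular structure on such data.

```python
import math
import random
from collections import Counter

def voxelize(points, voxel_size):
    """Count how many points fall into each cube of side `voxel_size`."""
    counts = Counter()
    for x, y, z in points:
        key = (math.floor(x / voxel_size),
               math.floor(y / voxel_size),
               math.floor(z / voxel_size))
        counts[key] += 1
    return dict(counts)

# Toy point cloud (hypothetical values, in meters): just a list of coordinates.
cloud = [(0.2, 0.1, 0.0), (0.4, 0.3, 0.1), (5.1, 0.2, 7.9), (5.3, 0.4, 8.2)]

# Reordering the points changes the list but not the scene it describes.
shuffled = random.sample(cloud, k=len(cloud))

# The voxel grid is a structured view that does not depend on point order.
same_scene = voxelize(cloud, 1.0) == voxelize(shuffled, 1.0)
print(same_scene)
```

The grid view discards detail below the voxel size, which is one reason approaches that work directly on raw points also exist.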

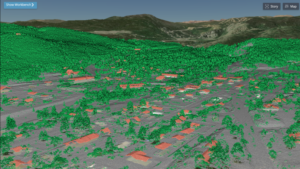

What Enview has done is develop an AI system that automates the processing of LIDAR data. Running atop a collection of CPUs and GPUs, its convolutional neural network can identify real-world objects embedded in “3D point clouds,” such as power poles, pipelines, buildings, bridges, trees, and vehicles.

Up to this point, Enview has used this AI system as part of consulting engagements. With today’s launch of Enview Explore, the company is now exposing the AI to a wider audience via the Web. Anybody with a Web browser can now load their LIDAR data into Enview’s AI system and receive the output faster and in an automated fashion.

“The application itself will give users the ability to take a raw point cloud and do what we call segmentation, or classification,” says Anthony Calmito, a vice president with Enview. “Instead of manually understanding what each of those dots is, our AI will come back and say, out of this point cloud, these represent trees, cars, or building. So things like feature extraction can be highly automated, with high accuracy and high detail, because you’re using a 3D model rather than a traditional 2D model.”
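As a rough illustration of what segmentation output can look like, the sketch below assumes the result is one class label per point. The label names, the coordinates, and the `group_by_class` and `max_height` helpers are hypothetical, and the labels are hard-coded rather than produced by any model. Once every point carries a label, simple feature extraction becomes a matter of grouping and measuring.

```python
from collections import defaultdict

# Hypothetical segmentation result: one class label per (x, y, z) point.
points = [(1.0, 2.0, 0.1), (1.1, 2.0, 6.5), (1.2, 2.1, 7.0),
          (8.0, 3.0, 1.4), (20.0, 5.0, 9.0)]
labels = ["ground", "tree", "tree", "vehicle", "power_line"]

def group_by_class(points, labels):
    """Collect the points that belong to each class label."""
    if len(points) != len(labels):
        raise ValueError("expected exactly one label per point")
    groups = defaultdict(list)
    for point, label in zip(points, labels):
        groups[label].append(point)
    return dict(groups)

def max_height(points):
    """Return the largest z value among the given points."""
    return max(z for _, _, z in points)

segments = group_by_class(points, labels)
tree_top = max_height(segments["tree"])  # highest point labeled as tree
print(sorted(segments), tree_top)
```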

The system runs on the AWS cloud, and utilizes CPU and GPU resources behind the scenes. According to Gunawardana, the system “leverages a fairly complex stack of cascading machine learning frameworks” that run in parallel and can scale up and down depending on demand. The system currently requires users to upload their data, but the company is working on exposing its deep learning capabilities via an API. It also envisions allowing partners to partake of the deep learning LIDAR pipelines with software plug-ins.

By eliminating the need for LIDAR data experts, Enview’s new AI could revolutionize how LIDAR data is analyzed and empower customers to see the world in a new light, Gunawardana says.

“In the olden days, you would have to have a PhD in machine learning and know how to use a command line prompt to kick off this 10,000 GPU process, and we would generate insights and hand deliver them back to customers,” he says. “But our vision at Enview has been to empower people, particularly the operational end user, with the confidence and ability to perceive and navigate the world that’s changing very rapidly around us.”

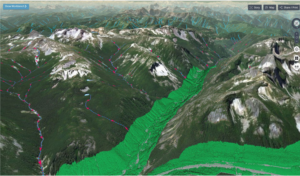

One of Enview’s customers would manually inspect thousands of miles of power lines to ensure that it was aware of threats, such as potential landslides or trees touching the wires. Using LIDAR sensors to take before-and-after images of its physical infrastructure, and then Enview’s AI to automate the interpretation of those images, saves the company millions of dollars.
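A before-and-after comparison of this kind can be sketched as a difference between two sets of occupied voxels. This is a simplified illustration under stated assumptions, not a description of Enview's pipeline: the `occupied_voxels` and `diff_scans` helpers and the toy scans are hypothetical, and the two scans are assumed to be already aligned to the same coordinate system. Real scans would also need tolerance for sensor noise.

```python
import math

def occupied_voxels(points, voxel_size):
    """Return the set of voxel indices that contain at least one point."""
    return {tuple(math.floor(c / voxel_size) for c in point)
            for point in points}

def diff_scans(before, after, voxel_size=1.0):
    """Report voxels that became occupied or became empty between scans."""
    b = occupied_voxels(before, voxel_size)
    a = occupied_voxels(after, voxel_size)
    return {"appeared": a - b, "vanished": b - a}

# Toy scans (hypothetical, in meters): a column of points grows taller,
# and an object a few meters away is no longer present.
before = [(0.5, 0.5, 0.5), (0.5, 0.5, 1.5), (4.5, 0.5, 0.5)]
after = [(0.5, 0.5, 0.5), (0.5, 0.5, 1.5), (0.5, 0.5, 2.5)]

changes = diff_scans(before, after)
print(changes)
```

Combined with per-point class labels, a check like this could flag, for example, newly occupied space close to points labeled as wires.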

“As the collection and sensor capabilities become widely available, there’s this opportunity to massively unlock this previously manual workflow through use of a novel category of AI to enable us to maintain these living 3D models of the world, in both high fidelity and frequency,” Gunawardana says.

As a society and as a species, we are sitting on the cusp of massive new capabilities thanks to LIDAR, Gunawardana says. For example, we could generate high-resolution 3D scans of the entire Gulf of Mexico to see how a major hurricane changed the landform. LIDAR could also be used to scan the Western Forest before and after wildfire season to determine the season’s impacts.

Mother Nature won’t stop threatening humankind, particularly when people continue to build and live in areas that are prone to natural disasters. But with more widespread LIDAR data and AI-based processing methods, we have the potential to reduce the risks that Mother Nature throws at us.

“Not only are we out there trying to develop these incredible advances that move the state of the possible forward in AI, but it’s really important to us personally to apply these to real-world problems that make a genuine operational impact,” Gunawardana says. “Geospatial is difficult, and it has been very rewarding to harness this sort of new technology and use it to help people.”
