Is Kubernetes Overhyped?

(Mia-Stendal/Shutterstock)

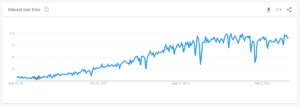

The amount of attention paid to Kubernetes has increased substantially over the past couple of years. What started out as a relatively obscure container management system open sourced by Google has turned into the must-have technology for running machine learning and advanced analytics applications, among other workloads. But is Kubernetes the real deal? Will K8s deliver on the hype, or turn into just another once-shiny thing that lost its luster?

Kubernetes certainly seems to be the right technology for the right time. One of the biggest business drivers today in enterprise computing is the rise of the cloud. Companies have mandates from their boards to move to the cloud and take advantage of its flexible and adaptable infrastructure, whether it’s public cloud services or private cloud offerings.

But there are technological obstacles that must be overcome before a company can get the most out of the cloud’s flexible and scalable environment. For starters, existing applications need to be adapted (or new apps developed from scratch) to fit the way today’s clouds were designed to run software: in self-contained units of infrastructure called containers (e.g., Docker containers) that can be started, stopped, scaled up, scaled down, and moved without impacting the underlying application.

Google developed Kubernetes (K8s for short) as an orchestration layer for managing large numbers of Docker containers. It quickly became the de facto standard, winning out over rival approaches such as Mesos, as well as homegrown container management methods.

Adoption of Kubernetes and containers has skyrocketed in recent years. According to a recent survey from the Cloud Native Computing Foundation (CNCF), 84% of companies are using containers in production this year, up from 23% in 2016. Nearly 80% of them are using Kubernetes to manage those containers.

The major cloud providers quickly developed their own Kubernetes distributions based on the open source code Google released, including Google Cloud’s Google Kubernetes Engine (GKE), Amazon Web Services’ Elastic Kubernetes Service (EKS), and Microsoft Azure’s Azure Kubernetes Service (AKS). Other K8s distributions have popped up too, including Red Hat’s OpenShift, Rancher from Rancher Labs, and Cloud Foundry, among others.

In the Hadoop ecosystem, K8s has overtaken YARN as the most-used resource scheduler, at least for cloud deployments. Cloudera is also adopting K8s for on-prem Hadoop clusters (which also increasingly use S3-compatible storage), although it’s retaining YARN for some workloads.

Vendors that once supported Hadoop as the vehicle for bringing their advanced analytics and AI applications to market have flocked to Kubernetes. For example, Splice Machine recently announced the delivery of a Kubernetes operator that enables its platform (which leverages “Hadoop” components like HBase, Spark, and Zookeeper) to run on clusters managed by Kubernetes.

According to Splice Machine CEO Monte Zweben, the productivity boost that Kubernetes has brought his DevOps team is worth the price of entry for K8s.

“I saw this firsthand,” Zweben told Datanami recently. “The ability of my DevOps team went up an order of magnitude with Kubernetes. They are so much more productive with the Kubernetes tools, and the richness of the community is unbelievable.”

Developing and running distributed frameworks at large scale is notoriously fraught with complexity. We saw this during Hadoop’s heyday, when developers at the Hadoop distributors were tasked with maintaining integration among 30-odd fast-moving frameworks. The complexity essentially doomed that Hadoop style of computing to collapse under its own weight, and led directly to the rise of cloud computing and Kubernetes.

As an active participant in that Hadoop computing wave, Zweben personally experienced those difficulties, and he swears that Kubernetes provides a much better way.

“For example, HBase, which is under the covers of Splice Machine, and Zookeeper and Spark are very particular about when they’re coming up live, what happens first,” Zweben explained. “And you can encode that automation extremely cleanly in a Kubernetes operator. So the self-healing, declarative capability of that to provision, manage, and operate was so easy for my team to do on the new Kubernetes infrastructure coming from the community. I just don’t believe it’s overhyped.”
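To make the idea concrete, here is a toy sketch of the pattern Zweben describes: a declarative spec (which components should be up) plus a reconcile pass that starts missing components in dependency order and stops unwanted ones. It is written in plain Python with no cluster access; it is not Splice Machine’s operator, and the dependency graph (ZooKeeper before HBase, HBase before Spark) is an illustrative assumption. Real Kubernetes operators run this kind of loop continuously against the Kubernetes API.

```python
from graphlib import TopologicalSorter  # Python 3.9+

# Hypothetical dependency graph (an illustrative assumption, not Splice Machine's
# actual configuration): each component maps to the set of components that must
# already be running before it can start.
DEPENDENCIES = {
    "zookeeper": set(),
    "hbase": {"zookeeper"},
    "spark": {"hbase"},
}


def startup_order(deps):
    """Order components so that each one comes after all of its dependencies."""
    return list(TopologicalSorter(deps).static_order())


def reconcile(desired, running, deps):
    """One pass of a toy reconcile loop.

    `desired` is the declarative spec (which components should be up) and
    `running` is what is currently observed. Returns the actions that would
    move the observed state toward the spec.
    """
    order = startup_order(deps)
    up = set(running)
    actions = []
    # Start missing components in dependency order.
    for name in order:
        if name in desired and name not in up:
            if deps[name] <= up:
                actions.append(("start", name))
                up.add(name)  # later components may depend on this one
            else:
                # A dependency is neither running nor in the spec, so don't start it.
                actions.append(("blocked", name))
    # Stop components that are no longer wanted, dependents before dependencies.
    for name in reversed(order):
        if name in up and name not in desired:
            actions.append(("stop", name))
            up.discard(name)
    return actions


if __name__ == "__main__":
    everything = {"zookeeper", "hbase", "spark"}
    # Fresh cluster: bring everything up in dependency order.
    print(reconcile(everything, set(), DEPENDENCIES))
    # "Self-healing": HBase has crashed, so only HBase is restarted.
    print(reconcile(everything, {"zookeeper", "spark"}, DEPENDENCIES))
```

The point of the declarative style is that the same function handles a fresh install, a crashed component, and a scale-down: the operator only compares desired state with observed state and emits the difference.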

Zweben is also impressed by Helm charts, which package an application’s Kubernetes resource definitions into a configurable bundle that can be installed on a cluster in a single step. Splice Machine customers can develop their own Helm charts, which could package a machine learning model or a Kafka queue pushing data from an oil rig, and easily deploy them at scale. “This is really creating a new level of agility that you didn’t have before,” Zweben said.

“I don’t think it’s overhyped,” he continued. “I think it’s important. It soon will become commonplace. It will be table stakes, just like Linux is. It will just be the way you do things, and I don’t think it will be as big a deal in the press anymore.”

But not everybody is convinced that all the hype around Kubernetes is justified, at least for all applications and all customers. Ankur Singla, the founder and CEO of infrastructure services firm Volterra, has mixed feelings about Kubernetes.

“Overhyped might not be the right word,” Singla told Datanami in a recent interview. “Is a mainstream enterprise ready to wholesale embrace and adopt Kubernetes? I think that’s the big challenge. That will take time…”

Kubernetes is becoming a must-have for managing large-scale application deployments in the cloud. (RoboLab/Shutterstock)

Volterra develops a pair of solutions, called VoltMesh and VoltStack, that allow companies to take advantage of Kubernetes-enabled cloud computing environments in edge and multi-cloud deployments without worrying about the operational complexity that comes with Kubernetes. Volterra uses Kubernetes inside both products, which expose either a full application stack (VoltStack) or networking and security services (VoltMesh). But it hides the Kubernetes complexity from its customers, which include large financial services firms, gaming companies, and ecommerce outfits.

“I would say 80% of our customers don’t care about Kubernetes and 20% care about Kubernetes,” Singla said. “Those that don’t care about Kubernetes, we’ve tried to abstract the application management in such a way that, even if they’re working with containers or VM workloads, they don’t have to care about what is the underlying API that they have to use….We will manage this for the customer.”

While Kubernetes elegantly solves the problem of scaling large numbers of sizable workloads in cloud environments, it brings a level of DevOps complexity that smaller companies are ill-equipped to deal with. For example, Volterra employs 25 DevOps engineers to work with Kubernetes, which allows 100 other engineers to focus on their work. “They don’t want to deal with the operational complexity of Kubernetes clusters or managing them,” Singla said.

In addition to companies’ lack of DevOps expertise, many applications just aren’t a good fit for something like Kubernetes, Singla said.

“The reason for Kubernetes is you want to deploy lots of different containers. You want all of them to talk to each other. You want them to be re-startable. You want them to scale independently,” Singla said. “Those are really good use cases for Kubernetes. But it may not be required for someone who is running just one container and he wants to just set it on his laptop. Why does he need to run a Kubernetes cluster on his laptop for that use case? Probably not.”

Running Kubernetes on the edge is a hit-or-miss affair. One of Volterra’s customers runs 17,000 convenience stores in Japan and uses video analytics to take inventory. That deployment required substantial computing and networking resources at each edge location, and the company leaned on Volterra’s solutions (software pre-configured on hardware devices) to operate those 17,000 deployments as a single cloud.

“For example, if you have a water meter, does a water meter gateway need Kubernetes environment?” Singla asked. “Probably not. It’s only running one or two applications or workloads. Do they need to be containerized? Probably yes, because it’s easier to manage them and operate them. But it doesn’t mean that you need a Kubernetes environment there to run it.

“But on the other hand, if you were running a video analytics application or building a robot, there you may actually have many workloads running on the edge, and there for sure you would need some kind of a workloads orchestration and management system and that’s where Kubernetes is really good.”

In Singla’s view, Kubernetes is an essential component for running complex apps at large scale, but it’s not usable by everybody, and it’s not necessary for every application. “I think it might be overkill, is the right word,” he said.
