Putting Continual Learning on AI’s Map

(Bakhtiar Zein/Shutterstock)

The capability of a machine to “learn” on its own is the subject of some debate. With traditional supervised machine learning, decisions can be optimized, but the machine isn’t really learning by itself. Now a startup called Cogitai is hoping to push the limits of a machine’s capability to learn continuously using reinforcement learning techniques.

Cogitai was founded in 2015 by some of the earliest innovators in the reinforcement learning (RL) field, including Mark Ring, Peter Stone, and Pete Wurman. The Orange County, California company is hoping to leverage the collective RL knowledge of its founders and the 15 or so PhD computer scientists at the firm to change the course of AI applications.

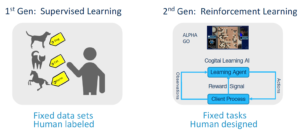

Ring, Cogitai’s CEO, paints a stark contrast between the supervised machine learning (ML) that he says powers 99% of AI applications today, and the newer RL approaches that Cogitai is taking with its cloud-based platform, called Continua, which becomes generally available today.

“Supervised learning data has to be labeled by people, by humans who provided the data labels, then algorithms are really just finding patterns in the data that match the labels supplied by the people,” Ring tells Datanami.

“It’s extremely powerful,” he continues, “but also very limited. In particular, the ability to make decisions and take actions in the world and then to learn from that decision-making process is completely missing from that picture.”

Reinforcing Learning

Cogitai is utilizing reinforcement learning techniques to power an array of AI applications via its Continua cloud service.

RL is a branch of machine learning that has been getting lots of attention recently, especially in certain industrial use cases, such as robotic process automation. Unlike supervised ML, where a data scientist is typically employed to periodically retrain the algorithm to keep its accuracy high, RL builds the feedback loop directly into the learning process.

While a supervised ML algorithm will look for external cues to gauge its accuracy or performance – from a data scientist or the line of business – an RL algorithm has a built-in feedback mechanism that allows it to continually adjust its settings and try new approaches to reach a desired outcome. This is an important distinction that Cogitai hopes to exploit with its new cloud service.

The key component in Cogitai’s RL system is a learning agent. According to Ring, the Continua learning agent determines what actions to take and then passes those decisions back to the client. After the client executes the action, the result from that action is sent back to the learning agent, which assesses whether it met certain performance metrics determined by the client.

“The learning agent tries to maximize that performance metric,” Ring says. “It tries to increase that metric as much as possible by taking different actions in different situations that lead to the maximal possible reward signal.”
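The loop Ring describes (the agent picks an action, the client executes it, and the resulting performance metric flows back to the agent) can be sketched in a few lines of Python. This is only an illustrative toy, not Cogitai's Continua API, which the article does not detail. The class `EpsilonGreedyAgent`, the function `client_execute`, the action names, and the reward values are all hypothetical, and epsilon-greedy action selection is a textbook technique swapped in here for whatever method Continua actually uses.

```python
import random


class EpsilonGreedyAgent:
    """Hypothetical learning agent that tracks the average reward of each action."""

    def __init__(self, actions, epsilon=0.1, seed=0):
        self.actions = list(actions)
        self.epsilon = epsilon              # probability of trying a random action
        self.rng = random.Random(seed)
        self.counts = {a: 0 for a in self.actions}
        self.values = {a: 0.0 for a in self.actions}

    def choose_action(self):
        # Explore occasionally; otherwise exploit the best-known action.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.actions)
        return max(self.actions, key=lambda a: self.values[a])

    def observe(self, action, reward):
        # Incremental running mean of the reward seen for this action.
        self.counts[action] += 1
        self.values[action] += (reward - self.values[action]) / self.counts[action]


def client_execute(action, rng):
    """Hypothetical client: runs the action and reports a noisy performance metric."""
    true_mean = {"slow": 0.2, "medium": 0.5, "fast": 0.8}[action]  # made-up values
    return true_mean + rng.gauss(0, 0.1)


def run(steps=2000, seed=0):
    """Run the agent/client feedback loop and return the trained agent."""
    client_rng = random.Random(seed + 1)
    agent = EpsilonGreedyAgent(["slow", "medium", "fast"], epsilon=0.1, seed=seed)
    for _ in range(steps):
        action = agent.choose_action()                # agent decides
        reward = client_execute(action, client_rng)   # client acts and measures
        agent.observe(action, reward)                 # result flows back to the agent
    return agent


if __name__ == "__main__":
    trained = run()
    for name in trained.actions:
        print(name, trained.counts[name], round(trained.values[name], 3))
```

No one labels the correct action in advance. The agent's estimates improve only through the rewards the client reports, which is the built-in feedback loop that separates RL from supervised learning.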

This RL approach allowed Google to train a computer model called AlphaGo to beat the world champion at the board game Go. After iterating across millions of games, AlphaGo was able to optimize its decision making, without any direct input from human programmers.

In other words, the system learned not only what works, but what doesn’t. This RL capability to recall success and build skills worked spectacularly well for Google’s video game killer, Ring says, and the same process can be applied to address specific industrial challenges, such as automobile control systems, manufacturing optimization, and logistics operations, among others.

Continual Learning

The benefits of RL have been well-documented, and so have its limitations. The folks at Cogitai are hoping to blow past some of those limitations to take RL into the realm of continual learning.

“It’s taking RL one step further,” Ring says. “Instead of just learning a task, which is what RL allows you to do, it’s learning skills. It’s an important distinction that once you’ve learned to do something, you want to be able to keep it around.”

Once an AI is able to recall the tasks that it has already learned, it will be able to build upon those tasks. That’s not an easy thing to do with computers and robots, Ring says.

“It seems really trivial to us,” he says. “It seems like something that any system should be able to do. It seems like a no brainer. But unfortunately, built into the theory of RL [is] why it’s very difficult to do that. Being able to go beyond that requires a completely different framework, different structure, different way of doing things to get to a place where skills can be built up and reused and built upon in new ways.”

Cogitai has worked with several customers already. The company, which was backed by Sony, is ramping up its Continua service, with a focus on improving processes, systems, and software bots.

The progress we’ve made in supervised machine learning over the past 10 years has been phenomenal, Ring says, and people are getting lots of use out of it. But we’ve only just started exploring the tip of the iceberg of what AI has in store for us, he says.

“I think when we look back in 10 to 20 years at the state of AI today, we’ll see that supervised learning was just the first step,” he says. “It was just a tiny sliver of everything that was possible. Once we allow AI systems to start making decisions on their own, and then improving their behavior, improving their decisions based on how well things turned out, then the application to industry will explode. It’s going to dwarf what was possible with supervised learning alone.”
