Intel Unveils Nauta, a DL Framework for Containerized Clusters

Intel today unveiled Nauta, a new distributed computing framework for running deep learning models in Kubernetes and Docker-based environments. The chip giant says Nauta will make it easier for data scientists to develop, train, and deploy deep learning workloads on large clusters, “without all the systems overhead and scripting needed with standard container environments.”

Deep learning has become one of the hottest areas of machine learning, thanks to its capability to train highly accurate predictive models in areas like computer vision and natural language processing. The technique, however, generally requires huge amounts of data and large amounts of computing horsepower, which today’s enterprises typically want to manage using containers.

Getting all these pieces (Kubernetes, Docker, and deep learning frameworks) to work together is difficult. Now Intel is hoping to simplify the effort with Nauta.

Nauta (pronounced “Now-Tah”) provides a way to run deep learning workloads on large, distributed computer clusters managed by Kubernetes, the popular orchestration tool used atop containerized infrastructure. Nauta is essentially a commercial implementation of Kubeflow, the open source machine learning toolkit for Kubernetes that was developed by Google, creator of Kubernetes.

According to Carlos Morales, senior director of deep learning systems at Intel, Nauta is an enterprise-grade product that will help make data science teams more productive in developing, training, and deploying deep learning workloads in containerized environments.

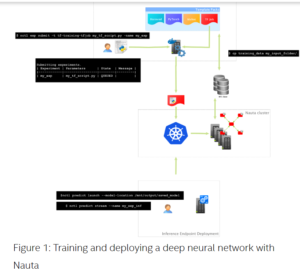

“With Nauta, users can define and schedule containerized deep learning experiments using Kubernetes on single or multiple worker nodes, and check the status and results of those experiments to further adjust and run additional experiments, or prepare the trained model for deployment,” Morales says in a blog.

“At every level of abstraction, developers still have the opportunity to fall back to Kubernetes and use primitives directly,” he continues. “Nauta gives newcomers to Kubernetes the ability to experiment – while maintaining guard rails.”
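For readers unfamiliar with what "using primitives directly" involves, the following is a minimal sketch of a containerized training run expressed as a plain Kubernetes Job manifest. It is not Nauta's API or template format. The function name `build_training_job`, the image name, the label, and the training command are all hypothetical and chosen only for illustration. Note that setting `parallelism` simply launches several independent pods; real multi-node training also needs a coordination layer of the kind the supported frameworks provide.

```python
import json


def build_training_job(name, image, command, workers=1):
    """Return a Kubernetes batch/v1 Job manifest as a plain dict.

    Hypothetical helper for illustration; not part of Nauta or Kubeflow.
    """
    if workers < 1:
        raise ValueError("workers must be at least 1")
    return {
        "apiVersion": "batch/v1",
        "kind": "Job",
        "metadata": {"name": name, "labels": {"app": "dl-experiment"}},
        "spec": {
            # Run `workers` pods at once and require all of them to finish.
            "parallelism": workers,
            "completions": workers,
            # Do not retry failed pods; a failed experiment stays failed.
            "backoffLimit": 0,
            "template": {
                "spec": {
                    "restartPolicy": "Never",
                    "containers": [
                        {
                            "name": "trainer",
                            "image": image,
                            "command": list(command),
                        }
                    ],
                }
            },
        },
    }


if __name__ == "__main__":
    # Assumed image and command; replace with a real training container.
    manifest = build_training_job(
        name="mnist-experiment",
        image="example.com/team/mnist-train:latest",
        command=["python", "train.py", "--epochs", "5"],
        workers=2,
    )
    print(json.dumps(manifest, indent=2))
```

Because `kubectl` accepts JSON as well as YAML, the printed manifest could be piped to `kubectl apply -f -` on a cluster where the image exists. Writing and maintaining manifests like this by hand is the kind of scripting overhead Intel says Nauta is meant to hide.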

Nauta features customizable deep learning model templates that support popular deep learning development frameworks, including TensorFlow, MXNet, PyTorch, and Horovod. The software enables developers to run deep learning experiments on single or multi-node systems, in batch or streaming mode, all in a single platform, Morales says.

Nauta sports a bevy of user interfaces, including a Web UI and command line interface, which “reduces concerns about the production readiness, configuration and interoperability of open source DL services,” Morales continues. In addition, Intel is supporting TensorBoard, a graphical user interface (GUI) that helps users visualize TensorFlow programs, with an eye to understanding and debugging them.

Developers can create deep learning applications using their favorite environment, including Jupyter, a popular data science notebook environment, according to a video on Intel’s Nauta landing page. Multiple users can interact with Nauta simultaneously, and the product facilitates sharing of inputs and outputs among team members, Morales writes.

Intel unveiled Nauta in conjunction with its AI DevCon event, which took place today in Munich, Germany. The company is distributing Nauta under an Apache 2.0 license. The project, which is managed on GitHub, will see updates throughout 2019, including some coming before the end of the first quarter.

Related Items:

Deep Learning: The Confluence of Big Data, Big Models, Big Compute

Deep Learning Is Great, But Use Cases Remain Narrow

Inside Intel’s nGraph, a Universal Deep Learning Compiler