Anaconda Taps Containers to Simplify Data Science Deployments

Anaconda (formerly Continuum Analytics) today unveiled a new release of its commercial data science platform that leverages Docker and Kubernetes containerization technology to allow data scientists to deploy models to production environments with a single click.

It takes more than a click to get Anaconda Enterprise 5 installed and set up, of course. But once it’s up and running, the process of provisioning resources and deploying data science models into a production environment can be accomplished with a single button press, Anaconda claims.

Anaconda Enterprise 5, the company’s flagship commercial offering for streamlining production deployment of models that data scientists create with its open source Anaconda toolset, is all about delivering an “integrated experience,” according to Anaconda CTO Peter Wang.

“You can take a data science project with notebooks, Python or R scripts, or interactive dashboard applications, and you can go and one-click deploy them,” Wang tells Datanami. “You can also look at all the deployed instances you have. You can kill them, or share a live running instance with collaborators…The whole thing is an integrated environment.”

Prior to this release, data scientists would commonly spend weeks or months collaborating with their company’s IT and application development teams to get a particular data science model deployed into production.

Anaconda Enterprise 5 uses Docker and Kubernetes under the covers to automate the deployment of highly tuned environments to x86 laptops, servers, or even clusters of servers running Apache Hadoop or Apache Spark. That largely eliminates the need for this extra work, freeing data scientists to spend more time on data science and less on understanding the technologies and processes of enterprise IT environments.

Wang says the software is particularly useful in more complex environments, such as when a data scientist wants to start experimenting with different Python or R packages within the open source Anaconda toolset, or when multiple data scientists or teams of data scientists want to use different data science tools.

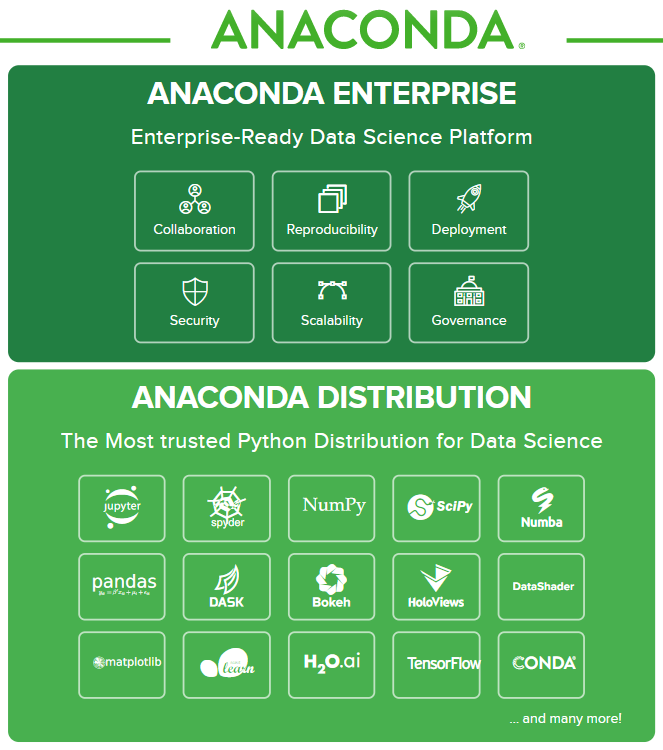

Anaconda Enterprise provides complementary capabilities for users of the open source Anaconda distribution

“Even in cases where you have a good IT team, they can get it configured once,” he says. “But when you have a couple of production jobs to keep running and then you start using new versions of libraries that just came out to do other stuff, the IT team is going to be really hard pressed to service that in a robust way.”

Many IT departments are embracing containerization specifically to address this problem. But data scientists are often ill-equipped to get up to speed with things like Docker and Kubernetes when they’re already being asked to be experts in statistics, machine learning, and the business problem at hand.

“Data scientists themselves are not necessarily equipped to manage the container stuff and do all the bits and pieces,” Wang says. “That’s why we decided to build this bridge piece.”

Anaconda Enterprise is integrated with Active Directory and LDAP servers, ensuring that only authorized users have access to data science tools. The software works with both on-premise and cloud infrastructures, but most paying customers use it on-premise, Wang says.

The flexibility to target cloud and on-prem is important, he says. “This is something that’s of critical importance because data science spans such a huge swath of technology,” he says. “Some people need a GPU. Other people need a big memory, and other people need a cluster, or a specific version of Spark.”

In other news, the Austin, Texas company changed its name from Continuum Analytics to Anaconda on Monday, taking the name of its main product, which includes more than 1,000 Python and R tools and has more than 4.5 million users.
