Bridging the Trust Gap in Data Analytics

(eelnosiva/Shutterstock)

Do you trust your analytics? If you’re like most executives, you pledge allegiance to the power of data and analytics to produce insights and guide intelligent decision-making. However, there’s a big gap between what analytic decision-makers publicly declare about their analytics, and what they privately believe, a new KPMG survey suggests.

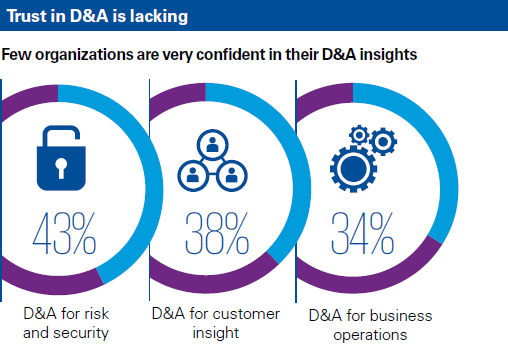

Despite the frequent declarations from executives about developing data-driven processes with analytics, only one-third of decision-makers actually trust their analytics, according to a survey conducted by Forrester Consulting on behalf of KPMG for its latest report, titled “Building Trust in Analytics.”

However, more than three-quarters of survey respondents reported that their customers believed they were getting analytics right. This gap between the outward belief in the power of analytics and the lack of confidence in analytics held by executives represents a potential problem, especially as investments in big data analytics continue to ramp up.

KPMG’s study found businesses are using data and analytics (D&A) for a variety of purposes these days, including finding new customers (used by 66% of survey respondents), monitoring brand sentiment (67%), understanding existing customers (69%), spotting fraud (70%), complying with regulations (70%), targeting marketing (65%), and developing new products and services (67%). To be sure, the breadth of use cases for big data analytics increases by the day.

While data analytics continue to become more widespread in business and society, they’re not having the promised impact, at least in the eyes of executives. A full 60% of the 2,165 data and analytics decision-makers surveyed by Forrester say they’re “not very confident” in their analytics insights. Only 51% of respondents say the C-suite fully supports their data analytics strategy.

Haunted by Old Bugaboos

So what’s holding up analytics, or at least our trust in their accuracy? The culprits are not surprising.

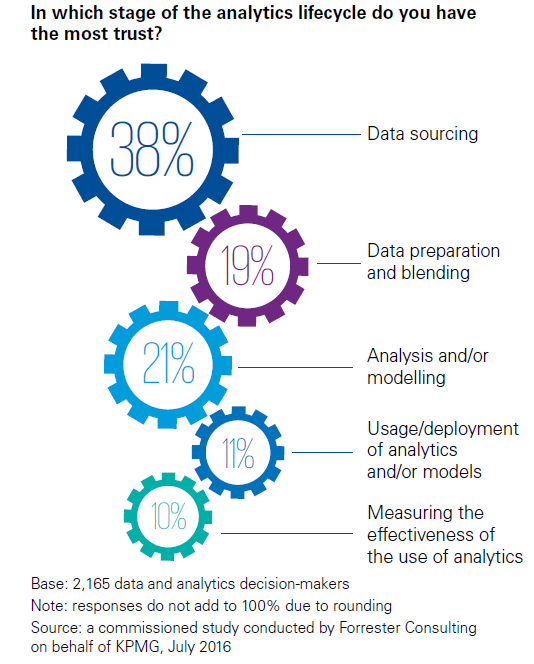

According to the report, the trust gap in analytics stems from a fairly widespread inability to get the details right. Most data and analytics decision-makers simply do not believe their organizations have a firm handle on the processes and tools needed to engage with analytics at a high level.

The survey found that:

- Only 10% of organizations believe they excel in quality of data, tools, and methodologies;

- Only 13% believe they excel in privacy and ethical use of data analytics;

- Only 16% believe they perform well in ensuring the models they produce are accurate;

- Only 19% believe they perform well in data preparation and blending.

To be sure, some of the trust gap stems from the relative newness of the field of big data analytics. However, another factor to consider is that modern-day practitioners of data analytics are being tripped up by the same problems that plagued data warehousing and OLAP practitioners 15 years ago. Without investing in things like data governance, security, and model efficacy, one won’t enjoy lasting success in data analytics at a high level.

“I think [the trust gap] has existed for a while, but it hasn’t had the urgency that it has today because people are starting to really realize how pervasive analytics are,” says Bill Nowacki, Managing Director of Data & Analytics for KPMG Lighthouse in the United States. “I think that for a long time business executives were allowed to chalk up their success to having a great gut. But with the pace of business today, we know we can’t rely on the gut anymore.”

(Imagentle/Shutterstock)

The systems powering today’s analytics are largely “black boxes” that are difficult to understand. That’s not helping the trust gap, Nowacki says. “The remedy of course isn’t to give them a black box,” he tells Datanami. “Give them a glass box.”

Fixing Trust Gaps

KPMG espouses a four-point plan for combating the so-called “trust gap.” To gain (or regain) trust in analytics, organizations should focus on quality, effectiveness, integrity, and resilience:

- Quality: Are the fundamental building blocks of D&A (data and analytics) good enough? How well do organizations understand the role of quality in developing and managing tools, data and analytics?

- Effectiveness: Do the analytics work as intended? Can organizations determine the accuracy and utility of the outputs?

- Integrity: Is the D&A being used in an acceptable way? How well-aligned is the organization with regulations and ethical principles?

- Resilience: Are long-term operations optimized? How good is the organization at ensuring good governance and security throughout the analytics lifecycle?

Real World Impact

Getting analytics right is imperative, not just so executives can sleep well at night knowing their KPIs are accurate, but because analytics are impacting our daily lives more and more each day. The trust gap gets wider every time a driver dismisses a routing app because it’s telling them to turn left onto a road that’s closed, or a recommendation engine encourages a shopper to repurchase a major kitchen appliance they already bought online.

“We need to find ways to establish societal trust in how organizations operate in the emerging data-driven society,” says Sander Klous, partner, KPMG in the Netherlands. “The simple reality is that we are moving quickly into a world in which our behavior and decisions are heavily impacted by systems fueled by data.”

Simply put, when the algorithms are wrong, it impacts people. If data and analytics are to deliver the life-changing impacts that they promise, the analytics folks need to get the details right. Currently, many of them are not.

Nowacki says the twin challenges of data governance and data curation have to be addressed to make the analytics believable to executives. If an analytic is based on the existence of a certain piece of data, one must ensure that it’s maintained and there are secondary and tertiary sources of the data if the original source is lost.
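
The idea of keeping secondary and tertiary sources behind a critical piece of data can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not anything described by KPMG or Nowacki: the function `load_with_fallback`, the three stand-in source functions, and the sample record are all hypothetical.

```python
from typing import Callable, Iterable, Optional, Tuple

# A source is a (name, fetch function) pair. Hypothetical structure for illustration.
Source = Tuple[str, Callable[[], Optional[dict]]]


def load_with_fallback(sources: Iterable[Source]) -> Tuple[str, dict]:
    """Return (source_name, record) from the first source that yields data."""
    errors = []
    for name, fetch in sources:
        try:
            record = fetch()
        except Exception as exc:  # the source is lost or unreachable
            errors.append(f"{name}: {exc}")
            continue
        if record is not None:
            return name, record
        errors.append(f"{name}: no data")
    raise LookupError("all sources failed: " + "; ".join(errors))


# Stand-in sources (assumed for the example): the primary feed is down,
# the secondary has no matching record, the tertiary still holds the data.
def primary():
    raise ConnectionError("primary feed unavailable")


def secondary():
    return None


def tertiary():
    return {"customer_id": 42, "region": "EMEA"}


used, record = load_with_fallback(
    [("primary", primary), ("secondary", secondary), ("tertiary", tertiary)]
)
print(f"served from {used}: {record}")
```

Returning the name of the source that answered, rather than only the record, leaves a trail that a governance process could audit later.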

Continuous validation of the accuracy of models is another step that organizations must take if they’re going to have long-term success with analytics. However, there’s a general lack of tools to automate experimentation with analytics.

“It comes back to how do you make it transparent so it can earn confidence and trust,” Nowacki says. “The answer is increasingly, can I validate this data set, as well as can I continuously run champion-challenger or experimental design, to help demonstrate that the black box is actually making good decisions.”
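
For readers unfamiliar with the champion-challenger approach Nowacki mentions, here is a rough Python sketch. It assumes a simplified setup that is not from the report: each case is randomly routed to either the incumbent model (the champion) or a candidate (the challenger), and the two are compared on plain accuracy. The function name, the 20% routing share, and the toy threshold models are all hypothetical, and real deployments typically compare business outcomes rather than accuracy alone.

```python
import random


def champion_challenger(cases, champion, challenger, challenger_share=0.2, seed=0):
    """Route each case to one model at random and report accuracy per arm."""
    rng = random.Random(seed)  # seeded so the routing is repeatable
    tally = {"champion": [0, 0], "challenger": [0, 0]}  # [correct, total]
    for features, actual in cases:
        arm = "challenger" if rng.random() < challenger_share else "champion"
        model = challenger if arm == "challenger" else champion
        tally[arm][1] += 1
        if model(features) == actual:
            tally[arm][0] += 1
    # An arm that received no cases reports None instead of an accuracy.
    return {
        arm: (correct / total if total else None)
        for arm, (correct, total) in tally.items()
    }


# Toy data (assumed): the true outcome flips at 50, the champion's
# threshold has drifted to 60, and the challenger uses the right cutoff.
cases = [(x, x >= 50) for x in range(100)]
champion = lambda x: x >= 60
challenger = lambda x: x >= 50

print(champion_challenger(cases, champion, challenger))
```

Run continuously, a comparison like this gives decision-makers ongoing evidence of whether the model in production is still the better one.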
