Peering Into Computing’s Exascale Future with the IEEE

Computer scientists worried about the end of computing as we know it have spent several years searching for ways to return to the historical exponential scaling of computer performance. What is needed, say the proponents of an initiative called “Rebooting Computing,” is nothing less than a radical rethinking of how computers are designed in order to achieve the trifecta of greater performance, lower power consumption and “baking security in from the start.”

Thomas Conte, the Georgia Tech engineering professor co-chairing the Rebooting Computing crusade, said he tells his students it's either the best or worst time to be studying computer science. The outcome of the initiative, sponsored by the Institute of Electrical and Electronics Engineers (IEEE) Computer Society, will go a long way toward determining whether Conte’s students enter a dead-end field or a new Golden Age of Computing.

Moreover, the IEEE sees a critical application for whatever emerges from its overhaul of computer architectures: the emerging, if ill-defined, Internet of Things. Hence, the effort to reboot how computers are designed could ultimately be driven by the technical challenges presented by the IoT.

That outcome could bode well for one viable approach: cloud computing. The abstraction of computing elements in the cloud may offer a way forward, said Conte. “The cloud and IoT change things,” he said in an interview. Together, “they offer a way of enabling the rebooting of computing.”

The cloud and the construct known as the Internet of Things represent more than the incremental approaches of the last decade, which were designed mainly to squeeze the last drops of performance out of computer architectures erected on the foundation of Moore’s Law. But the end is nigh for that driving force of computing over the last several decades: “It’s a basic property of physics that you cannot make transistors too small,” Conte said. “Eventually the size of the silicon atoms limits you.”

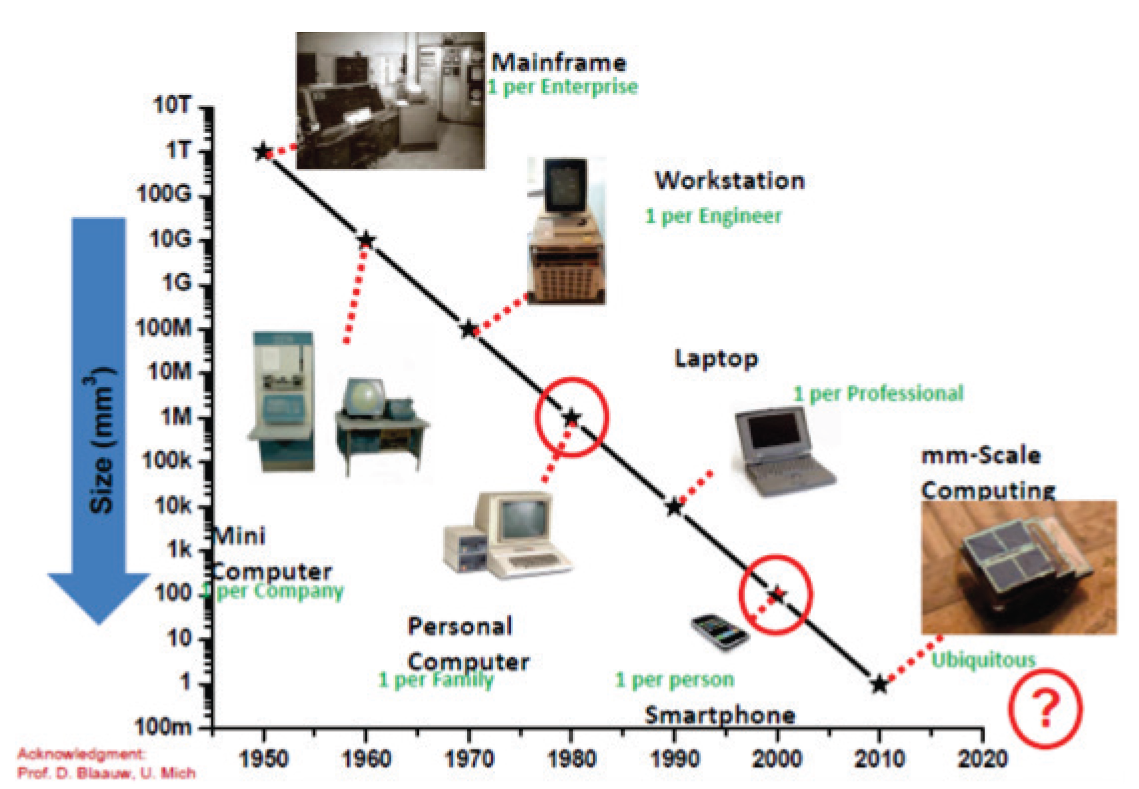

Courtesy: IEEE Computer Society’s “How Will Rebooting Computing Help IoT?”

For computing, the beginning of the end came in the mid-1990s, when computer architectures themselves became the limiting factor. The first trick was parallel processing, augmented by superscalar microprocessors. For a time, Conte said, this architecture delivered a doubling of computer performance for roughly the same cost every 18 months.
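As a rough illustration, assuming a clean doubling every 18 months (1.5 years), relative performance P after t years grows as:

$$
\frac{P(t)}{P(0)} = 2^{t/1.5}, \qquad \frac{P(15)}{P(0)} = 2^{10} = 1024
$$

so fifteen years at that cadence would yield roughly a thousandfold improvement at about the same cost.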

Eventually, the “speculative execution” of more and more instructions tied higher performance to higher power. This was unsustainable, and the first signs of the end of Moore’s Law surfaced.

The response to this architectural conundrum was multiple computing cores on the same chip, or multicore. Conte argued that this stopgap fundamentally shifted the computing burden from hardware to the programmer. “Software is still brittle,” Conte asserted, and multicore turned out to be little more than a Band-Aid.

This is why the proponents of “rebooting computing” believe applications like IoT could end up driving future computer architectures, breaking the reliance on the inherently sequential processes used by today’s computer programmers.

Then there is a growing list of emerging approaches that Conte acknowledges are considered “lunatic fringe” by the computer industry. But the rebooting computing camp is attempting to steer the conversation away from incremental steps and toward new ways of building computers for the next set of applications. The open question is whether applications like IoT will end up driving computer architectures.

“Can we build computers in a fundamentally different way, to operate on very different algorithms and programming languages than we have today?” Conte asks.

There is no shortage of “lunatic fringe” computer architectures. What is lacking, Conte and others assert, is the willingness to risk a fundamental overhaul in order to transform computing. It will take a public-private partnership, the IEEE group maintains. (The impetus for “rebooting computing” was a National Science Foundation initiative several years ago to revamp computer education.)

Along with the “Three Pillars” of energy efficiency, new user interfaces and “dynamic security,” the list of possible computing approaches ranges from “neuromorphic” and “approximate” computing to adiabatic, or “reversible,” computing and variations on parallelism.

Quantum computing, which has attracted much investment, shows promise, Conte agreed. “It’s going to have its own niche,” he added, “its own node in the cloud. But it’s not low power.”

A more promising approach, one Conte thinks could fundamentally transform computing, is HP Labs’ “The Machine.”

The HP architecture “fuses” memory and storage, simplifies complex data hierarchies and, in a nod to the era of big data and the IoT, moves processing closer to the data. Unlike today’s computer architectures, The Machine also “bakes” security into hardware and software stacks and promises to deliver the scaling that Moore’s Law no longer can.

“What we're trying to do,” Conte adds, “is find a new way of scaling across the hierarchy.”

Another possible source of computer innovation, one that would help cement the public-private partnership sought by the IEEE, is ongoing computer science research at the Defense Advanced Research Projects Agency. DARPA’s Microsystems Technology Office (MTO) spends a lot of money on device research as it searches for a replacement for silicon. But MTO Director William Chappell stressed that the office is also looking beyond circuit design to find new ways of representing data. Hence, the agency is placing greater emphasis on areas like algorithm development.

For the military, that translates into software-defined capabilities like being able to share scarce spectrum. In the commercial sector, those same techniques could be used to process data from the billions of connected devices and sensors that will make up the IoT.

Chappell comes at the computing problem in a way similar to the IEEE initiative: “Year after year, you start seeing the ‘free ride’ [Moore’s Law] going away.” In other words, the number of transistors keeps rising, but the ability to leverage that processing power is flattening.

The defense agency, like IEEE, sees the same need to reboot computing: “Our computing systems must have the capabilities to handle this ever increasing demand in new ways, exploring new architectures, algorithms/signal processing and hardware,” it said.

The key to all of these efforts, of course, will be moving promising architectures like The Machine from the lab to the supercomputer center and, eventually, to hyper-scale datacenters.

These development efforts are squarely aimed at the next big goal: exascale computing, that is, performance of 10¹⁸ calculations per second. This level of performance, along with the three pillars of future computing, could in turn provide a path to making the Internet of Things a real computing platform rather than merely a marketing construct.

Hence, as Conte notes, the computer architecture of the future would be driven by applications, displacing the old approach in which architectural choices were made and enshrined, and the resulting machines ran programs that calculated numbers to a given accuracy or executed one instruction after another.

Again, IEEE argues, that’s simply not sustainable in a connected world.

Other applications like weather simulation also illustrate why this sequential approach to computing no longer works. Forecasters can’t predict storm tracks with the accuracy needed to avoid, for example, economic disruptions. The problem is that computers and the models they use are not keeping up with the waterfall of satellite data being produced each day. Just this week, for example, the National Oceanic and Atmospheric Administration announced a cloud-based big data effort in an attempt to get its arms around the estimated 20 terabytes of observational data produced each day by U.S. weather satellites.
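For a sense of scale, and assuming decimal units (1 TB = 10¹² bytes), 20 terabytes a day works out to a sustained average of roughly 231 megabytes per second:

$$
\frac{20 \times 10^{12}\ \text{bytes}}{86{,}400\ \text{s}} \approx 2.31 \times 10^{8}\ \text{bytes/s} \approx 231\ \text{MB/s}
$$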

While chipmakers like Intel focus on selling more chips to add intelligence to IoT devices and networking companies like Cisco Systems build corporate strategies around the “Internet of Everything,” it does appear that a critical mass is building to fundamentally rethink how computers are designed and how they will be used in the future.

To that end, Conte said the Rebooting Computing movement is scheduled to reconvene at the end of this year in Washington to launch what the president of the IEEE’s Computer Society calls the start of “an earthquake in the computing industry.”
