Hadoop Speeds Seismic Event Processing

While the perception exists that data science is the purview of Internet companies working to predict and optimize clickthrough rates and make recommendations in the fashion of e-retailer Amazon, there are many other scientific fields (astronomy, geophysics, genomics, etc.) using large data sets to advance discovery. The challenges faced by these two groups are very similar. In fact, many of big data’s roots can be traced to the high-performance computing community, which has long struggled with data-intensive workloads.

Seismic data processing has a long tradition of pushing the boundaries of high-performance computing. The discipline has applications in earthquake and aftershock detection and it’s also heavily used by exploration and production companies in order to identify oil and natural gas deposits. Seismic techniques even led to the discovery of the Chicxulub Crater implicated in wiping out the dinosaurs.

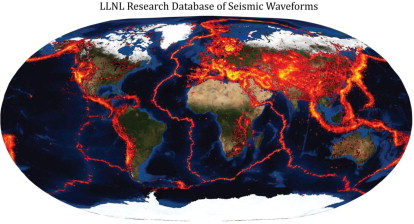

Recently a team of scientists from Lawrence Livermore National Laboratory and Google explored whether the Hadoop framework would support the large-scale analysis of massive amounts of seismic event data. The authors lay out their methodology and conclusions in a recently published paper, “Large-Scale Seismic Signal Analysis with Hadoop.”

Going back to the 1960s, scientists have used waveform correlation as the basis for highly sensitive detectors in studying earthquakes. Correlation detectors have been used almost exclusively over small regions of interest to track aftershock sequences, but interest has grown in using correlation to process events over broader areas. It’s considered a key tool for detecting earthquakes, but developing the technique requires further study.
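To make the idea of a correlation detector concrete, here is a minimal sketch in plain Python. It is not the authors' code: the function names, the toy signals, and the 0.8 threshold are all assumptions chosen for illustration. A known event waveform (the template) is slid along a continuous trace, and any lag where the normalized correlation coefficient exceeds the threshold is flagged as a candidate detection.

```python
import math

def normalized_xcorr(template, trace):
    """Return the correlation coefficient between the template and each
    equally long window of the trace, one value per lag."""
    n = len(template)
    t_mean = sum(template) / n
    t_dev = [x - t_mean for x in template]
    t_norm = math.sqrt(sum(d * d for d in t_dev))
    scores = []
    for lag in range(len(trace) - n + 1):
        window = trace[lag:lag + n]
        w_mean = sum(window) / n
        w_dev = [x - w_mean for x in window]
        w_norm = math.sqrt(sum(d * d for d in w_dev))
        denom = t_norm * w_norm
        # A flat window has zero variance; treat it as uncorrelated.
        cc = sum(a * b for a, b in zip(t_dev, w_dev)) / denom if denom else 0.0
        scores.append(cc)
    return scores

def detect(template, trace, threshold=0.8):
    """Return the lags whose correlation meets the (assumed) threshold."""
    scores = normalized_xcorr(template, trace)
    return [lag for lag, cc in enumerate(scores) if cc >= threshold]

# Toy data: the template appears in the trace at lag 3 with twice the amplitude.
template = [0, 1, 0, -1, 0]
trace = [0, 0, 0, 0, 2, 0, -2, 0, 0, 0]
print(detect(template, trace))  # prints [3]
```

Because the coefficient is normalized, the detector responds to waveform shape rather than amplitude, which is why the doubled copy of the template is still found.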

“To make better use of correlation relationships in seismic data analysis we need to understand what fraction of observed seismicity displays correlation relationships as functions of observed properties (e.g. source-receiver distance, window-length, bandwidth), and correlated source differences (e.g. location, depth, magnitude),” the authors explain. “The longer-term goal is to understand the physics underlying observed correlation behavior in terms of source similarity and path effects.”

Before the work can proceed, a major hurdle must first be cleared. There are tens of thousands of seismic events each year observed at tens of thousands of stations, generating an enormous amount of data. “Without an extremely efficient way of performing these computations, it is simply too difficult and time consuming to perform the many correlation runs that may be required to achieve a deep understanding of the phenomena under study,” the team writes.

To succeed, they must improve the speed at which massive amounts of seismic event data can be processed, and for this, they turn to Hadoop, an open source framework for large-scale processing of data sets. “When run over a distributed file system such as HDFS [Hadoop Distributed File System] with nodes connected to dedicated storage, this framework naturally leverages data locality, whereby tasks running on a particular node process data resident to that node. By moving computation to the data, Hadoop allows exceedingly fast IO and compute performance for highly parallelized algorithms,” the authors contend.

The team started out by cross correlating a global dataset of more than 300 million seismograms. This part of the experiment was done on a conventional distributed cluster, and required 42 days. They then re-architected the algorithms to run as a series of MapReduce jobs on a Hadoop cluster.
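The article does not describe how the team structured its MapReduce jobs, so the following is only a rough, single-process sketch of how a pairwise correlation task could be expressed in map and reduce steps. Keying records by station, the record layout, and every function name here are assumptions made for illustration; a real Hadoop job would run these phases across many nodes.

```python
import math
from collections import defaultdict
from itertools import combinations

def map_phase(records):
    """Map: emit (station, (event_id, waveform)) so that all waveforms
    recorded at one station end up in the same group."""
    for station, event_id, waveform in records:
        yield station, (event_id, waveform)

def shuffle(pairs):
    """Group mapped values by key, standing in for Hadoop's shuffle step."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups

def zero_lag_cc(a, b):
    """Normalized zero-lag correlation of two equally long waveforms."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def reduce_phase(station, values):
    """Reduce: correlate every pair of events observed at one station."""
    ordered = sorted(values, key=lambda v: v[0])
    for (id_a, wave_a), (id_b, wave_b) in combinations(ordered, 2):
        yield (station, id_a, id_b), zero_lag_cc(wave_a, wave_b)

# Hypothetical records: (station, event id, waveform samples).
records = [
    ("STA1", "ev1", [1, 2, 1]),
    ("STA1", "ev2", [2, 4, 2]),
    ("STA2", "ev1", [1, 0, -1]),
    ("STA1", "ev3", [1, -2, 1]),
]

results = {}
for station, values in shuffle(map_phase(records)).items():
    results.update(reduce_phase(station, values))

for key, cc in sorted(results.items()):
    print(key, round(cc, 3))
```

Each station's group can be reduced independently of the others, which is the property that lets the work spread across a cluster.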

After several implementations and a lot of time and effort devoted to increasing the parallelism of their algorithms, runtime was winnowed significantly. “In aggregate, the refined Hadoop implementation yielded a factor of 19 improvement over the original waveform correlator, going from 48 hours on the 1 TB test dataset to under 3 hours in total,” the team writes.

Based on these results, the team expects to be able to re-correlate its entire database, containing about 50 terabytes of data and more than 300 million waveforms, in about two days instead of the original 42 days. They expect this will have a major impact on their ability to process massive seismic data sets, and they intend to describe that research in future papers.
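As a quick sanity check on these figures, the reported factor of 19 can be applied to both baselines. This small sketch assumes the speedup measured on the 1 TB test set carries over unchanged to the full archive, which is the article's implied extrapolation rather than a measured result; the function name is illustrative.

```python
def scaled_runtime(original, speedup):
    """Runtime after applying a constant speedup factor."""
    return original / speedup

REPORTED_SPEEDUP = 19.0  # factor quoted by the authors

test_hours = scaled_runtime(48.0, REPORTED_SPEEDUP)  # 1 TB test dataset
full_days = scaled_runtime(42.0, REPORTED_SPEEDUP)   # full ~50 TB archive

print(f"1 TB test set: {test_hours:.1f} hours")  # prints 2.5 hours
print(f"Full archive:  {full_days:.1f} days")    # prints 2.2 days
```

Both values line up with the quoted "under 3 hours" and "about two days."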

In discussing their results, the team suggests that “the Hadoop model of distributing the data with the computations presents a paradigm shift for the data-intensive scientific computing community,” where it’s essential that algorithmic priorities shift to make the best use of underlying architectures.

“Instead of asking ourselves how we can decrease the IO burden, as we did in our original implementation of the waveform correlator, we now find ourselves asking how we can increase the parallelism of our algorithms. Whereas before we had lots of CPU which could not be fully utilized, now we have blazingly fast IO and imbalanced CPU load. The process of finding new ways to break apart one’s algorithms into finer-grained sub-problems is at the heart of the Hadoop philosophy: scale out, not up,” state the researchers.

While the results are very promising, the time cost of getting data into a Hadoop cluster was not insignificant. In this case, NAS speed and network throughput limitations put a damper on load times. They estimate that it may take one to two months to transfer their complete data holdings. Researchers who have data stored remotely may encounter even higher levels of latency. A possible solution involves using the Hadoop cluster as the main storehouse for waveforms as well as associated metadata.
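For a sense of scale, the transfer estimate can be turned into an implied sustained throughput. The sketch below assumes the "complete data holdings" are the roughly 50 terabytes mentioned earlier, uses decimal terabytes, and treats one and two months as 30 and 60 days; none of these conversions come from the paper itself.

```python
SECONDS_PER_DAY = 86_400

def sustained_rate_mb_per_s(total_bytes, days):
    """Average throughput in MB/s needed to move total_bytes in the given days."""
    return total_bytes / (days * SECONDS_PER_DAY) / 1e6

ARCHIVE_BYTES = 50e12  # assumed: about 50 TB, decimal units

one_month = sustained_rate_mb_per_s(ARCHIVE_BYTES, 30)
two_months = sustained_rate_mb_per_s(ARCHIVE_BYTES, 60)

print(f"30 days: {one_month:.1f} MB/s")   # prints 19.3 MB/s
print(f"60 days: {two_months:.1f} MB/s")  # prints 9.6 MB/s
```

Under these assumptions the load would average somewhere between roughly 10 and 20 MB/s.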

The authors originally set out to explore and expose the correlation behavior of large collections of seismic data, and by necessity they kept refining their computational setup to make the problem more tractable. Because of these and other discoveries, correlation is on track to become a more useful tool in both geophysical research and operational seismic event cataloging systems. While not the focus of this paper, the authors suggest that building high-resolution models of the Earth’s crust and upper mantle is another data-intensive endeavor that could conceivably benefit from a Hadoop implementation of sufficient scale.

The Hadoop ecosystem is still maturing, with SQL-over-Hadoop, streaming analytics, and time series databases under development. This activity makes it difficult for researchers looking to settle on a given implementation, but the authors anticipate that some clear winners will emerge in the next couple of years with technology that will benefit the seismological community.
