In-Memory Tweaks Boost Proteomics Research

From genome sequencing to mass spectrometry for proteomics work, researchers have honed the craft of data collection for the life sciences. Now the real questions of large-scale analysis are foremost on the minds of many in this forward-thinking community.

The list of analytical solutions and platforms gets longer each year, but when we think about column-based in-memory databases, the varied needs of business versus scientific analytics tend to come to mind.

According to a team of German researchers who worked with the SAP Innovation Centers in Germany and Palo Alto, the business community isn’t the only user base that can tap sophisticated in-memory data mining tools. The researchers state that the analytical performance of these engines is a promising prospect for the data mining needs of the life sciences community.

More specifically, this in-memory experiment focused on bringing a business analytics platform into the proteomics fold. This molecular biology branch requires complex analysis across several terabytes of protein data to identify diseases and other traits via biomarkers.

The team behind the business-to-scientific analytics flip focused on HANA, SAP’s in-memory data management platform. After fine-tuning the platform to handle the vast wells of life sciences data, they concluded that an in-memory database approach meets “some inherent requirements of data mining in the life sciences.” For their own area, proteomics research, they claim that HANA and the implementation of a “flexible data analysis toolbox can be used by life sciences researchers to easily design and evaluate their analysis models.”

Life sciences data is not only massive in volume; it also calls for complex, extensive analysis that demands very high performance from the data management side of the fence. This is usually handled with heterogeneous systems, applications and scripts, but because the data is diverse and spread across different files, formats and systems, the challenges are significant. What these users require, then, are systems that support heterogeneity of “application logic and data sources.”

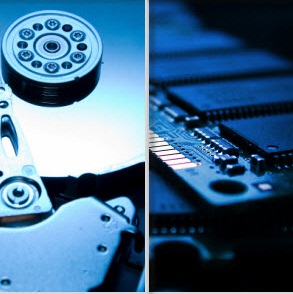

The advent of analytical platforms that allow for analysis of big, diverse, distributed data in near-real time creates new possibilities. The team claims that the SAP HANA in-memory database differs from standard disk-based solutions because it stores primary data directly in memory to boost performance and allow for speedy calculations. This, they say, “enables fast execution of complex ad-hoc queries even on large datasets and includes multiple data processing engines and languages for different application domains.”
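For readers who want a feel for the column-based, in-memory idea, here is a minimal Python sketch. It is not SAP HANA code and does not reflect the researchers' toolbox; the column names, the sample values and the `mean_intensity_by_protein` helper are all hypothetical. It only illustrates how an ad-hoc aggregate can scan a few in-memory columns without touching disk or unrelated fields.

```python
from collections import defaultdict

# Hypothetical toy data: each list is one column, held entirely in memory.
columns = {
    "protein": ["P1", "P2", "P1", "P3", "P2"],
    "intensity": [10.0, 4.0, 14.0, 7.0, 6.0],
    "score": [0.9, 0.4, 0.8, 0.95, 0.7],
}


def mean_intensity_by_protein(cols, min_score):
    """Ad-hoc aggregate that reads only the three columns it needs."""
    totals = defaultdict(float)
    counts = defaultdict(int)
    rows = zip(cols["protein"], cols["intensity"], cols["score"])
    for protein, intensity, score in rows:
        if score >= min_score:  # filter on one column
            totals[protein] += intensity  # aggregate another
            counts[protein] += 1
    return {p: totals[p] / counts[p] for p in totals}


result = mean_intensity_by_protein(columns, min_score=0.5)
print(result)
```

A production engine adds compression, parallel scans and a query language on top, but the layout principle is the same: a query touches only the columns it asks about.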

The researchers said that an enterprise platform like SAP’s HANA, which serves as the foundation for the company’s future enterprise applications, can be tailored to meet scientific needs. They say it already has the basis for a solid analytical platform built in, with complex data mining capabilities and machine learning functionality as well as the aforementioned ability to sling ad-hoc queries across massive datasets in relatively short timeframes.

The buzz around in-memory approaches to complex analysis for both science and business has picked up pace over the last year or two. In addition to SAP with HANA, a number of other companies are focusing on different areas of the market, including SAS, Kognitio, ParAccel, IBM, VoltDB and others.
