Lightning AI’s New Thunder Compiler Boosts AI Development Efficiency by 40%

NEW YORK, March 28, 2024 — Following the company’s presentation at the NVIDIA GTC AI conference, Lightning AI, the company behind PyTorch Lightning, which has over 100 million downloads, today announced the availability of Thunder, a new and powerful source-to-source compiler for PyTorch designed for training and serving the latest generative AI models across multiple GPUs at maximum efficiency. Thunder is the culmination of two years of research on the next generation of deep learning compilers, built with support from NVIDIA.

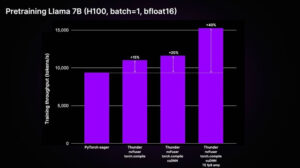

Large model training can cost billions of dollars today because of the number of GPUs and the length of time it takes to train these models. Lack of high-performance optimization and profiling tools puts this scale of training out of reach for developers who don’t have the resources of a large technology company. Even at its early stage, Thunder achieves up to a 40% speed-up for training large LLMs, compared to unoptimized code in real-world scenarios. These speed-ups save weeks of training and lower training costs proportionally.
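
As a rough illustration of the arithmetic behind that claim, the sketch below converts a speed-up into training time and cost saved. It assumes that "a 40% speed-up" means 40% higher throughput, which is one possible reading of the figure; the 70-day baseline and the function names are hypothetical and do not come from Lightning AI.

```python
def days_after_speedup(baseline_days: float, speedup: float) -> float:
    """Training time once throughput rises by `speedup` (0.40 means 40% faster).

    Assumption: the speed-up is a throughput gain, so time scales by 1 / (1 + speedup).
    """
    return baseline_days / (1.0 + speedup)


def fraction_saved(speedup: float) -> float:
    """Fraction of time (and of GPU cost, if billed per hour) that is saved."""
    return 1.0 - 1.0 / (1.0 + speedup)


if __name__ == "__main__":
    baseline_days = 70.0  # hypothetical run length, for illustration only
    speedup = 0.40        # the "up to 40%" figure, read as a throughput gain

    new_days = days_after_speedup(baseline_days, speedup)
    saved = fraction_saved(speedup)
    print(f"{baseline_days:.0f} days becomes about {new_days:.1f} days")
    print(f"about {saved:.1%} of time and per-hour GPU cost saved")
```

Under this reading, a 40% throughput gain shortens a run by a little under 30%, not 40%. If the figure instead means 40% less wall-clock time, the saving is 40% directly.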

In 2022, Lightning AI hired a group of expert PyTorch developers with the ambitious goal of creating a next-generation deep learning system for PyTorch that could take advantage of best-in-class executors and software: torch.compile; NVIDIA’s nvFuser, Apex, and CUDA Deep Neural Network Library (cuDNN); and OpenAI’s Triton. The goal of Thunder was to let developers use all of these executors at once, so that each could handle the mathematical operations it was best designed for. Lightning AI drew on support from NVIDIA to integrate NVIDIA’s best executors into Thunder.
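
The idea of letting each executor handle the operations it suits best can be sketched as a simple priority-ordered assignment. This is a toy model under stated assumptions, not Thunder's actual API or algorithm: the `Executor` class, the `assign_executors` function, and the executor names `fused` and `fallback` are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, List


@dataclass(frozen=True)
class Executor:
    """Hypothetical executor: a name plus the set of operations it can run."""
    name: str
    supported_ops: FrozenSet[str]

    def can_execute(self, op: str) -> bool:
        return op in self.supported_ops


def assign_executors(ops: List[str], executors: List[Executor]) -> Dict[str, str]:
    """Map each operation to the first executor, in priority order, that supports it."""
    plan: Dict[str, str] = {}
    for op in ops:
        for executor in executors:
            if executor.can_execute(op):
                plan[op] = executor.name
                break
        else:
            # No executor in the list claimed this operation.
            raise LookupError(f"no executor supports operation {op!r}")
    return plan


if __name__ == "__main__":
    # Specialized executor first, general-purpose fallback last (both made up).
    executors = [
        Executor("fused", frozenset({"matmul", "softmax"})),
        Executor("fallback", frozenset({"matmul", "softmax", "add", "relu"})),
    ]
    for op, name in assign_executors(["matmul", "add", "softmax", "relu"], executors).items():
        print(f"{op} -> {name}")
```

Listing specialized executors ahead of a general fallback means each operation goes to the most specialized backend that claims it, while every operation the fallback supports still runs.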

The Thunder team is led by Dr. Thomas Viehmann, a pioneer in the deep learning field best known for his early work on PyTorch, his key contributions to TorchScript, and for making PyTorch work on mobile devices for the first time.

Today, companies of all sizes are starting to adopt Thunder to accelerate AI workloads. Thunder aims to make the highly specialized optimizations discovered by prominent programmers at organizations such as OpenAI, Meta AI, and NVIDIA more accessible to the rest of the open-source community.

“What we are seeing is that customers aren’t using available GPUs to their full capacity, and are instead throwing more GPUs at the problem,” says Luca Antiga, Lightning AI’s CTO. “Thunder, combined with Lightning Studios and its profiling tools, allows customers to effectively utilize their GPUs as they scale their models to be larger and run faster. As the AI community embarks on training increasingly capable models spanning multiple modalities, getting the best GPU performance is of paramount importance. The combination of Thunder’s code optimization and Lightning Studios’ profiling and hardware management will allow them to fully leverage their compute without having to hire their own systems software and optimization experts.”

“NVIDIA accelerates every deep learning framework,” said Christian Sarofeen, Director of Deep Learning Frameworks at NVIDIA. “Thunder facilitates model development and research. Our collaboration with Lightning AI, to integrate NVIDIA technologies into Thunder, will help the AI community improve training efficiency on NVIDIA GPUs and lead to larger and more capable AI models.”

“I couldn’t be more thrilled for Lightning AI to lead the next wave of performance optimizations to make AI more open source and accessible. I’m especially excited to partner with Thomas, one of the giants in our field, to lead the development of Thunder,” says Lightning AI CEO and founder Will Falcon. “Thomas literally wrote the book on PyTorch. At Lightning AI, he will lead the upcoming performance breakthroughs we will make available to the PyTorch and Lightning AI community.”

Thunder will be made available open source under an Apache 2.0 license. Lightning Studios will offer first-class support for Thunder and native profiling tools that enable researchers and developers to easily pinpoint GPU memory and performance bottlenecks.

“Lightning Studios is quickly becoming the standard for not only new developers to build AI apps and train models but also for deep learning experts to understand their model performance in a way that is not possible on any other ML platform,” says Falcon.

Pricing

Thunder is open source and freely available.

To use Lightning Studios, users can get started free without a credit card. They’ll get 15 free credits per month and can buy more credits as they go. For enterprise users, Lightning AI can be deployed on their cloud accounts and private infrastructure so they can train and deploy models on their private data securely.

There are four pricing tiers in Lightning Studios: a free level for individual developers; a pro level for engineers, researchers, and scientists; a teams level for startups and teams; and finally, for larger organizations in need of enterprise-grade AI, the enterprise level.

To sign up for Studios, go to https://lightning.ai.

PyTorch Lightning, developed by Falcon, has become the standard for training large-scale AI models such as Stable Diffusion, with over 10,000 companies using it to build AI at scale. Using this knowledge, the team at Lightning AI rethought from the ground up what AI development on the cloud should feel like.

About Lightning AI

Lightning AI is the company behind PyTorch Lightning, the deep learning framework of choice for developers and companies seeking to build and deploy AI products. Focusing on simplicity, modularity, and extensibility, Studio, its flagship product, streamlines AI development and boosts developer productivity. Its aim is to enable individual developers and enterprise users to build deployment-ready AI products. For more information, visit: https://lightning.ai.

Source: Lightning AI