People to Watch 2017

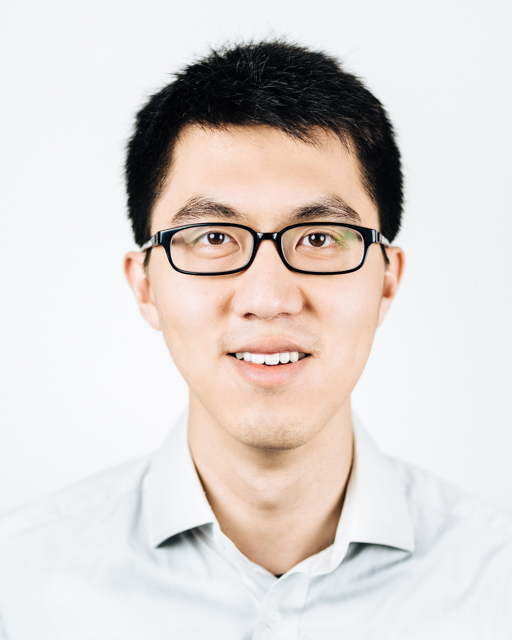

Reynold Xin

Co-founder and Chief Architect

Databricks

Apache Spark has become the go-to processing engine for big data workloads, and Reynold Xin, who has the most commits to the Spark project of any Spark contributor, is a big reason why. As an expert in distributed systems design, Reynold is a key part of Databricks’ drive to improve Spark’s performance and to widen its usage with GPUs, graph processing, and more.

Datanami: Hi Reynold.

Congratulations on being named a Datanami Person to Watch in 2017! To kick things off, we wanted to ask what the timeframe is for achieving the “holy grail” of big data development: automated development of continuous applications?

Reynold Xin: Thanks for the interview.

We define a continuous application as an end-to-end application that reacts to data in real time. Continuous applications typically need to combine many modes of operation, e.g. updating data that will be served in real time, ETL, creating a real-time version of an existing batch job, or online machine learning.

The primary challenge with building continuous applications in the past was that it required stitching together many disparate tools, and data integrity (exactly-once, transactions) was often sacrificed amid this complexity. In short, they were extremely difficult to build, and we couldn’t even trust the results coming out of them.

To address these challenges, we started the Structured Streaming effort in Apache Spark last year. Structured Streaming creates one unified API for both batch and streaming, and data transformations defined in batch can work directly against real-time streaming data, as the engine will automatically incrementalize the logic with proper exactly-once and transactional semantics to guarantee data integrity.
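To make the idea of incrementalizing a batch transformation concrete, here is a minimal toy sketch in plain Python. It is not Spark's API or implementation: the names `count_by_key` and `IncrementalCount`, the keys, and the sample events are all hypothetical, chosen only for illustration. It shows a per-key sum defined once as a batch function, then applied to micro-batches with running state, and it skips a replayed micro-batch so each record is counted exactly once.

```python
from __future__ import annotations

from collections import Counter
from typing import Iterable


def count_by_key(records: Iterable[tuple[str, int]]) -> Counter:
    """Batch definition of the transformation: sum values per key."""
    totals: Counter = Counter()
    for key, value in records:
        totals[key] += value
    return totals


class IncrementalCount:
    """Hypothetical incremental version of count_by_key.

    Keeps running totals as state and remembers which micro-batch ids
    were already applied, so a replayed batch is not counted twice.
    """

    def __init__(self) -> None:
        self.totals: Counter = Counter()
        self.applied_batch_ids: set[int] = set()

    def process(self, batch_id: int, records: Iterable[tuple[str, int]]) -> Counter:
        if batch_id in self.applied_batch_ids:
            return self.totals  # replayed batch (e.g. after a retry): ignore it
        # Reuse the batch logic on the new records and merge into the state.
        self.totals.update(count_by_key(records))
        self.applied_batch_ids.add(batch_id)
        return self.totals


# Made-up sample data: (key, value) events.
events = [("a", 3), ("b", 1), ("a", 2), ("b", 4), ("a", 1)]

# The same events split into micro-batches; batch 1 is delivered twice.
micro_batches = [
    (0, events[:2]),
    (1, events[2:4]),
    (1, events[2:4]),
    (2, events[4:]),
]

stream = IncrementalCount()
for batch_id, records in micro_batches:
    stream.process(batch_id, records)

batch_result = count_by_key(events)
streaming_result = stream.totals
print(batch_result == streaming_result)
```

In this sketch the streaming totals match the batch result even though one micro-batch arrives twice. A real engine has to do the equivalent for far more general transformations, with state and progress stored durably.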

While still early, Structured Streaming already supports the basic data transformations often used in ETL, such as roll-up aggregates for cubes and joins for data enrichment. We have also started deploying it in the wild. At Databricks we moved many of our batch-based production ETL pipelines to streaming with little effort. Viacom built a real-time analytics platform for shows such as MTV and Comedy Central using Structured Streaming.

It will take more time to support incrementalization of all data transformations, but we are making tremendous progress here.

Datanami: What trends do you see as being most important for big data as we look to 2017 and beyond?

In the context of big data, the industry is rapidly shifting in the midst of not one, not two, but three major trends.

The first is moving big data to the cloud, for better agility, for flexibility, and for lower total cost of ownership. From a technical perspective, we will see software architectures change to decouple storage from compute and to become more elastic. Products that were designed for on-premises architectures, in which the set of compute resources was relatively stable, will require drastic changes in order to work efficiently and effectively in the cloud.

The second is the AI trend. With the cloud providing abundant compute resources and big data available for training better models, AI, through machine and deep learning, has come to the fore and found its voice, if you will. It’s being democratized, as distributed neural network frameworks allow developers to analyze myriad data types (images, voice, text, video, social) in their applications to do image and voice recognition. AI offerings will be ubiquitous in many applications, including advertising; social; medical; financial; cyber threat detection and prediction; and automation driving autonomous transportation. You name it.

The last one is the increased accessibility to big data for users higher up the stack. Traditionally, only Silicon Valley-style tech powerhouses were capable of extracting value out of big data. Over time, with platforms such as Databricks and vertical applications, business-oriented users can also gain insights and make decisions based on big data.

Datanami: What are Databricks’ top goals for the coming year with regard to big data?

Obviously our goals align with the broader trends. One particular thing I want to highlight, which ties all three trends together, is driving value out of big data projects.

In the past few years, many organizations have invested in Hadoop-based big data infrastructure, often on-premises, and are now able to store a large amount of data. But having data sitting in storage doesn’t necessarily help, and many are finding that these big data projects fail to provide value. We see this coming up across most of our customers, caused by a combination of technological and organizational problems.

We want to help organizations get more out of their data. We think the future of analytics will be virtualized: elastic resources on demand, an analytics platform that is able to connect to heterogeneous sources, and AI as a driving workload. And making things easier underpins all of these, as data is only as useful as people’s ability to leverage it.

Datanami: Outside of the professional sphere, are there any fun facts about you that your colleagues may be surprised to learn?

I’m a foodie. I like eating delicious food all over the world, from street food joints in Shenzhen to tapas at Tickets to The French Laundry. I eat a lot, and I’m lucky that I have a fast metabolism and don’t gain much weight from eating too much.

More about Reynold Xin:

Reynold Xin is a co-founder and Chief Architect at Databricks. At Databricks, he led the development of Apache Spark. He served as the release manager for the project’s 2.0 release, and is behind many of the latest innovations in Spark, including GraphX, Project Tungsten, and DataFrames, which make Spark more powerful, more robust, and easier to use. Prior to Databricks, he was pursuing PhD research at the UC Berkeley AMPLab, where he worked on large-scale data processing.