ChatGPT Growth Spurs GenAI-Data Lockdowns


The rise of generative AI and ChatGPT has the potential to increase global economic output by trillions of dollars per year, according to McKinsey. But it will also put about 2.4 million people out of work by 2030, according to a Forrester report. The uncertainty over GenAI, including privacy and security concerns, is prompting some companies to block access to GenAI applications at work.

Banks like JPMorgan Chase and Deutsche Bank, tech firms like Samsung and Apple, and services firms like Accenture have already restricted employee use of ChatGPT, according to an article in HR Brew, a publication for human resources professionals.

Meanwhile, large publishers are not playing along either. The New York Times, Reuters, CNN, Bloomberg, and the Washington Post are reportedly blocking OpenAI’s Web crawler, GPTBot, which harvests data, according to a CNN story.
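The CNN story as summarized here does not say how each publisher blocks the crawler. One common mechanism, which OpenAI documents for GPTBot, is a `robots.txt` rule that disallows the `GPTBot` user agent. The sketch below is illustrative only: the `robots.txt` contents, the `example.com` URL, and the `is_allowed` helper are assumptions, not taken from any publisher's site. It uses Python's standard `urllib.robotparser` module to check what such a rule would permit.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: block OpenAI's crawler, allow everyone else.
# (Illustrative contents; not copied from any real publisher.)
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if robots_txt permits user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Hypothetical article URL used only for the check.
URL = "https://example.com/news/story.html"

gptbot_allowed = is_allowed(ROBOTS_TXT, "GPTBot", URL)
other_allowed = is_allowed(ROBOTS_TXT, "SomeOtherBot", URL)

print("GPTBot allowed:", gptbot_allowed)
print("Other crawler allowed:", other_allowed)
```

A `robots.txt` rule is a request that well-behaved crawlers honor, not an enforcement mechanism, so sites that want a hard block typically pair it with server-side filtering.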

All told, about 10% of enterprises have locked down their data and refuse to allow employees to use it for AI internally, according to Komprise, which recently released its 2023 Unstructured Data Management Report.

That report, which is based on a survey of IT leaders and executives at 300 large companies across the U.S. and the UK, found a shift in attitude toward AI and data. “Preparing for AI” was the top data storage priority for the next 12 months, with 31% of survey-takers expressing that sentiment, followed by cloud cost optimization at 22%.

But when it comes to company policies regarding enterprise use of AI, the survey detected big differences. The survey found:

- One quarter (26%) of survey respondents said they have no enterprise AI policy yet and are still trying to figure it out;

- Another quarter (24%) said employees are free to use any data with approved AI services;

- 21% of the survey takers said there are no restrictions on the use of AI;

- 20% said corporate data was available for use with approved AI services only;

- 10% said they completely forbid any AI.

“This year’s survey shows that in the blink of an eye, IT leaders are shifting focus to leverage generative AI solutions, yet they want to do this with guardrails,” Komprise CEO and co-founder Kumar Goswami said in a press release. “Data governance for AI will require the right unstructured data management strategy, which includes visibility across data storage silos, transparency into data sources, high-performance data mobility, and secure data access.”

Many AI use cases involve unstructured data, such as text and images. Companies are looking to AI for novel ways to put their vast collections of unstructured data–which is often said to comprise 80% of the world’s total data volume–to use to grow the business and improve customer satisfaction.

The characteristics of unstructured data differ from those of structured data, and it carries its own set of risks that companies must be aware of if they’re going to use unstructured data to power AI applications for competitive advantage.

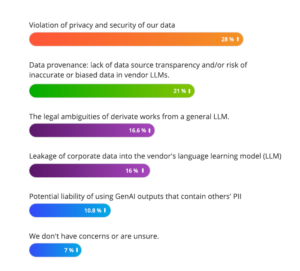

Potential violations of security and privacy were the number one concern about GenAI expressed in Komprise’s survey, cited by 28% of respondents. That was followed by data provenance, cited by 21%, particularly as it pertains to data source transparency and the possibility of inaccurate or biased data in large language models.

Other GenAI concerns include the legal ambiguities of derivative works from LLMs, cited by 17%; leakage of corporate data into a vendor’s LLM, cited by 16%; and the potential liability of using GenAI outputs that contain others’ personally identifiable information (PII). Only 7% said they had no concerns about GenAI.

“Generative AI raises new questions about data governance and protection,” says Steve McDowell, the principal analyst and founding partner at NAND Research. The Komprise survey “shows that IT leaders are working hard to responsibly balance the protection of their enterprise’s data with the rapid roll-out of generative AI solutions.”
