Numbers Station Sees Big Potential In Using Foundation Models for Data Wrangling

(Oselote/Shutterstock)

A startup called Numbers Station is applying the generative power of pre-trained foundation models such as GPT-4 to help with data wrangling. The company, which is based on research conducted at the Stanford AI Lab, has raised $17.5 million so far, and says its AI-based copilot approach is showing lots of promise for automating manual data cleaning tasks.

Despite huge investments in the problem, data wrangling remains the bane of many data scientists’ and data engineers’ existences. On the one hand, having clean, well-ordered data is an absolute requisite for building machine learning and AI models that are accurate and free of bias. Unfortunately, the nature of the data wrangling problem–and in particular the uniqueness of each data set used for individual AI projects–means that data scientists and data engineers often spend the bulk of their time manually preparing the data for use in training ML models.

This was a challenge that Numbers Station co-founders Chris Aberger, Ines Chami, and Sen Wu were looking to address while pursuing PhDs at the Stanford AI Lab. Led by their advisor (and future Numbers Station co-founder) Chris Re, the trio spent years working with traditional ML and AI models to address the persistent data wrangling gap.

Aberger, who is Numbers Station’s CEO, explained what happened next in a recent interview with the venture capital firm Madrona.

Numbers Station co-founders Chris Aberger and Ines Chami with Madrona Managing Director Tim Porter (left to right) (Image source: Madrona)

“We came together a couple of years ago now and started playing with these foundation models, and we made a somewhat depressing observation after hacking around with these models for a matter of weeks,” Aberger said, according to the transcript of the interview. “We quickly saw that a lot of the work that we did in our PhDs was easily replaced in a matter of weeks by using foundation models.”

The discovery “was somewhat depressing from the standpoint of why did we spend half of a decade of our lives publishing these legacy ML systems on AI and data?” Aberger said. “But also, really exciting because we saw this new technology trend of foundation models coming, and we’re excited about taking that and applying it to various problems in analytics organizations.”

To be fair, the Stanford postgrads saw the potential of large language models (LLMs) for data wrangling before everybody and his aunt started using ChatGPT, which debuted six months ago. They co-founded Numbers Station in 2021 to pursue the opportunity.

The key characteristic that made foundation models like GPT-3 useful for data wrangling task was their broad understanding of natural language and their capability to provide useful responses without fine-tuning or training on specific data, so-called “one-shot” or “zero-shot” learning.

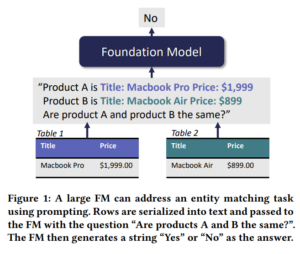

Image source: “Can Foundation Models Wrangle Your Data?”

With much of the core ML training done, what remained for Numbers Station was devising a way to integrate those foundation models into the workflows of data wranglers. According to Aberger, Chami wrote the bulk of the seminal paper on using foundation models (FMs) for data wrangling task, “Can Foundation Models Wrangle Your Data?” and served as the engineering lead to develop Numbers Station’s first prototype.

One issue is that source data is primarily tabular in nature, but FMs are mostly created for unstructured data, such as words and images. Numbers Station addresses this by serializing the tabular data and then devising a series of prompt templates to automate the specific tasks required to feed the serialized data into the foundation model to get the desired response.

With zero training, Numbers Station was able to use this approach to obtain “reasonable quality” results on various data wrangling tasks, including data imputation, data matching, and error detection, Numbers Station researchers Laurel Orr and Avanika Narayan say in an October blog post. With 10 pieces of demonstration data, the accuracy increases above 90% in many cases.

“These results support that FMs can be applied to data wrangling tasks, unlocking new opportunities to bring state-of-the-art and automated data wrangling to the self-service analytics world,” Orr and Narayan write.

The big benefit of this approach is that FMs can used by any data worker via their natural language interface, “without any custom pipeline code,” Orr and Narayan write. “Additionally, these models can be used out-of-the-box with limited to no labeled data, reducing time to value by orders of magnitude compared to traditional AI solutions. Finally, the same model can be used on a wide variety of tasks, alleviating the need to maintain complex, hand-engineered pipelines.”

Chami, Re, Orr, and Narayan wrote the seminal paper on the use of FMs in data wrangling, titled “Can Foundation Models Wrangle Your Data?” This research formed the basis for Numbers Station’s first product, a data wrangling copilot dubbed the Data Transformation Assistant.

The product utilizes publicly available FMs–including but not limited to GPT-4–as well as Numbers Station’s own models, to automate the creation of data transformation pipelines. It also provides a converter for turning natural language into SQL, dubbed SQL Transformation, as well as AI Transformation and Record Matching capabilities.

In March, Madrona announced it had taken a $12.5 million stake in Numbers Station in a Series A round, adding to a previous $5 million seed round for the Menlo Park, California company. Other investors include Norwest Venture Partners, Factory, and Jeff Hammerbach, a Cloudera co-founder.

Former Tableau CEO Mark Nelson, a strategic advisor to Madrona, has taken an interest in the firm. “Numbers Station is solving some of the biggest challenges that have existed in the data industry for decades,” he said in a March press release. “Their platform and underlying AI technology is ushering in a fundamental paradigm shift for the future of work on the modern data stack.”

But data prep is just the start. The company envisions building a whole platform to automate various parts of the data stack.

“It’s really where we’re spending our time today, and the first type of workflows we want to automate with foundation models,” Chami says in the Madrona interview, “but ultimately our vision is much bigger than that, and we want to go up stack and automate more and more of the analytics workflow.”

The company takes its name from an artifact of intelligence and war. Starting in WWI, intelligence officers set up so-called “numbers stations” to send information to their spies working in foreign countries via shortwave radio. The information would be packaged as a series of vocalized numbers and protected with some type of encoding. Numbers stations peaked in popularity during the Cold War and remain in use today.

Related Items:

Evolution of Data Wrangling User Interfaces

Data Prep Still Dominates Data Scientists’ Time, Survey Finds