SageMaker Bolstered with Better Controls, AI Governance

(Golden Dayz/Shutterstock)

AWS yesterday unveiled a host of enhancements for Amazon SageMaker, its end-to-end machine learning offering. Most prominent among them is a collection of new governance tools aimed at keeping ML projects on the straight and narrow, but there are many more new capabilities designed to make it easier to put AI applications into production.

As machine learning and AI usage spreads, companies are realizing they need better tools and processes for governing the new predictive capabilities, with an eye on preventing bad outcomes related to bias, ethical violations, and privacy violations.

AWS addressed some of these concerns with three new SageMaker tools–Role Manager, Model Cards, and Model Dashboard–which the cloud giant unveiled yesterday at its re:Invent conference in Las Vegas, Nevada.

Amazon SageMaker Role Manager is intended to provide finer-grained control over who has access to SageMaker resources, including the machine learning models as well as the data used to train them. According to Amazon SageMaker General Manager Ankur Mehrotra, Role Manager gives administrators the ability to onboard new users into SageMaker with just the right level of access.

“They want to make sure that the users have access to the tools they need, but they don’t want permission to be overly permissive,” Mehrotra tells Datanami. “They want to also reduce exposures.”

Guided prompts and prebuilt policies can help administrators quickly get new users set up in SageMaker with the right level of access, including the ability to access encrypted data and any networking restrictions that might be needed.

Just a few years ago, SageMaker was primarily used by data scientists. But as ML and AI spread, more stakeholders are being brought into the mix, which complicates governance, Mehrotra says. “The visibility and controls around how these models are vetted or tools are governed is getting harder,” he says.

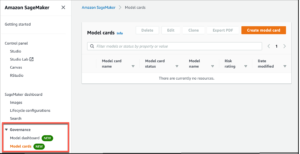

As more ML and AI applications make it into production, tracking them is becoming more difficult too. To that end, Amazon SageMaker Model Cards is designed to help data scientists and others keep a record of how the model training proceeded, how the model behaved, when problems surfaced, and what changes were made in response.

“As part of training, there are all kinds of things in terms of hyperparameters and other things that need to be observed,” Mehrotra says. “And recording these things is important because sometimes they may be needed for approvals. Let’s say you’ve done a POC and you want to approve it for use in production. So the right stakeholders may want to look at that information.”

Today, much of that ML model behavior information is tracked in an ad-hoc fashion using emails and spreadsheets. The new Model Cards offering is designed to provide a “single source of truth” for the ML model information. Data scientists can enter their observations in Model Cards, and the tool can also populate some information automatically, Mehrotra says.

“These [Model Cards] can be accessed at any time and can be used to refer to model history and what decisions they’re making,” he says.

Monitoring multiple ML models in production is the goal of Amazon SageMaker Model Dashboard, the third new governance tool launched this week. The company already offers some model monitoring capability with SageMaker Clarify and SageMaker Model Monitor.

If users are not using either of these two tools–which AWS recommends as a best practice, Mehrotra says–then Model Dashboard can give them performance data. Model Dashboard also provides model lineage and performance history, which can be useful for tracking models over the long term.

AWS has tens of thousands of customers using SageMaker, which makes more than 1 trillion predictions per month, Mehrotra says. As companies move their use of SageMaker and AI from the proof-of-concept (POC) stage into full production mode, they’re running into thorny problems around bias, fairness, and ethics.

“A lot of these are really hard problems, and we will continue to invest in making sure our customer can implement ML safely and responsibly,” Mehrotra says.

But wait, that’s not all! AWS unveiled a slew of other SageMaker enhancements at re:Invent.

It launched Next Generation SageMaker Notebooks, in which AWS bolsters its Jupyter-based notebook environment with built-in data prep tools to improve data quality. Multiple users can also access the same notebook, eliminating the need to manually share code and thereby boosting collaboration.

AWS is also giving SageMaker users an “easy button” for deployment. Instead of fussing around with dependencies, users can press a single button, and their SageMaker model will be automatically deployed on an EC2 instance of their choice. Behind the scenes, SageMaker bundles the model into a Docker container, with all of the dependencies automatically accounted for.

“Today going from the notebook world to the jobs that run in production at scale, that requires multiple steps… and it can be a laborious process,” Mehrotra says. “So we’re launching a new capability where in just a few clicks, you can automatically convert a notebook to a job that can run in production at scale.”

A new “shadow testing” feature lets users see how changes to a model will work in production, but without actually deploying the model to the production environment. “Shadow testing helps you build further confidence in your model and catch potential configuration errors and performance issues before they impact end users,” AWS’s Antje Barth writes in a blog post.
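The general idea behind shadow testing can be illustrated with a minimal Python sketch. This is not the SageMaker API: `serve_with_shadow` and the two toy models are hypothetical names invented for illustration, and the sketch assumes models are plain functions that return a number. Every request is answered by the production model only, while the candidate model sees a copy of the same traffic and any disagreement or failure is logged for later review.

```python
from typing import Callable, List, Tuple

# Assumption for illustration: a "model" is any function from a float to a float.
Model = Callable[[float], float]


def serve_with_shadow(
    production: Model,
    shadow: Model,
    requests: List[float],
    tolerance: float = 1e-6,
) -> Tuple[List[float], List[tuple]]:
    """Answer requests with the production model and mirror them to the shadow model."""
    responses = []   # what end users actually receive
    mismatches = []  # (request, production output, shadow output or error)
    for x in requests:
        prod_out = production(x)
        responses.append(prod_out)  # users only ever see production output
        try:
            shadow_out = shadow(x)
        except Exception as exc:
            # A failing shadow model must never affect the user-facing response.
            mismatches.append((x, prod_out, repr(exc)))
            continue
        if abs(shadow_out - prod_out) > tolerance:
            mismatches.append((x, prod_out, shadow_out))
    return responses, mismatches


def production_model(x: float) -> float:
    return 2.0 * x


def shadow_model(x: float) -> float:
    # Hypothetical candidate that diverges from production for larger inputs.
    return 2.0 * x if x < 3 else 2.0 * x + 1.0


responses, mismatches = serve_with_shadow(
    production_model, shadow_model, [1.0, 2.0, 3.0]
)
print(responses)
print(mismatches)
```

In this toy run the third request exposes a difference between the two models, yet the responses returned to users come from the production model throughout.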

SageMaker Data Wrangler, which AWS launched two years ago, helps users clean and prepare data for machine learning uses. However, AWS users discovered that the same data prep steps needed to be conducted to get the right answer during inference. To address this, AWS this week announced that Data Wrangler is now available as a “real-time inference endpoint” so customers can get consistent predictions during inference. It can work in batch and real-time mode, according to Donnie Prakoso’s blog post.
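Why the same prep steps matter at inference time can be shown with a small Python sketch. It does not use Data Wrangler or any AWS API; `PrepStep` and `fit_prep` are hypothetical names, and a simple standardization stands in for whatever transformations a real data flow would contain. The point is that the parameters learned from the training data are stored once and then reused unchanged on live requests.

```python
from dataclasses import dataclass
from statistics import mean, pstdev
from typing import List


@dataclass(frozen=True)
class PrepStep:
    """A fitted data prep step: parameters are fixed once training data is seen."""
    center: float
    scale: float

    def apply(self, value: float) -> float:
        # The identical arithmetic runs during training and during inference.
        return (value - self.center) / self.scale


def fit_prep(training_values: List[float]) -> PrepStep:
    """Learn standardization parameters from the training data only."""
    scale = pstdev(training_values) or 1.0  # guard against constant columns
    return PrepStep(center=mean(training_values), scale=scale)


training_values = [10.0, 20.0, 30.0]
prep = fit_prep(training_values)

# Training time: features are transformed with the fitted step.
train_features = [prep.apply(v) for v in training_values]

# Inference time: a live request goes through the very same fitted step,
# so the model sees inputs on the scale it was trained on.
live_feature = prep.apply(20.0)
print(train_features, live_feature)
```

If the inference path recomputed the mean and standard deviation from live traffic, or skipped the step entirely, the model would receive inputs on a different scale than it was trained on, which is the inconsistency the article describes.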

Finally, AWS is also introducing support for geospatial data in SageMaker. AWS is delivering pre-trained deep neural network (DNN) models and geospatial operators that make it easy to access and prepare large geospatial datasets, AWS’s Channy Yun writes in a blog post.
