HPE Adds Lakehouse to GreenLake, Targets Databricks

Imitation may be the sincerest form of flattery. But if you’re Databricks, you might not be too flattered by HPE, which today announced Ezmeral Unified Analytics, a new lakehouse offering based on Spark and Databricks’ Delta Lake technology. HPE claims customers can run it on-prem at two-thirds the cost of public cloud offerings. The company also launched Ezmeral Data Fabric Object Store, a Kubernetes-based object storage system aimed at making data available wherever it needs to be accessed.

HPE may be playing catch-up in big data analytics. But the systems giant, which acquired MapR Technologies in 2019, seems convinced it is poised to leapfrog the industry leaders with a third-generation platform that uses the latest technology to provide high-performance analytics and machine learning on prem and in the cloud, without the high costs or lock-in that customers have come to expect from the cloud.

“The way we think about it, we are in the third generation of data analytics,” said Vishal Lall, senior vice president and general manager of GreenLake Cloud Services, during a presentation Monday. “If you go back to the ’80s and ’90s, that was the era of warehousing with companies like Teradata, etc. Then it was all about structured data at that time. Then in the early 2000s, we entered this era of unstructured data, where big data lakes were formed [with] new companies like Hortonworks and Cloudera.”

The second-generation Hadoop offerings hit a wall when it came to supporting transactions, which forced an extensive amount of inefficient data copying, he said. Public cloud platforms and vendors like Databricks helped address that problem, but with caveats and higher costs.

But not everybody has a path forward, Lall said. “Customers who are on the second generation or the first generation technology are finding themselves to be stuck,” he said. “They find that there isn’t really much of a product roadmap when it comes to existing technologies like Cloudera or Hortonworks. And as they look at Spark or Delta Lake, they’re like, there’s nothing on premises.”

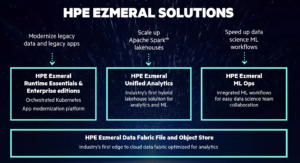

That’s the sweet spot that HPE is looking to occupy with its evolving Ezmeral offerings, which it first launched back in June 2020 as its platform for running containerized analytics, machine learning, and AI workloads. Now HPE says it has transformed Ezmeral into a first-of-its-kind platform that can run on-prem, on the edge, or in the cloud; work with structured and unstructured data; serve the needs of data analysts and data scientists; and do so without the expense and lock-in of proprietary solutions.

The offering, which is available as software or as a service, is composed of four elements, two of which are new: Ezmeral Analytics and the Ezmeral Data Fabric Object Store.

For starters, there is the Ezmeral Runtime (available in open source and enterprise versions), which provides Kubernetes-based container orchestration. Then there is Ezmeral Analytics (one of the new components), which includes Apache Spark, the Swiss Army knife of data engines, as well as Delta Lake, the open source storage layer created by Databricks that brings ACID transactions to Apache Spark and big data workloads. There is also the RAPIDS Accelerator for Apache Spark, software from Nvidia that uses GPUs to accelerate Spark workloads.

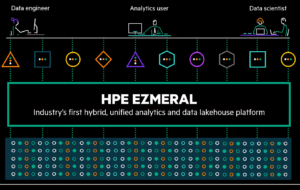

Ezmeral Analytics is “the industry’s first solution for hybrid lakehouses and analytics,” claims Matt Maccaux, HPE’s global field CTO for Ezmeral software. “We’re deploying those open-source libraries and we’re delivering that as a service through HPE GreenLake, but deployable across these various environments. It’s all based on Apache Spark at its core. So again, when users are writing their analytical workloads and their applications, they’re hitting the open source APIs while still getting that Databricks and Snowflake experience.”

Workload orchestration is handled through Ezmeral ML Ops, which supports the open source Airflow, MLflow, and Kubeflow packages. Finally, there is the new Ezmeral Data Fabric File and Object Store, which HPE says is the industry’s first storage repository that combines an S3-native object store with support for files, streams, and databases, all in one platform that runs on prem, on the edge, or in the cloud. On top of all this, HPE is working with dozens of ISVs, including H2O.ai, Starburst, and Dremio, to enable their offerings to run within Ezmeral Analytics.

It’s all about choice, Lall said. “We have a hybrid native environment, which is basically you can run your cloud where your data is using this platform, on premises or on co-los,” he said. “Really it’s about providing choice. That way you don’t feel locked in as a customer and then eventually that translates into more TCO [total cost of ownership].”

The ability to support different users and the tools they need, whether they are code-first data scientists working in a Jupyter notebook or data analysts working in Tableau, is a hallmark of HPE’s Ezmeral approach, Maccaux said.

“When we say unified analytics, what we mean is a common set of app stores where these users can come in to spin up their tools,” he said. “We’re deploying Apache Spark as that core runtime and the broker for a lot of these services. And then using things like Thrift server and Livy server and Delta Lake libraries that are all open source, but delivering that same Databricks and Snowflake experience wherever these users need to do their work, whether that’s on premises, whether it’s in the cloud, or it’s out at the edge.”

Ezmeral incorporates technology from HPE’s earlier acquisitions, including the Kubernetes operator it obtained by buying BlueData and the MapR platform it picked up in the fire sale of MapR Technologies in the summer of 2019. Ezmeral Analytics and Ezmeral Data Fabric Object Store are due to ship in the first half of 2022.