Facts Can Change, and AI Can Help

As understanding of an issue evolves, the best set of available facts often changes: for instance, mask-wearing wasn’t recommended early in the COVID-19 pandemic, but now, it’s common knowledge that mask-wearing is helpful to stop the spread of the disease. But what happens to the information published across the internet early in the pandemic which, while accurate when written, is now outdated and misleading? At MIT’s Computer Science and Artificial Intelligence Library (CSAIL), researchers are applying AI as a solution – and helping to improve the AI models themselves in the process.

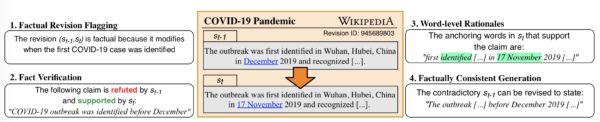

“[Our AI models] can monitor updates to articles, identify significant changes, and suggest edits to other related articles,” explained Tal Schuster, lead author of the research and a PhD student at CSAIL. “Importantly, when articles are updated, our automatic fact verification models are sensitive to such edits and update their predictions accordingly.”

As a barometer for the most recent information, the team is using edits to major Wikipedia pages. This, of course, requires filtering out vast numbers of formatting-related and grammatical edits. “Automating this task isn’t easy,” Schuster said. “But manually checking each revision is impractical as there are more than six thousand edits every hour.”

Using a set of about 200 million of those revisions, the researchers applied deep learning to identify the 300,000 most likely to represent factual changes. About a third of these changes were annotated by hand for the first run.

“Achieving consistent high-quality results on this volume required a well-orchestrated effort,” says Alex Poulis, creator and senior director of TransPerfect DataForce, which assisted with the annotation. “We established a group of 70 annotators and industry-grade training and quality assurance processes, and we used our advanced annotation tools to maximize efficiency.”

Then, using that annotated data (collectively called the “Vitamin C” dataset), the process was automated, allowing the model to detect around 85% of fact-change revisions.

Building on this model, they introduced a second model for automatically suggesting revisions using sequence-to-sequence transformation. “Instead of teaching the model that the population of a certain city is this and this, we teach it to read the current sentence from Wikipedia and find the answer that it needs,” Schuster said.

The researchers describe their dataset generation in a paper, “Get Your Vitamin C! Robust Fact Verification with Contrastive Evidence,” which will be presented at the NAACL Conference on Computational Linguistics this summer. Furthermore, the team made the Vitamin C dataset public to assist other researchers working in fact verification.

“We hope both humans and machines will benefit from the models we created,” said Schuster.