Ataccama Puts Its Name on the Data Map

(Titima-Ongkantong/Shutterstock)

When Roman Kucera joined Ataccama over a decade ago, the company had a single product: a data quality tool. Today, Kucera is the CTO, and Ataccama sells a range of products, including a data catalog, ETL, MDM, and data governance tools. And with yesterday’s acquisition of Tellstory, you can add data visualization to that list.

Ataccama (pronounced “ata-KA-ma”) may not be a household name in the big data space, but it has quietly built itself into a midsize player in the market for data management tools. It lacks the name recognition of some of its larger competitors, yet it has grown to support more than 350 customers around the world, including enterprises like American Airlines, Blue Cross Blue Shield, and T-Mobile.

In a recent interview with Datanami, Kucera recounted the growth of the company, which is named after the extremely dry desert in northern Chile but is headquartered in Toronto, Ontario.

“When we started, all of our customers would come to us with project-oriented tasks,” Kucera said in a call from his office in Prague, Czech Republic. “Somebody from the risk department of a bank needs to produce a report for a regulatory body, or somebody from marketing wants to do a marketing campaign. And somebody would analyze it, discover they get data from various data sources, and found out there are issues with it. ‘Hey, Ataccama, can you help?’”

The company had developed a well-regarded data quality tool. The software helped users prepare their data for analysis. That included transforming their data into the right format, identifying inconsistencies in the data, removing duplicate records, and basically consolidating it into a useful form. At this time, the software worked mostly in a manual manner, without the benefit of parallel processing or AI (but that was coming).

“About 2013, we started hearing about big data. At this point, it was more of a buzzword,” Kucera said. “Customers started asking, ‘Do you support big data?’ We did some research, and thought it was interesting. And we basically adapted our data processing engine to run at scale, on Hadoop at the time, and now on Spark.”

With Ataccama running on popular big data engines, the use cases suddenly changed. Instead of working with a handful of data sources, perhaps five to 10, customers were now looking to Ataccama to help them get a handle on thousands of data sources. Along the way, big data was becoming a big mess.

“The challenge was suddenly they would start creating these data lakes where they would put all the data from across the company. They would get some external data as well and put it into the data lake,” Kucera said. “They start managing teams of data scientists…These people would come and say, ‘Hey quality is poor. We spend 80% to 90% of our time trying to find where the data is and what data we can use and fixing this data quality issue. Please fix it.’”

The challenge was that the standard rules-based approach to building and managing ETL pipelines was no longer working. Ataccama realized there needed to be a centralized repository that describes its customers’ data and the attributes of that data, and so it started building a data catalog.

The company realized that, at the scale of its customers’ big data projects, this information could never be entered manually, and so it sought to populate its data catalog by analyzing the database connections. “We try to provide as much information as possible just by looking at the data with some algorithms,” Kucera said. “We first digest the technical metadata. Then we share basic statistical metrics, what are the most common patterns in the data set.”
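The kind of profiling Kucera describes can be illustrated with a minimal Python sketch. It is not Ataccama's implementation; the function names and the character-class pattern notation (`9` for a digit, `A` for a letter) are assumptions made for the example.

```python
from collections import Counter

def value_pattern(value: str) -> str:
    """Reduce a value to its shape: digits become 9, letters become A,
    and every other character is kept as-is."""
    out = []
    for ch in value:
        if ch.isdigit():
            out.append("9")
        elif ch.isalpha():
            out.append("A")
        else:
            out.append(ch)
    return "".join(out)

def profile_column(values):
    """Compute basic statistics and the most common patterns for one column."""
    non_null = [v for v in values if v is not None and v != ""]
    patterns = Counter(value_pattern(v) for v in non_null)
    return {
        "count": len(values),
        "null_count": len(values) - len(non_null),
        "distinct_count": len(set(non_null)),
        "top_patterns": patterns.most_common(3),
    }

# Hypothetical sample column, invented for illustration.
sample = ["416-555-0199", "647-555-0123", None, "n/a", "416-555-0199"]
profile = profile_column(sample)
```

In this sample, the dominant pattern `999-999-9999` hints that the column holds phone numbers, while the stray `A/A` pattern flags a placeholder value that a data steward may want to review.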

Rules-based approaches are also useful, particularly when the data relationships are already well understood. For example, if a customer already has created a glossary of well-defined terms, such as what constitutes an “email address,” then Ataccama’s data catalog can map its understanding to that definition.
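A rules-based mapping of this kind can be sketched in a few lines of Python. The glossary contents, the deliberately simplified email pattern, and the 90% match threshold are all assumptions for illustration, not details of Ataccama's product.

```python
import re

# Hypothetical business glossary: each term is paired with a validation rule.
# The email rule is intentionally loose; real-world validation is stricter.
GLOSSARY = {
    "email address": re.compile(r"[^@\s]+@[^@\s]+\.[^@\s]+"),
    "ISO date": re.compile(r"\d{4}-\d{2}-\d{2}"),
}

def classify_column(values, threshold=0.9):
    """Return the first glossary term whose rule matches at least
    `threshold` of the non-empty values, or None if no term qualifies."""
    non_null = [v for v in values if v]
    if not non_null:
        return None
    for term, rule in GLOSSARY.items():
        hits = sum(1 for v in non_null if rule.fullmatch(v))
        if hits / len(non_null) >= threshold:
            return term
    return None

# Hypothetical column values, invented for illustration.
label = classify_column(["ada@example.com", "roman@example.com"])
```

The threshold leaves room for a few dirty values in an otherwise well-formed column, which is a common compromise when the rule is applied to real data.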

The company prefers to take the traditional and simple approach when it can. But many situations are not simple, and over time, Ataccama has adapted its software to handle complex situations.

“The more sophisticated approaches involve some machine learning,” Kucera said. “We create this fingerprint…that is not very understandable to the end user. But we train the AI to preserve similarity in this metric. So if there’s two different data sets that represent similar values, they would have similar fingerprint.”

Whenever new data is entered into a customer’s data lake, Ataccama’s AI starts profiling this data to see if it’s similar to something that it’s seen before. If it’s close enough in size and shape to a piece of data that’s already indexed, then the software can automatically categorize it, which helps minimize the amount of manual labeling required by people.
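The idea of matching new data against known fingerprints can be sketched as follows. Kucera describes a learned fingerprint; this example swaps in a hand-crafted feature vector compared with cosine similarity, purely to show the matching step. The features, the catalog entries, and the 0.95 cutoff are all assumptions.

```python
import math

def fingerprint(values):
    """Hand-crafted stand-in for a learned fingerprint:
    [scaled mean length, digit share, letter share, other-character share]."""
    texts = [str(v) for v in values if v is not None]
    total = sum(len(t) for t in texts)
    if total == 0:
        return [0.0, 0.0, 0.0, 0.0]
    digits = sum(ch.isdigit() for t in texts for ch in t)
    letters = sum(ch.isalpha() for t in texts for ch in t)
    mean_len = total / len(texts)
    return [min(mean_len / 50.0, 1.0), digits / total,
            letters / total, (total - digits - letters) / total]

def cosine(a, b):
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def suggest_label(values, catalog, min_similarity=0.95):
    """Return the label of the most similar known fingerprint,
    or None if nothing is similar enough to label automatically."""
    fp = fingerprint(values)
    best_label, best_score = None, 0.0
    for label, known in catalog.items():
        score = cosine(fp, known)
        if score > best_score:
            best_label, best_score = label, score
    return best_label if best_score >= min_similarity else None

# Hypothetical catalog of already-labeled data sets.
CATALOG = {
    "phone": fingerprint(["416-555-0199", "647-555-0123"]),
    "name": fingerprint(["Roman Kucera", "Ada Lovelace"]),
}
suggestion = suggest_label(["905-555-0147", "289-555-0188"], CATALOG)
```

When no known fingerprint clears the cutoff, the function returns `None`, which corresponds to leaving the data set for a person to label.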

“This way we can populate the customer’s catalog much more quickly than traditional tools where you have to manually go through each and every data set,” Kucera said.

As the data science or analyst teams start working with the data and creating derived data sets, the Ataccama software can keep track of this too, to ensure that the lineage is maintained and the next set of workers aren’t confused about where the data came from and what the metrics mean. This is an important element of any data governance project, but it’s often overlooked as data activity grows.

Some customers want to centralize data, often in cloud data warehouses or data lakes, while other customers want to maintain control over their data. The ability to work in both centralized and decentralized use cases is an advantage of the Ataccama software, which is called the One Unified Platform, Kucera said.

“That’s part of the challenge,” he said. “We try to have a central repository for the metadata. But the processing itself can be distributed…closer to the data set, which can be better for performance reasons and security, if you don’t want to transfer it all to one place.”

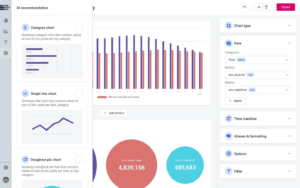

Ataccama plans to incorporate Tellstory’s data visualization software into its data management suite

Ataccama requires a Spark cluster, which can be provided by Cloudera’s Hadoop distribution, Amazon Web Services EMR, or Databricks. Many of Ataccama’s customers are using the suite to manage and prep data that’s destined for cloud data warehouses, such as AWS Redshift and Snowflake.

About 60% of the company’s customer base is currently using cloud data warehouses, Kucera says. “Snowflake is very popular these days, and Redshift as well. But Snowflake more so. We see big traction there,” he said.

ETL and ELT offerings are also gaining traction in the market, thanks to the explosion of analytics activity occurring in the cloud. Ataccama can be used as an ETL tool as well. “We have our own engines for the transformations,” Kucera said. “We support a layered approach where we basically represent the data transformation as a configuration.”
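Representing a transformation as configuration can be illustrated with a small Python sketch. The step vocabulary (`strip`, `lowercase`, `drop_duplicates`) and the dictionary layout are hypothetical and are not Ataccama's configuration format; the point is only that the pipeline is data that a separate engine interprets.

```python
def apply_column(fn):
    """Build an operation that applies `fn` to one column of every row."""
    def op(rows, step):
        col = step["column"]
        return [{**row, col: fn(row[col])} for row in rows]
    return op

def drop_duplicates(rows, step):
    """Keep the first row seen for each distinct value of the key column."""
    seen, out = set(), []
    for row in rows:
        key = row[step["key"]]
        if key not in seen:
            seen.add(key)
            out.append(row)
    return out

# The engine: a lookup table from operation names to implementations.
OPERATIONS = {
    "strip": apply_column(str.strip),
    "lowercase": apply_column(str.lower),
    "drop_duplicates": drop_duplicates,
}

def run_pipeline(rows, pipeline):
    """Apply each configured step to the rows, in order."""
    for step in pipeline:
        rows = OPERATIONS[step["op"]](rows, step)
    return rows

# The transformation itself is plain data, so it can be stored and versioned.
PIPELINE = [
    {"op": "strip", "column": "email"},
    {"op": "lowercase", "column": "email"},
    {"op": "drop_duplicates", "key": "email"},
]

# Hypothetical input rows, invented for illustration.
rows = [
    {"email": " Ada@Example.com "},
    {"email": "ada@example.com"},
    {"email": "roman@example.com"},
]
cleaned = run_pipeline(rows, PIPELINE)
```

Because the pipeline is a list of plain dictionaries, the same definition could in principle be handed to a different engine without being rewritten.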

While AI is helping to take some of the manual effort out of cleaning and transforming data, there is still quite a bit of manual effort. “The automated cleaning and transformation are still in very early stages,” Kucera said. “Most of our customers want to automate the monitoring, but they still do transformations and cleaning in an assisted manner. We have metadata data available. It still helps. But the cleaning itself right now is mostly done manually.”

Yesterday the company bolstered its data suite with the acquisition of Tellstory, a data visualization startup. Ataccama plans to integrate the data visualization piece directly into its One Unified Platform.

Ataccama says the Tellstory data visualizations will help users by “providing rich data stories, relevant to a particular user or a team, on top of cataloged data assets.” This will enable data stewards and other users to add business context to the data, thereby providing “a deeper view into the business value of given data assets without the user ever leaving the platform,” the company said.

The Tellstory data visualization software will also be used to generate shareable reports for data quality projects and for MDM engagements. The entire Tellstory team is making the move to Ataccama, which did not disclose terms of the acquisition. But one thing is for certain: this isn’t the last time you’ll hear about Ataccama.
