Analytics Show Facebook Coddling Trump’s Falsehoods

Former president and formerly prolific tweeter Donald Trump has been banned from most major social media sites since the days following the attack on the U.S. Capitol. In the intervening weeks, many of those sites have been forced to grapple with Trump’s extensive litany of falsehoods, which, until the last weeks of his presidency, were by and large amplified by their platforms. One of those platforms – Facebook – is now on the verge of announcing whether it will lift restrictions on Trump’s account. Ahead of that announcement, The Markup has published an analysis of Facebook’s handling of false posts, concluding that Facebook generally treated falsehoods with “kid gloves” – particularly when it came to Trump’s.

The analysis drew on The Markup’s Citizen Browser project, which uses a desktop app to regularly collect HTML source code and screenshots of Facebook feeds from a diverse panel of participants across the U.S. That data – which excludes personal messages and the users’ actual browsing information – is then anonymized, uploaded to the cloud, and analyzed.

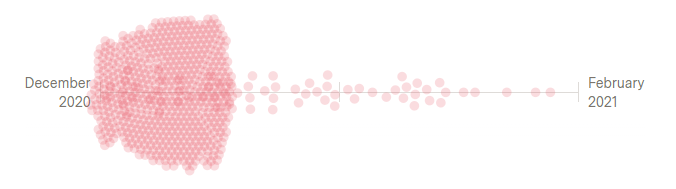

During the period in question – December 2020 to February 2021 – The Markup’s roughly 2,200 panelists’ feeds included 682 flagged posts: that is, posts that were “false, devoid of context, or related to an especially controversial issue.” However, 588 of these were automatic flags pinned on virtually anything election-related.

Of the remaining 94 posts, a measly 12 were explicitly labeled “false,” while 38 were flagged under the far vaguer label “missing context.” Many posts containing demonstrable lies received noncommittal automatic flags urging caution rather than explicit fact-checks. Overall, older Americans and Trump voters were more likely to encounter these weakly flagged posts.

The chief flag-ee among the sample was, of course, Donald Trump. However, of the 12 posts that were marked “false,” none belonged to Trump, despite his repeated posts suggesting that President Biden’s win was statistically impossible, illegal, and so on.

Facebook, for its part, has argued that it is not its place to “referee political debates and prevent a politician’s speech from reaching its audience.” However, Facebook also claimed that it warned users when they attempted to share flagged content – a warning that did not appear when The Markup tested the functionality. After being asked about this incongruity, Facebook added the warning it had promised.

“My guess would be that Facebook doesn’t fact-check Donald Trump not because of a concern for free speech or democracy, but because of a concern for their bottom line,” Ethan Porter, an assistant professor in the School of Media and Public Affairs at George Washington University, told The Markup. “They’ve chosen not to aggressively fact-check. As a result, more people believe false things than would otherwise, full stop.”