NLP in the Cloud Is Growing, But Obstacles Remain

More than three-quarters of natural language processing (NLP) users utilize a cloud NLP service, according to the 2020 NLP Industry Survey. While cloud NLP workloads are on the rise, there are barriers to using the technology in the cloud, says Ben Lorica, one of the authors of the study.

Overall, this is a great time to be using NLP technology to process and analyze text, Lorica and Paco Nathan write in the 2020 NLP Industry Survey, which was sponsored by John Snow Labs, developer of the open source Spark NLP library that’s used in the healthcare field.

For starters, the budgets for NLP use cases are expanding quite a bit. The capabilities, accuracy, and scalability of NLP models and services, most of which at this point are based on neural networks, have also gone up, says Lorica, who is the principal of Gradient Flow Research (which conducted the survey) and also the chair of the upcoming NLP Summit.

“NLP is definitely a lot more accurate and a lot more scalable,” Lorica tells Datanami. “You have a lot more options as far as tools, and the number of people who are familiar with these tools has grown a lot more, as opposed to when I was using these things 10 years ago.”

Even compared to just two years ago, he says, it has become easier for non-experts to pick up an NLP model or service and start using it for things like document classification, named entity recognition, sentiment analysis, or building a knowledge graph, which were the top four NLP use cases, respectively, according to the survey.

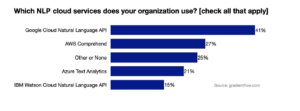

As NLP technologies and applications have grown and matured, so too have cloud-based NLP services. According to the survey, which was conducted online in July and August and involved 571 individuals from 50 countries, 77% of respondents used at least one of the four main cloud NLP offerings, with Google Cloud being the most popular.

The survey also found that 64% of respondents who were considered “technical leaders” were using one or more cloud NLP services, and 65% of those who were in production with NLP were using at least one cloud NLP service.

While the survey indicates that the cloud is a hotbed of NLP activity, it doesn’t come without caveats. Survey-takers indicated they had several concerns about using cloud NLP services, specifically around cost, customization, accuracy, and security.

The top concern with cloud NLP is cost. “You’re paying every time you touch a document, every time you parse a sentence or a word,” Lorica says. “Basically, it adds up fast. In other words, if you’re still playing around, exploring things, it may take you a while to figure out what you’re doing.”
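To make the "it adds up fast" point concrete, here is a minimal sketch of how pay-per-use charges accumulate. It assumes a hypothetical billing scheme in which each document is charged in units of 1,000 characters, rounded up per document. The function name, the unit size, and the price are illustrative assumptions, not figures from the survey or from any provider's price list.

```python
import math


def estimate_cloud_nlp_cost(doc_char_counts, chars_per_unit=1000, price_per_unit=0.001):
    """Estimate the charge for sending documents to a pay-per-use NLP API.

    Assumption (hypothetical): every non-empty document is billed in whole
    units of `chars_per_unit` characters, rounded up per document.
    `price_per_unit` is a placeholder value, not a real provider price.
    """
    # Each document is rounded up to whole billing units on its own,
    # so many short documents cost more than one long document of the same total size.
    units = sum(math.ceil(n / chars_per_unit) for n in doc_char_counts if n > 0)
    return units * price_per_unit


# Exploration multiplies the bill: re-running the same corpus for every
# experiment means paying for every document again on each pass.
corpus = [2500, 400, 1200]   # character counts of three documents
experiments = 10             # number of exploratory passes over the corpus
total = experiments * estimate_cloud_nlp_cost(corpus)
print(f"Estimated spend across {experiments} passes: {total:.2f}")
```

Under these assumptions, the cost scales with both corpus size and the number of exploratory passes, which is why open-ended experimentation is where per-call pricing hurts most.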

Secondly, the cloud NLP services are somewhat generic. They generally are called via an API, which delivers a result based on text provided by the user. However, users don’t have access to the underlying NLP models, which means that customers can’t tune them. That limits their usefulness for more advanced use cases, Lorica says.

“For example, if you want to use natural language technologies for healthcare, it turns out they have different ways of talking,” Lorica says. “So ER doctors may use different verbs than radiologists. Everyone has a certain level of tuning that they need to do, even for these really advanced models that you’ve been reading about. And I don’t know how you do that in the cloud. I don’t think the cloud NLP models are going to be easy for you to tune.”

The good news here is that actual NLP practitioners (as well as would-be practitioners) have a slew of tunable open source NLP models to choose from. Spark NLP was the most popular NLP library, followed by spaCy, AllenNLP, NLTK, Stanford CoreNLP, Gensim, and Hugging Face.

Among those who are using one or more of these NLP models in production, scalability issues and integration with the overall software stack were the biggest issues. The difficulty level to install, learn and use the NLP library was the third most-cited concern, followed by accuracy levels.

Given the state of NLP today, Lorica sees the potential for organizations to get started with cloud NLP services to see how text processing and analysis can be used, and then perhaps move to running their own NLP models (either on-prem or on cloud-based IaaS) when they hit barriers around tuning or cost.

“I would say if you want to get started, you can get started in the cloud. You can feed your data and you get the things you need done,” he says. “But over time, maybe as you need to tune it, it gets a little harder, and the pricing is not as optimal.”

Lorica identified a need for cloud providers to lower their pricing to make it more conducive to exploring NLP and running NLP in production. “You need to tune these models. That part is going to become easier,” he says. “I think people are going to focus around how do I build tools to enable developers to really tune these things for their applications.”

The report can be accessed at https://gradientflow.com/2020nlpsurvey/.
