Making Sense of Sound: What Does Machine Learning Mean for Music?

(whiteMocca/Shutterstock)

AI has proven to have a considerable impact on some major industries. While autonomous cars and virtual assistants are slowly becoming a reality, the creative industry has been experimenting with AI for several years already. Does it have meaningful implications and if so, what will it bring in the future?

The first computer-assisted music score is widely considered to date back to 1957, when composers Lejaren Hiller and Leonard Isaacson unveiled the Illiac Suite for string quartet. Drawing on the connection between mathematics and music, Hiller was able to program the computer to come up with a stunning four-movement musical score.

One of the most notable AI-assisted music projects happened two years ago. Singer-songwriter Taryn Southern decided to make AI a centerpiece of her music production workflow, releasing the album I Am AI. For the first time in history, all of the composition and production on an album was done through the collaborative efforts of four AI programs (Amper Music, IBM Watson Beat, Google Magenta, and AIVA), machine learning consultants, and a musician, Taryn herself.

It’s important to note that AI didn’t produce the songs from start to finish. It just generated ideas based on set parameters. Afterward, all the stems had to be adjusted, structured, and mixed by a human. However, we are definitely not far from the point when a machine can write a song entirely on its own.

(Courtesy Hello World album)

In 2018, French music producer Skygge released a multi-genre album composed in collaboration with the AI system Flow Machines. The album was regarded as the first ‘good’ AI album by BBC Culture. The co-producer of the album, Michael Lovett, perfectly sums up the experience of writing music with AI: “It’s a bit like having somebody playing a piano in the corner of your studio. They’re kind of playing stuff and most of it is rubbish, but at some point you’re like, ‘Oh, what’s that? That’s interesting.’ And it’ll become the jumping-off point for a song.”

And what about the lyrics? Researchers at the University of Antwerp and the Meertens Institute created a rap lyrics generator called Deep Flow. After being fed an enormous number of hip-hop lyrics, the algorithm has learned to ‘spit’ arguably believable lyrics of its own. In case you want to prove the creators wrong, you can play the game on the Deep Flow website, which challenges players to tell machine-generated lyrics from those taken from actual songs.

Right now, computer-generated music is mostly found in functional and background compositions. For example, amateur filmmakers and YouTubers often face the challenge of finding original music for their works. Not only is it often hard to find the right artist, but content creators also need to consider the time it takes an artist to produce music and the costs associated with it. Amper Music can generate an entire song based on certain parameters like key, tempo, style, and mood in a matter of minutes. Such technology may significantly lower the entry barriers to the creative industries.

AI-assisted Audio Plugins

While music is a creative outlet, technology has always been a huge part of the industry. Nowadays, even if a certain song features recordings of real instruments, it requires a very specific skillset, extended knowledge, and sufficient experience to process these recordings properly.

Such work is usually done with the help of digital audio workstations (DAWs), hardware equipment, and software plugins. Currently, the most advanced audio software developers are looking for ways to build AI into their plugins. Let’s look at some notable examples.

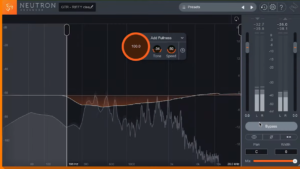

US-based company iZotope has invested heavily in machine learning applications in recent years. For example, its intelligent channel strip plugin Neutron uses deep learning to identify what kind of instrument is being played and, based on that assumption, recommends EQ or compression settings to the user. iZotope has successfully adopted machine learning across its products, with AI assistants for tasks ranging from vocal processing to mastering.

Landr is one of the most popular online mastering services that apply machine learning. A user uploads a song, and Landr’s algorithm analyzes it and exports the mastered song, all in a matter of minutes. While opinions on the quality vary, the company’s CEO stated that, based on a blind test the company ran in 2017, Landr did the job as well as or even better than the world’s top mastering engineers. The power of the service is that the algorithm improves with every new upload.

Another example of an ML application in music production is a startup called Samply, launched by MIT student Justin Swaney. With his tool, Swaney addresses music producers’ well-known problem of finding and matching the right samples. Samply uses machine learning to analyze audio waveforms and then organizes samples by their sonic characteristics. The end result is an automatically sorted sample library, where similar sounds are grouped. This enables musicians to find matching sounds faster and spend more time on creative work instead.
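To make the idea of grouping samples by sonic characteristics more concrete, here is a minimal Python sketch. It is not Samply's method, which the article does not describe in detail. The synthetic sine waves, the two crude features (RMS loudness and zero-crossing rate), the greedy grouping rule, and the threshold value are all assumptions chosen for illustration.

```python
import math

def sine(freq_hz, seconds=0.1, rate=8000, amp=0.5):
    """Synthetic stand-in for a loaded audio sample (assumption for the demo)."""
    n = int(seconds * rate)
    return [amp * math.sin(2 * math.pi * freq_hz * i / rate) for i in range(n)]

def features(wave):
    """Two crude descriptors: loudness (RMS) and brightness (zero-crossing rate)."""
    rms = math.sqrt(sum(x * x for x in wave) / len(wave))
    crossings = sum(1 for a, b in zip(wave, wave[1:]) if (a < 0) != (b < 0))
    return (rms, crossings / len(wave))

def group_similar(library, threshold=0.05):
    """Greedy grouping: a sample joins the first group whose seed is close enough."""
    groups = []  # list of (seed_features, [sample names])
    for name, wave in library.items():
        f = features(wave)
        for seed, names in groups:
            if math.dist(f, seed) < threshold:
                names.append(name)
                break
        else:
            groups.append((f, [name]))
    return [names for _, names in groups]

# Hypothetical library: two low, bass-like tones and two high, hat-like tones.
library = {
    "sub_bass_a": sine(50),
    "sub_bass_b": sine(60),
    "hat_a": sine(3000),
    "hat_b": sine(3100),
}
groups = group_similar(library)
print(groups)
```

A real tool would likely use far richer features and a learned similarity measure, but the overall shape (describe each sound with numbers, then group sounds whose numbers are close) is the same.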

Onsets and Frames, provided by Google Magenta, could be a useful tool even for music hobbyists. It converts solo piano recordings into MIDI (Musical Instrument Digital Interface). You can think of MIDI as computerized sheet music. Once the transcription is complete, it can be used to play the same piece of music on a different instrument, like a synthesizer.
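The "computerized sheet music" comparison can be illustrated with a short Python sketch. It does not use Onsets and Frames or any Magenta code. The `Note` structure, with start and end times in seconds, is a simplified, hypothetical representation (real MIDI files store note-on and note-off messages with tick-based timing). The frequency-to-note formula is the standard equal-temperament mapping in which A4 at 440 Hz is MIDI note 69.

```python
import math
from typing import NamedTuple

class Note(NamedTuple):
    pitch: int      # MIDI note number, 0-127 (60 = middle C)
    velocity: int   # how hard the key was struck, 0-127
    start: float    # seconds (simplified; real MIDI uses ticks)
    end: float      # seconds

def frequency_to_midi(freq_hz):
    """Map a frequency to the nearest MIDI note, with A4 = 440 Hz = note 69."""
    return round(69 + 12 * math.log2(freq_hz / 440.0))

def transpose(notes, semitones):
    """Shift every note by a number of semitones, leaving timing untouched."""
    return [n._replace(pitch=n.pitch + semitones) for n in notes]

# A C major arpeggio (C4, E4, G4) given as detected frequencies in Hz.
melody = [
    Note(frequency_to_midi(f), 80, i * 0.5, i * 0.5 + 0.4)
    for i, f in enumerate([261.63, 329.63, 392.00])
]
octave_up = transpose(melody, 12)
print([n.pitch for n in melody], [n.pitch for n in octave_up])
```

Because the notes are stored as data rather than as sound, the same melody can be transposed, edited, or sent to any instrument that understands note numbers.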

Accusonus, a Greece-based company, raised $3.3 million this November to develop AI-powered audio plugins. Their most recent plugin bundle consists of easy-to-use tools for video content creators and podcast producers who need to remove background noise and audio artifacts from their works. Every plugin in the bundle has a very small number of parameters to tweak, which makes audio editing accessible for non-professional users.

Limitations

While AI-powered applications are becoming widely adopted, there are still major bottlenecks in the way. One of the main ones is getting access to big data to feed machine learning algorithms. The software for creating neural networks is very accessible (Google TensorFlow, for example), and with the rapid evolution of cloud computing, computational power is no problem either, even for smaller companies. However, when it comes to data, tech giants like Google protect access to it at all costs.

Another problem is the processing power of consumer-grade computers. A high CPU load is a common problem when using DAWs, and real-time machine learning requires huge amounts of computing resources. This is no small bottleneck, particularly for developers, because learning in real time would allow plugins to improve much faster. For now, machine learning is mostly used at the development stage of audio plugins and software.

For example, Acustica gives a prototype of its plugin to a professional audio engineer, who uses it on a regular basis. Based on how and when he or she tweaks certain settings, the plugin processes this data and learns to replicate those choices. This way, a deep learning-powered plugin is able to intelligently identify problems in the mix and address them accordingly.
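The general idea of learning from an engineer's choices can be sketched in a few lines of Python. This is not Acustica's approach, which relies on deep learning and is not detailed here. The sketch swaps in a much simpler nearest-neighbor lookup, and the feature names, the logged values, and the `suggest_gain` helper are all hypothetical.

```python
import math

# Hypothetical log of an engineer's decisions. Each entry pairs two mix
# descriptors scaled to 0..1 (brightness, dynamics) with the high-shelf EQ
# gain in dB that the engineer chose for that mix.
observed = [
    ((0.9, 0.8), -2.0),  # bright, punchy mix: highs were cut
    ((0.1, 0.3), 3.0),   # dull, flat mix: highs were boosted
    ((0.5, 0.5), 0.0),   # balanced mix: left untouched
]

def suggest_gain(mix_features, log=observed, k=1):
    """Average the gains the engineer applied to the k most similar mixes."""
    nearest = sorted(log, key=lambda row: math.dist(row[0], mix_features))[:k]
    return sum(gain for _, gain in nearest) / len(nearest)

new_mix = (0.8, 0.7)  # a fairly bright, punchy mix the plugin has not seen
print(suggest_gain(new_mix, k=1), suggest_gain(new_mix, k=2))
```

The more decisions are logged, the more closely the suggestions can track what the engineer would have done, which is why access to usage data matters so much for this kind of plugin.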

Retaining Creativity

The penetration of AI into creative fields will always raise protests from certain parts of the community. However, this is a very familiar scenario for the music industry, which goes through it roughly once a decade. We have seen it with the introduction of samplers, DAWs, Auto-Tune, and beat-matching, all of which were heavily criticized at first for removing the human element and creativity from the process.

Whether to let programs do the artist’s job in full or just assist in the process is a matter of individual choice, though. AI and machine learning programs can serve as an extension of artists’ capabilities and shorten the time it takes to perform tasks that can be automated. The technology is meant to assist, not to substitute.

The same thing happens in sample-based music. With popular services like Splice, anyone can download a few perfect loops, match them together in terms of tempo, and call it a day.

Machine learning algorithms make decisions based on data and never approach tasks creatively. While AI can come up with a great suggestion about the note to be played next in the melody, this suggestion will always be predictable. Predictability often eliminates creativity, and the latter will certainly remain exclusive to humankind.

With the rapid development of ML-based mastering services, it’s not surprising that some mastering engineers might worry about losing their jobs. However, that fear is certainly a big exaggeration. Although music mastering might appear to be entirely technical work, communication between engineers and artists is a core factor. For example, Landr has a very limited number of parameters for steering a mix in the desired direction, while mastering engineers can adjust a mix according to very specific needs. You can compare it to buying a bicycle. While an average consumer would most certainly buy one off the mass market, a professional cyclist would spend thousands of dollars on a custom one.

What’s Next?

Thanks to AI-powered tools, we are likely to hear more music coming out. Both artists and audio engineers will have more time for creativity, leaving repetitive tasks to machines.

Moreover, with the advancement of tools like Amper, we will probably see more music hobbyists. Pascal Pilon, CEO at Landr, argues that our relationship to music will most likely be social media-based. With such modest knowledge and skill barriers to writing music with AI, making music and getting ‘listens’ will become similar to posting photos on Instagram and getting likes.

About the author: Alex Paretski is a knowledge manager at Itransition, a Denver, Colorado-based software development company.
