2018: A Big Data Year in Review

(gguy/Shutterstock)

As 2018 comes to a close, it’s worth taking some time to look back on the major events that occurred this year in the big data, data science, and AI space. A lot happened, and we chronicled much of it in the virtual pages of Datanami. We hope you enjoy this retrospective as you prepare for 2019.

Data security continued to be a major topic in 2018, particularly as the rash of big data breaches went on. The IT world got a rude awakening shortly after the 2018 New Year’s celebration, when the Spectre and Meltdown vulnerabilities, which affect most modern computer processors, were publicly disclosed. Apparently, chipmakers took some shortcuts in implementing speculative execution, a technique that boosts performance in modern CPUs. The patches robbed the chips of some performance, but failing to apply them put vulnerable data at risk.

The sophistication of machine learning automation tools increased a good deal during 2018, which is not surprising. Data scientists looking to boost their own productivity, as well as data analysts and power users who wanted to punch above their weight, had a smorgasbord of ML automation tools available from a raft of vendors like Cloudera, DataRobot, H2O.ai, Anaconda, Domino Data Lab, IBM, SAS, and Alteryx, not to mention cloud offerings from AWS, Azure, and GCP and open source kits, including those for Python, R, Java, and Scala.

Data governance isn’t a new concept, but it sometimes seemed that way this year, particularly with the General Data Protection Regulation (GDPR) threatening big sanctions on companies that were careless with data. The growing concerns around data security – along with the difficulty data science teams were facing in just corralling and making sense of data – combined to put data governance on the map in a big way.

The rapid growth of the cloud as a platform for storing and analyzing big data was a huge story for 2018. At the end of the year, AWS had a $27 billion annual run rate and was growing at 46% per year. Azure and GCP were growing even faster, although they weren’t close to matching AWS in the revenue department. Nobody was surprised when AWS unveiled a slew of new ML functionality at its annual re:Invent show at the end of the year.

Let’s face it: Big data, as a defining name and concept, is on its last legs. The new paradigm that’s emerging is being defined with an old-ish phrase, artificial intelligence, but one that’s being infused with new meaning. As the big data bubble shrinks, AI just keeps getting bigger. The smart money folks on Sand Hill Road poured money into AI startups at a rapid clip in 2018, and there’s no sign that it’s abating.

The shortage of data scientists has been talked about for years. But as technology evolved, organizations found that what they really needed was more data engineers. Those who are skilled at using modern tools like Apache Spark to manipulate data and build data pipelines for machine learning and advanced analytics use cases are in even hotter demand than data scientists.

As the early months of 2018 rolled on, the May 25 deadline for GDPR compliance loomed large for chief data officers. Some worried that the new European Union law would kill artificial intelligence, while others thought that it could be beneficial by forcing companies to adopt good data management practices.

If 2017 gave us a small taste of blockchain technology and cryptocurrencies like Bitcoin, then 2018 blew out the doors with a seven-course meal. The possibility of having a secure distributed ledger of transactions fired the imaginations of technologists in all sorts of ways, including making the insurance industry more efficient, helping streamline ML and AI processes, and managing big data. However, as 2018 draws to a close, most of blockchain’s promises remain unfulfilled.

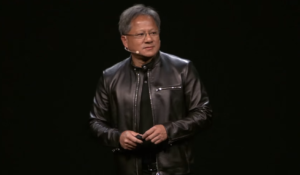

The fortunes of Nvidia continued to soar in 2018 as demand for its GPUs skyrocketed due to expanding deep learning and high performance computing (HPC) workloads. Nvidia CEO Jensen Huang cemented his reputation as a high-tech rock star during his two-and-a-half-hour keynote at the GPU Technology Conference in March. While the company’s stock was down at year’s end (along with the rest of the stock market), there are no signs of a slowdown for Nvidia.

Cloud data warehouses had a giant year as companies warmed up to the prospect of adopting ready-to-use analytic databases that can query petabytes of data at high speed. Amazon Redshift reportedly has 6,500 customers, while the amount of data customers had stored in BigQuery doubled from 2016 to 2017. Snowflake Computing, meanwhile, landed a mammoth $450 million funding round, and cloud analytics will never be the same.

Dogged by technological complexity and the rise of big data environments in the cloud, the Hadoop market continued to disintegrate. The surprise merger of Hortonworks and Cloudera underscored the smaller role that Hadoop is playing in the enterprise as alternatives continue to evolve. The merger led some to speculate that Hadoop is officially dead, but others point out that public clouds can’t deliver hybrid on-premises and multi-cloud data management strategies, which (not coincidentally) is the new Cloudera’s focus.

Tabor Communications held its inaugural Advanced Scale Forum (ASF) event this May in Austin, Texas. The event brought together leaders in HPC, big data, and AI, and featured speakers from leading companies like Gulfstream, Moonshot Research, Accenture, Comcast, Nimbix, The BioTeam, Dell EMC, and others. Tabor also held its first HPC on Wall Street one-day seminar in September, capping a successful year of on-site events. Planning is underway for the second ASF, which will be held in Florida next April.

Driven by the rise of cloud container architectures, Kubernetes emerged as the new “it” technology in 2018. The rush was on to retrofit existing systems, from Hadoop to Kafka, to use Kubernetes for cluster scheduling and management. Much of the activity at the fall Strata Data Conference revolved around vendors expressing support for Kubernetes, which looks set to supplant YARN in next-gen big data cluster architectures.

Fueled by demand to train machine learning models on ever-bigger collections of data, not to mention Nvidia’s meteoric rise, 2018 saw a surge of activity in new silicon development. Google led the way with its tensor processing units (TPUs), while startups like Wave Computing, Groq, Flex Logix, and Graphcore explored alternatives to the GPU, ranging from FPGA-based designs to custom ASICs.

As more companies adopted neural networks and deep learning techniques to automate decision making, they also raised tough questions about exactly how these models reach their decisions. AI’s explainability problem, as it’s been called, has spurred technologists to seek solutions. One of the early efforts was the LIME project, which emerged from the University of Washington. But firms like FICO and SAS discovered that simply exercising the models and reporting their actions could be the way forward.

The improvement in machine learning automation and the shortage of classically trained data scientists combined to turbo-charge the rise of the citizen data science movement in 2018. The trend has the backing of Gartner, which predicts that citizen data science positions will grow five times faster than jobs for full-fledged data scientists. Being a citizen never felt so good!

As more companies adopt machine learning techniques to automate decision making, the thorny issue of AI ethics keeps cropping up. Plenty of abuses of data and AI have been documented, which led some to observe that the data science community needs to take charge and build ethics into its work. It’s also renewed calls to find ways to use big data tech to actually help people, as opposed to just making more money.

Despite all the talk about getting ahead of the competition with AI and big data science, the fact remains that few organizations are actually having rip-roaring success with it yet, and the use cases remain narrow. Study after study shows that, outside of tech giants, Web startups, and Fortune 100 firms with billion-dollar IT budgets, there just aren’t that many examples of companies hitting it out of the park. The sage advice for succeeding at AI is the same as it was for big data: start small and grow smartly.

It was a good year for startups raising money. Among those closing rounds were Dremio ($25 million Series B); InfluxData ($35 million Series C); Scality ($60 million Series E); ThoughtSpot ($145 million Series D); MemSQL ($30 million Series D); Immuta ($20 million Series B); CrateDB ($11 million Series A); Tamr ($18 million Series D); Cloudian ($94 million Series E); StreamSets ($24 million Series C); DataRobot ($100 million Series D); and Looker ($100 million Series E).

We also saw some companies going public in this sector. Among those having IPOs were Pivotal (raised $555 million) and Elastic (raised $252 million). The acquisitions desk was also busy. Infogix bought Lavastorm in March; Cloudian bought Infinity Storage in March; Actian was bought by HCL Technologies and private equity firm Sumeru Equity Partners in April; Microsoft bought Bonsai in June; Qlik bought Podium Data in July; MariaDB bought Clustrix in September; Cloudera and Hortonworks agreed to merge in October; IBM agreed to buy Red Hat in October; and Talend bought Stitch in November.

We saw some big changes in strategy for key players. DataTorrent threw in the towel with its streaming data platform. Teradata announced it would shift its focus from selling analytics to providing answers. Cloudera announced some strategic shifts following its planned merger with Hortonworks, and Chris Lynch promised to scale AtScale after being named CEO in June.

2018 is almost over, but it’s not too late to make news. If you have a hot tip, please share it by contacting us.
