Can Markov Logic Take Machine Learning to the Next Level?

(agsandrew/Shutterstock)

Advances in machine learning, including deep learning, have propelled artificial intelligence (AI) into the public conscience and forced executives to create new business plans based on data. However, the scarcity of highly trained data scientists has stymied many machine learning implementations, potentially blocking future AI development. Now a group of academics and technologists say the emerging fields of Markov Logic and probabilistic programming could lower the bar for implementing machine learning.

Markov Logic is a language first described in by two professors in the University of Washington’s Department of Computer Science and Engineering, Pedro Domingos and Matthew Richardson, in their seminal 2006 paper “Markov Logic Networks.” The work is based on mathematical discoveries made by Andrey Markov Jr., the Soviet mathematician who died in 1979 (his father, who had the same name, is associated with a related field, dubbed Markov chains).

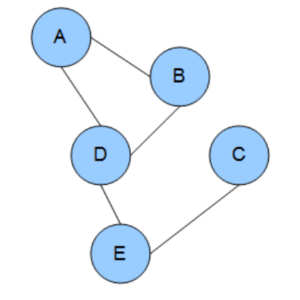

Markov Logic implements the concept of a Markov Random Field (also called a Markov Logic Field), which is a set of random variables that is said to have a Markov Property. A Markov Property, in turn, is described (by Wikipedia) as a stochastic process where “the conditional probability distribution of future states of the process [conditional on both past and present states] depends only upon the present state.”

How does this relatively obscure mathematical concept get translated into the world of data management and AI? That’s arguably above the paygrade for Datanami. Luckily for us, Professor Domingos recently put it into plain English during an interview following his keynote address at the ACM’s recent SIGMOD/POD conference in Houston, Texas.

Too Many Joins

“There’s a deep problem with applying deep learning, and in fact all traditional machine learning, to databases, which is that they make these assumptions that really don’t apply,” Domingos says. “One is that all the data comes from one table. So in typical deep learning and statistical learning, every object is one row and every variable is one column, and it’s just one table.”

Even if the source of the data is unstructured, such as text from a patient medical record or pixels in an image, the data is first organized into columns and rows before the data mining algorithms are set lose upon it.

However, this presents an issue when you consider the fact that, in practically all of data science, the useful insights are derived by combining different data sets and then using the power of statistics to spot correlations and anomalies that exist among them. According to Domingos, a big problem arises when data scientists try to join two tables together into a single table to run machine learning algorithms upon it.

“This is exactly what people try to do. In 99% of applications that’s what they do,” he says of SQL joins. “But it’s a very problematic thing to do because, number one, you lose a lot of information that way. Or you get a join that’s so huge that it’s unmanageable. And the problem is that, when you join two tables, in the resulting tables, parts of the rows of that table are copies of another part. If you join A with B and C then you get two copies of A.

“If you think of this from the point of view of the statistics that machine learning uses, this completely messes up the statistics because it changes the number of times that different things happen,” he continues. “People do it, but it’s pretty bad and doesn’t work very well. It’s also very time consuming and ad hoc. So there is a better way, and this is what I was talking about.”

Higher-Level Language

That better way, of course, is Markov Logic, which is essentially a high-level language that can be applied to certain types of data that are highly connected. As Domingos explains it, Markov Logic exploits higher level structures that exist in data sets, and does a better job of detecting patterns and conducting inference than the nearly structure-less tables that most traditional machine learning approaches use.

“Markov Logic is one language to do this,” he says. “It basically consists of defining features or learning features from data as formulas in first-order logic. And then the difference [from traditional machine learning] is that the features have weights. Just as in a perceptron you have a bunch of features with weights, those features are typically Boolean. Here, the features are in first-order logic, so they are naturally defined over multiple tables, because predicates can share variables and so on. Then you can learn features from data, and learn weights from them. And then the features and the weights together define a probability distribution over possible databases, over possible states of the world where the state of the world is described by the database.”

That implementation of the Markov Property is extremely powerful, Domingos says. “You could argue that it’s the most powerful representation ever invented in all of computer science because it just assumes all of the things that can be done in first-order logic, which is pretty much all of computer science, and all of the things that can be done in probabilistic models, including Bayesian Networks. So on the probabilistic and statistical side, it assumes most of the models that people use in machine learning so it’s a very powerful language indeed.”

Real World Impact

This exploitation of the Markov Property isn’t necessarily new, as Domingos and Richardson have been working on this for more than 10 years. All of the major universities have researchers working in this field, which is sometimes called probabilistic programming; Domingos mentioned Stuart Russell at Cal Berkeley, Daphne Koller at Stanford, and Josh Tenenbaum at MIT.

Apple’s digital assistant, Siri, is arguably the most successful implementation of a Markov Logic Network

The topic is increasingly being taught in higher-level university courses in computer science and data science. Major tech companies are also utilizing Markov Logic and probabilistic programing. The DARPA project from which Apple‘s Siri emerged, Domingos says, utilized Markov Logic as the “core representation” of the program.

“In the foreseeable future, it just becomes a standard part of the curriculum,” Domingos says. “It’s becoming part of the mainstream.”

Domingos is involved with one open source project based on Markov Logic called Alchemy. The package of software from Alchemy includes a series of machine learning algorithms that automate tasks like collective classification, link prediction, entity resolution, social network modeling, and information extraction.

It’s very easy to get started with Alchmey, according to Domingos, who says people often download Alchemy and start writing their first formulas before he reaches his first break during presentations. “Essentially you download it, you write down your model or your knowledge or whatever assumptions your making in first-order logic,” he says. “And then you learn weights, and now you have a machine learning model and you can use it to answer any question you want.”

Simplified ML for AI

It’s all about automating the drudge work of machine learning and letting data scientists and developers focus their time on the part that matters, which is developing the model and developing the knowledge.

“In a way, what we’ve done is we’ve taken a lot of the machinery, we’ve put it under the hood, and now people can just worry about the steering wheel and the pedals and driving the car as opposed to actually building the car before they can drive it,” he says.

Whether or not Markov Logic really catches on has yet to be seen. As Domingos says, there are many universities that are already teaching this approach and corporations that are actively using it, so that would seem to indicate that it’s already reached a certain base level of acceptance.

Domingos admits that creating a Markov Logic Network isn’t beginner-level stuff. “In fairness you have to remember, people are still struggling with the very basics of machine learning,” he says. It’s also computationally expensive, since the hardware is querying the entire probability distribution of a database rather than a single table.

However, the productivity advantages of Markov Logic may be too great to ignore. A deep learning machine that takes tens of thousands of lines of code in a traditional language could be expressed with just a few Markov Logic formulas, Domingos says.

“It’s not completely push-button. Markov Logic is not at that stage. There’s still the usual playing around with things you have to do,” he says. “But your productivity and how far you can get is just at a different level.”

Data scientists are looking for a new math to take AI and machine learning to the next level. Might they have what they’re looking for with Markov Logic? Domingos says it’s possible.

“Often in science and technology, the big progress happens when people define a new language to solve problems,” he says. “Imagine what databases would be without relational calculus? There would be no electrical engineering and no quantum mechanics without complex numbers, and there would be no physics without calculus. So really to crack AI, we need a new language. We need a new kind of math.

“That’s exactly what this is,” he continues, “a new kind of math that’s specifically designed to make solving AI problems easy.”

Related Items:

Why Cracking the ‘Brain Code’ Is Our Best Chance for True AI

Machine Learning, Deep Learning, and AI: What’s the Difference?