Attunity Relishes Role as Connective Tissue for Big Data

In the big data world, there are a few patterns that repeat themselves over and over again. One of those is the need to move data from one place to another. For data integration tool maker Attunity, that pattern has provided ample opportunities for its changed data capture (CDC) technology.

CDC emerged just after the turn of the century, at least five years before Yahoo started filling the first Hadoop cluster. Back in those days, enterprises built data warehouses and stocked them with the latest data from core business applications running atop IBM DB2, Microsoft SQL Server, Sybase (now owned by SAP), and Oracle’s eponymous database.

At first, bulk extracts were the norm. But as CDC technology filtered into the marketplace, enterprises realized that automatically detecting changes recorded in the database’s log, and then replicating only the changed parts, not the whole table, constituted a better way.
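The difference between a bulk extract and log-based replication can be illustrated with a toy model. The sketch below is not Attunity's implementation: the `ChangeEvent` shape and the `apply_changes` function are hypothetical, and real database logs are binary and vendor-specific. It only shows the principle that replaying a short list of changes keeps a copy in sync without re-copying every row.

```python
from typing import Any, Dict, List, Optional, Tuple

# Hypothetical change-log entry: (operation, primary key, new row values).
# This is a toy stand-in for a real, vendor-specific transaction log.
ChangeEvent = Tuple[str, int, Optional[Dict[str, Any]]]


def apply_changes(target: Dict[int, Dict[str, Any]], log: List[ChangeEvent]) -> int:
    """Replay log entries against the target copy; return the number of entries applied."""
    for op, key, row in log:
        if op in ("insert", "update"):
            target[key] = dict(row)
        elif op == "delete":
            target.pop(key, None)
        else:
            raise ValueError(f"unknown operation: {op}")
    return len(log)


# The source table, and a target populated once by an initial bulk load.
source = {1: {"name": "Ada"}, 2: {"name": "Bob"}, 3: {"name": "Cy"}}
target = {key: dict(row) for key, row in source.items()}

# Three changes happen on the source and are recorded in the log.
source[2] = {"name": "Robert"}
del source[3]
source[4] = {"name": "Dee"}
log: List[ChangeEvent] = [
    ("update", 2, {"name": "Robert"}),
    ("delete", 3, None),
    ("insert", 4, {"name": "Dee"}),
]

# Only the three logged changes are shipped, not the whole table.
applied = apply_changes(target, log)
print(applied, target == source)
```

In this sketch the cost of keeping the target current scales with the number of changes rather than the size of the table, which is the advantage the paragraph above describes.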

Over the years, the data ecosystem has evolved in dramatic fashion. While relational data warehouses are still widely used, enterprises have rushed to embrace Apache Hadoop-style storage and processing, whereby raw data is loaded into a distributed file system and processed at run-time. This “schema on read” approach has flipped the script, so to speak, on the highly structured nature of the old data warehousing world. But it didn’t eliminate the need to move data in carefully orchestrated time slices.

Apache Sqoop may be widely used to load bulk data into Hadoop, but CDC technology has retained a foothold when it comes to loading data from relational databases, which remain the go-to platforms for operational systems in the Fortune 500. Getting data out of those databases and into analytic systems is a booming business at the moment.

“We’re best known for real-time movement. CDC is the heart of what we do,” says Attunity’s vice president of product management and marketing Dan Potter. “We make our money by unlocking the data from those enterprise systems.”

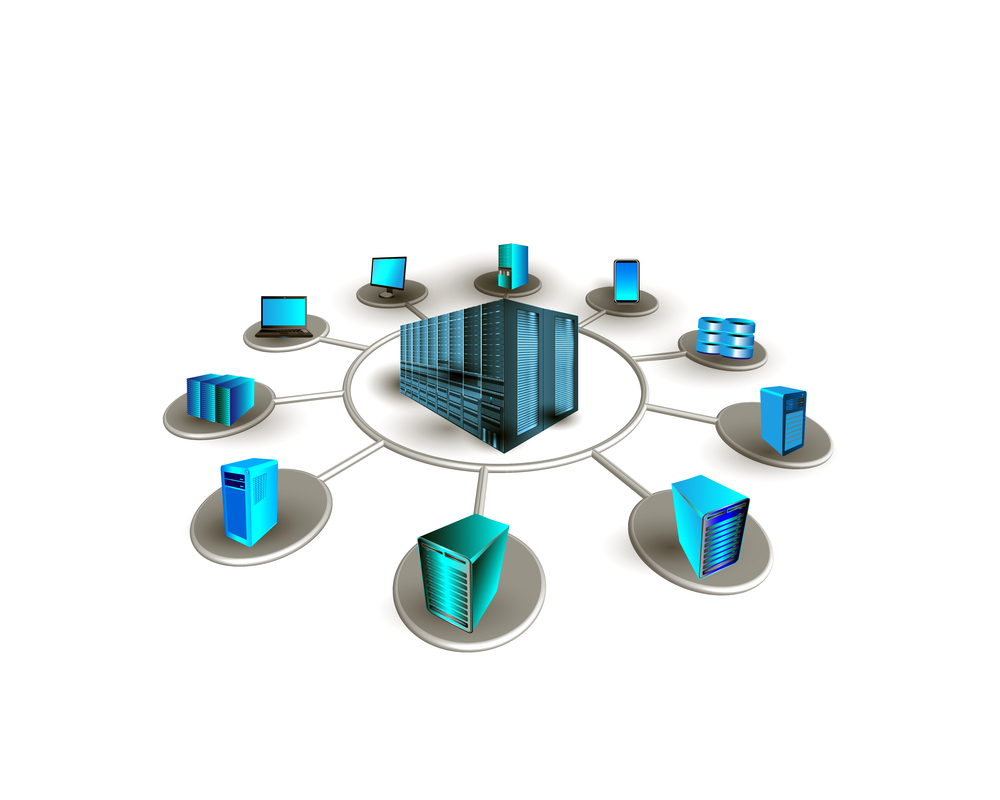

Accessing data from source systems remains a key part of analytic projects (TechnoVectors/Shutterstock)

Hadoop remains a popular target for data scraped off operational systems with CDC, Potter says. But cloud data repositories and their associated analytic systems, such as Google Cloud Platform’s BigQuery and Amazon Web Services’ Redshift, are quickly becoming popular targets for the data generated from traditional line-of-business apps.

“Snowflake is hot,” Potter says, referring to another cloud-hosted MPP-style database. “In the last six months, we’ve seen more data movement to the cloud and we’ve seen a lot more interest in S3 as the repository. We’ll continue to make investments around that.”

Real-time streaming analytics is also providing a fertile bed for CDC technology. While one might think that modern data message buses like Apache Kafka and Amazon Kinesis would in some ways compete with 15-year-old CDC technology, the reality is that they’re complementary to each other, says Itamar Ankorion, the chief marketing officer for the Burlington, Massachusetts company.

“What we do with CDC is turn a database into a live stream. It broadcasts from the database whenever something changes,” he tells Datanami at the recent Strata Data Conference. “If you’re adopting streaming architectures, it would only make sense that you’re feeding data into it as a stream.”

Potter concurs. “Kafka is perfect for CDC,” he says. “People are starting to think about real-time data movement. The best way to get real-time data movement is through CDC. So we generate these events in real time and we’re allowing enterprise transaction systems to participate in real-time streams.”
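What “turning a database into a live stream” might look like can be sketched in a few lines. Everything here is an assumption made for illustration: the envelope fields (`table`, `seq`, `op`, `key`, `after`) are hypothetical and are not Attunity Replicate's message format, and an in-memory `deque` stands in for a Kafka topic or Kinesis stream so that no broker is needed to run it.

```python
import json
from collections import deque
from typing import Any, Deque, Dict, Iterable, Iterator


def to_messages(table: str, changes: Iterable[Dict[str, Any]]) -> Iterator[str]:
    """Wrap each row-level change in a JSON envelope, one message per change."""
    for seq, change in enumerate(changes, start=1):
        envelope = {
            "table": table,
            "seq": seq,  # ordering within this toy stream
            "op": change["op"],
            "key": change["key"],
            "after": change.get("after"),  # row image after the change, if any
        }
        yield json.dumps(envelope, sort_keys=True)


# Hypothetical changes captured from an "orders" table.
changes = [
    {"op": "insert", "key": 101, "after": {"status": "new"}},
    {"op": "insert", "key": 102, "after": {"status": "new"}},
    {"op": "update", "key": 101, "after": {"status": "shipped"}},
    {"op": "delete", "key": 101},
]

# Producer side: publish every change as soon as it is captured.
topic: Deque[str] = deque()  # stand-in for a message-bus topic
for message in to_messages("orders", changes):
    topic.append(message)

# Consumer side: read messages in order and keep the latest state per key.
latest: Dict[int, Any] = {}
while topic:
    event = json.loads(topic.popleft())
    if event["op"] == "delete":
        latest.pop(event["key"], None)
    else:
        latest[event["key"]] = event["after"]

print(latest)
```

A downstream consumer in this sketch never queries the source database; it sees only the ordered sequence of change messages, which is why CDC and a message bus fit together rather than compete.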

The company recently shipped a new version of its flagship CDC product, Attunity Replicate version 6.0, which brings several new features that open up the product to more big data use cases. Those include performance optimizations for Hadoop; expanded cloud data integration for AWS, Azure, and Snowflake; and better integration with Kafka, Kinesis, Azure Event Hubs, and MapR Streams. It also gets new streaming metadata integration support for JSON and Avro formats, a new central repository for metadata storage and management, and support for microservices via a new REST API.

Attunity, which trades on the NASDAQ National Market under the ticker symbol ATTU, also unveiled Attunity Compose for Hive, an ETL tool that enables users to better align relational data with the data formats that the Hadoop-based SQL analytics engine expects to see.

The update takes advantage of the new ACID merge feature that Hortonworks built into Hive with the latest version 2.6 release of its Hortonworks Data Platform (HDP) offering. Potter says that feature will help give a big data customer more confidence that the data they’re loading from a relational database into Hive is clean and ready for processing.

“If I’m going from an Oracle system, I know the schema and structure of that Oracle system. I can move that over and automatically create that same schema and structure in Hive,” Potter says. “There’s no coding at all involved. We pick up any of the changes on the source system, like table changes or other things, and we automatically accommodate that.”
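To make the idea of carrying a source schema over to Hive concrete, here is a minimal sketch that builds a Hive `CREATE TABLE` statement from a list of Oracle columns. It is not how Attunity Compose for Hive works internally: the `hive_ddl` function is hypothetical, and the three-entry type mapping is a deliberately simplified assumption rather than a complete or authoritative conversion table.

```python
from typing import Dict, List, Tuple

# Simplified, illustrative type mapping (an assumption, not Attunity's rules).
ORACLE_TO_HIVE: Dict[str, str] = {
    "VARCHAR2": "STRING",
    "NUMBER": "DECIMAL(38,10)",
    "DATE": "TIMESTAMP",
}


def hive_ddl(table: str, columns: List[Tuple[str, str]]) -> str:
    """Build a Hive CREATE TABLE statement from (column name, Oracle type) pairs."""
    parts = []
    for name, oracle_type in columns:
        hive_type = ORACLE_TO_HIVE.get(oracle_type.upper())
        if hive_type is None:
            # Fail loudly instead of guessing a type for an unmapped column.
            raise ValueError(f"no mapping for Oracle type {oracle_type!r}")
        parts.append(f"  {name.lower()} {hive_type}")
    body = ",\n".join(parts)
    return f"CREATE TABLE IF NOT EXISTS {table.lower()} (\n{body}\n) STORED AS ORC"


# Hypothetical source table description.
ddl = hive_ddl(
    "ORDERS",
    [("ORDER_ID", "NUMBER"), ("STATUS", "VARCHAR2"), ("CREATED", "DATE")],
)
print(ddl)
```

Rerunning a generator like this whenever the source table changes is one simple way to picture the “we automatically accommodate that” behavior Potter describes, though a real tool would also have to alter existing tables and migrate data.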

Attunity likes its neutral position when it comes to accessing data. While the targets may change, for Attunity the goal is all about getting data out of host systems, including older ones that are harder to access, like IBM mainframes, IBM i servers, NonStop, VAX, and HP3000 systems, not to mention newer ones that nevertheless store data in unique ways. “We’re Switzerland,” Potter says. “We’re independent. It’s easy for us to work with Oracle’s competitors, like SAP.”

Lately, the architectural discussions have trended away from using Hadoop and toward using cloud systems. Attunity execs monitor those discussions and aren’t afraid to formulate a position. “I don’t think [Hadoop] is dead. I think there’s lots of evolution,” Potter says. “Hadoop is the best thing that happened to us because those projects are much larger. They’re transformative initiatives.”

Hadoop was important for the industry because it spurred a wave of innovation. “When Attunity started doing replications, it was ‘Let’s move from Oracle to SQL Server, point to point.’ It’s not that interesting,” Potter says. “Now it’s about ‘Let’s move a big set of our enterprise data into this data lake and start doing meaningful things with it.’ So there’s a lot more complexity, a lot larger data set, and it’s a lot more strategic to organizations.”
