How PRGX Is Making Its AS/400-to-Hadoop Migration Work

(2630ben/Shutterstock)

Many companies are using big data technologies to build new applications that can take advantage of emerging data streams, like sensor data or social media. It’s not often you see established back-office applications being migrated to Hadoop, but that’s just what PRGX is doing with a trusty old AS/400 application.

PRGX (NASDAQ: PRGX) works in the obscure field of accounts payable recovery audit services. Three-quarters of the top 20 retailers and grocery store chains in the world pay the 46-year-old Atlanta company to find and recover $1 billion annually. Its specialty is wading deep into the retail supply chain to find discounts or deals on goods that were promised to stores by manufacturers and distributors, but never actually delivered, often as the result of clerical or shipping errors.

As you can imagine, it’s not easy to trace those missed deals and discounts, which means pinpointing clerical and shipping errors that were made months earlier and thousands of miles away. When PRGX was founded in 1970, an audit began with a trip to the customer’s site to open physical file cabinets and leaf through reams of paper documents, a hugely expensive and time-consuming task.

PRGX claims it invented the field of recovery audit in 1970 (feng-yu/Shutterstock)

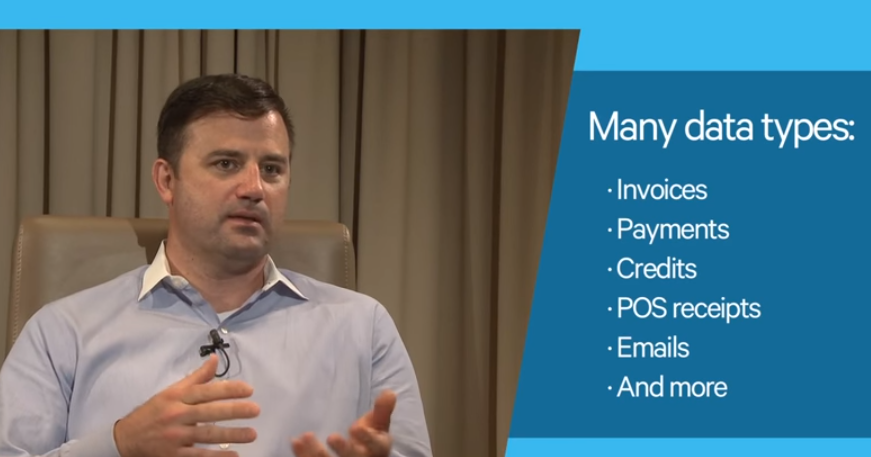

Thankfully, the process is largely electronic today. It typically starts with a big data dump of all the pertinent records from the client’s ERP system, including invoices, purchase orders, payment receipts, contracts, agreements, and emails. Even detailed point-of-sale (POS) data is collected and reviewed for signs of missed deals on 12-packs of Coke and boxes of Pampers.

PRGX’s Data Services division does the painstaking work of parsing all those documents and picking out the pertinent bits, which it then hands to the audit division to perform the actual recovery. Finding proof of a missed deal is a bit like finding a needle in a haystack. To find that needle, PRGX uses a mix of technologies and techniques, including ETL scripts, data cleansing tasks, keyword searches, and SQL queries. The company has been a customer of ETL automation vendor Talend (NASDAQ: TLND) for about seven years.
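The core matching step behind a missed-deal finding can be illustrated with a toy example. The sketch below is not PRGX’s code; it assumes a hypothetical, already-cleansed extract in which each promised deal is a per-unit discount keyed by vendor and SKU, and each invoice line records the discount actually applied. The class names, field names, vendor, and SKU values are all invented for illustration.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class Deal:
    """A per-unit discount a vendor promised on an item (hypothetical schema)."""
    vendor: str
    sku: str
    discount_per_unit: Decimal


@dataclass(frozen=True)
class InvoiceLine:
    """One invoice line as extracted from an ERP dump (hypothetical schema)."""
    invoice_id: str
    vendor: str
    sku: str
    units: int
    discount_applied_per_unit: Decimal


def find_missed_discounts(deals, invoice_lines):
    """Return (invoice_id, sku, recoverable_amount) for lines billed without the full promised discount."""
    # Index promised deals by (vendor, sku) so each invoice line is a single lookup.
    promised = {(d.vendor, d.sku): d.discount_per_unit for d in deals}
    claims = []
    for line in invoice_lines:
        expected = promised.get((line.vendor, line.sku))
        if expected is None:
            continue  # no deal on record for this vendor/item pair
        shortfall = expected - line.discount_applied_per_unit
        if shortfall > 0:
            claims.append((line.invoice_id, line.sku, shortfall * line.units))
    return claims


# Hypothetical data: one invoice missed the promised $0.50/unit deal, one applied it.
deals = [Deal("AcmeBev", "COKE-12PK", Decimal("0.50"))]
lines = [
    InvoiceLine("INV-1", "AcmeBev", "COKE-12PK", 1000, Decimal("0.00")),
    InvoiceLine("INV-2", "AcmeBev", "COKE-12PK", 200, Decimal("0.50")),
]
print(find_missed_discounts(deals, lines))  # [('INV-1', 'COKE-12PK', Decimal('500.00'))]
```

`Decimal` is used instead of floats so currency amounts are exact. In a real audit the hard part is the upstream parsing and cleansing that produces tables this tidy, which is the work the article attributes to the Data Services division.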

Even before then, PRGX had standardized its recovery audit processes on an IBM AS/400 minicomputer. First launched in 1988, the AS/400 (since renamed eServer iSeries, System i, and now simply IBM i for Power Systems) holds a distinguished place in the annals of business systems and traces its lineage directly back to IBM’s original System/3, launched before the advent of PCs in the 1970s. Over 100,000 of the big iron systems are still running around the world, many of them pumping 1970s-era RPG code at breakneck speeds on IBM’s latest 64-bit Power processors. Most customers are quite loyal to the machine (which also runs Java, C++, PHP, Python, and Node.js code) thanks to its renowned simplicity, reliability, and security.

IBM says about 100,000 IBM i servers (aka AS/400, iSeries, System i) are still running around the world

While today’s Power Systems servers can scale beyond even the legendary System z mainframe, PRGX ran into scalability limits with its older iSeries-class server. As the documents and data involved in PRGX’s business shifted from largely structured to largely unstructured, the AS/400 and its integrated relational database (dubbed DB2 for i) proved to be a poor match for the work.

According to PRGX, it took an average of 140 hours, or nearly six days, to complete a batch job on the AS/400 system. Those long batch jobs—which involved enriching and purifying records, stripping out headers, and generally flattening out data contained in business records and millions of emails—really hamstrung the data services group, says Jonathon Whitton, director of data services for PRGX.

“We never have enough time and we never have enough people,” Whitton tells Datanami. “In the past, where things were running a long time…if it failed in the middle, we just have to restart it, and fix whatever was wrong with the data.”
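The header-stripping and flattening work Whitton describes can be sketched for a single email with Python’s standard `email` package. This is an illustrative assumption, not PRGX’s pipeline: the choice of output fields and the rule that drops quoted reply lines (lines starting with `>`) are hypothetical cleansing choices, and the sample message is invented.

```python
from email import message_from_string
from email.policy import default


def flatten_email(raw: str) -> dict:
    """Flatten one raw RFC 5322 message into a single record (field choice is hypothetical)."""
    msg = message_from_string(raw, policy=default)
    # Pick the plain-text body; a bare text/plain message is its own body.
    part = msg.get_body(preferencelist=("plain",))
    body = part.get_content() if part is not None else ""
    # Assumed cleansing rule: drop blank and quoted reply lines so only new text remains.
    kept = [ln.strip() for ln in body.splitlines()
            if ln.strip() and not ln.lstrip().startswith(">")]
    return {
        "from": str(msg.get("From", "")),
        "subject": str(msg.get("Subject", "")),
        "body": " ".join(kept),
    }


# Invented sample message for illustration.
raw = (
    "From: buyer@example.com\n"
    "To: vendor@example.com\n"
    "Subject: Q3 promo allowance\n"
    "\n"
    "Please apply the $0.50 per case allowance.\n"
    "> Earlier thread text\n"
    "Thanks\n"
)
print(flatten_email(raw))
```

Each message becomes one flat row, which is the shape that SQL-style tools such as Hive expect; run over millions of emails, this per-record work is what made the AS/400 batch jobs so long.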

Several years back, Whitton and his colleagues at PRGX began exploring ways to speed up the batch jobs that were running on the AS/400, as well as other jobs that had already been migrated to a sprawling array of hundreds of Microsoft (NASDAQ: MSFT) SQL Server systems.

The search eventually led them to Hadoop, the open source distributed computing platform. PRGX selected the CDH distribution from Hadoop pioneer Cloudera and rewrote, by hand, many of the old AS/400 data-transformation jobs, originally written in RPG, as HiveQL and Pig jobs that run on MapReduce.
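For readers unfamiliar with that first-generation stack, a HiveQL aggregation such as `SELECT vendor, SUM(amount_cents) FROM invoices GROUP BY vendor` is compiled by Hive into MapReduce work. The pure-Python sketch below simulates the map, shuffle, and reduce flow on toy data. The table, column names, and vendors are hypothetical, and real Hive adds optimizations, such as map-side partial aggregation, that are omitted here.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, List, Tuple

# Hypothetical invoice row: (vendor, amount in cents).
Row = Tuple[str, int]


def map_phase(split: Iterable[Row]) -> Iterator[Tuple[str, int]]:
    # A mapper reads one input split and emits (GROUP BY key, value to aggregate).
    for vendor, amount_cents in split:
        yield vendor, amount_cents


def shuffle(pairs: Iterable[Tuple[str, int]]) -> Dict[str, List[int]]:
    # The framework groups mapper output so every value for a key reaches one reducer.
    groups: Dict[str, List[int]] = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(groups: Dict[str, List[int]]) -> Dict[str, int]:
    # A reducer applies the aggregate function (SUM here) to one key's values.
    return {key: sum(values) for key, values in groups.items()}


def group_by_sum(splits: Iterable[Iterable[Row]]) -> Dict[str, int]:
    # On a cluster the mappers run in parallel, one per split; here they run in sequence.
    pairs = (pair for split in splits for pair in map_phase(split))
    return reduce_phase(shuffle(pairs))


splits = [
    [("AcmeBev", 100), ("DiaperCo", 50)],  # e.g. one HDFS block
    [("AcmeBev", 25)],                     # e.g. another block on a different data node
]
print(group_by_sum(splits))  # {'AcmeBev': 125, 'DiaperCo': 50}
```

Because each mapper touches only its own split, adding data nodes spreads the map work out, which is why even a modest cluster can beat a single large server on this kind of batch job.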

Jonathon Whitton, director of data services for PRGX, explains the data types involved in his company’s operations in a video posted to Talend’s website.

That was enough to give PRGX a major speedup. Today, even with a rather diminutive Hadoop cluster of 17 data nodes, the batch jobs that averaged 140 hours on the AS/400 finish in just six to eight hours. Overall, the company has realized an average 10x speedup, with some jobs completing 45 times faster, according to PRGX.
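A quick calculation puts those figures side by side. The sketch assumes that the 140-hour average PRGX reported for AS/400 batch jobs is the "before" figure for the same jobs that now take six to eight hours; the article does not state that pairing explicitly.

```python
def speedup(before_hours: float, after_hours: float) -> float:
    """How many times faster a job runs: old runtime divided by new runtime."""
    return before_hours / after_hours


# Assumption: the 140-hour AS/400 average is the "before" figure for the jobs
# that now take six to eight hours on the 17-node Hadoop cluster.
for after_hours in (6, 8):
    print(f"140 h -> {after_hours} h: {speedup(140, after_hours):.1f}x faster")
# 140 h -> 6 h: 23.3x faster
# 140 h -> 8 h: 17.5x faster
```

Under that assumption, the longest batch jobs improved by roughly 17x to 23x, above the 10x company-wide average and well short of the 45x best case, so the gains evidently varied considerably from job to job.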

That frees PRGX’s Data Services team to be much more flexible, Whitton says. “Now because things run so much faster, if we have a change or re-run request, things run overnight or within the work day,” he says. “So re-work has gone much quicker as compared to longer running processes.”

The $138-million company can also keep much more data online than it could before. Instead of loading data from tapes into the AS/400 system, the company is poised to keep up to three years’ worth of its clients’ data live on disk in the Hadoop cluster, which holds about 2.5PB. “That gives our advisory team the ability to go and look at a lot more data much more quickly, which gives them insights really fast,” Whitton says.

PRGX’s first-gen Hadoop system relied heavily on MapReduce, via Hive, as the underlying computational framework. Today, the company is working to migrate those hand-coded HiveQL jobs to Spark. Instead of embarking on hand-coded Scala, however, the company is looking to Talend and its code-generation capabilities for Spark to give its developers a productivity boost.

“The benefit with Talend is that when we want to change it…when the next big thing comes out, we don’t have to re-learn or hand code all the projects. We can just configure it,” Whitton says. “We don’t have that fully implemented right now, because we’re still transitioning stuff across. But that’s where we see the big benefit.”

The company’s AS/400 system is still chugging along, handling one-third of the business for one of its largest customers. Getting off the ’400 can be hard. But moving forward, the company is better positioned, skill-wise, to ride the current Hadoop wave than it would be trying to find developers with skills in the older IBM system.

“Granted there could have been some re-engineering that could have gone on with the old code on the AS/400,” Whitton offers. “But with the AS/400, we have limited skills in our environment. Most of our business analysts are comfortable with SQL, not RPG or COBOL. For us, it was definitely a better fit.”
