BlueTalon Delivers Fine-Grain Protection of HDFS Data

BlueTalon today announced that its big data security software now supports the enforcement of fine-grained authorization policies and masking of HDFS data. This will enable customers to control access to Hadoop data using the same tool they use to control access to enterprise data warehouses and relational databases, the company says.

One of the problems with implementing data-centric security in a big data world is the fact that data lives in multiple places. While many organizations are building big data lakes within Hadoop, those lakes often supplement existing data warehouse environments. And when one considers how many databases and repositories enterprises run for operational systems, from Oracle (ORCL) and MySQL to IBM (IBM) DB2 and Microsoft (MSFT) SQL Server, enforcing data access policies through multiple vendors’ database-specific choke points can quickly get out of hand.

BlueTalon CEO Eric Tilenius likens this state of affairs to “security chaos.” “If you literally only have one database company’s software and you want to get an access control system from them, you might be able to get away with it,” Tilenius tells Datanami. “But when you say ‘I’ve got Cloudera and IBM Netezza and Hortonworks and Postgres and Greenplum,’ the minute you do that, [you fall into] what Gartner calls security chaos.”

Gartner recently issued a report advising chief information security officers (CISOs) to not treat big data security in isolation, but instead to require access control policies that encompass all data silos. That seems like good advice, considering the tsunami of data that we’re all under and the corresponding surge in the number of data repositories we’re all managing.

That advice is also common sense, especially considering the damage being done by hackers and cyber criminals, who are often backed by national governments and who are getting quite good at bypassing the CISO’s controls and exfiltrating huge amounts of data from government and corporate repositories.

The Bigger the Data, the Harder It Falls

The list of organizations hit by massive data breaches, including Home Depot (HD), Anthem (ANTM), the Office of Personnel Management, JPMorgan Chase (JPM), and eBay (EBAY), seems to get bigger by the day. According to Tilenius, the total cost borne by businesses due to cyber-attacks last year was $315 billion. One in six businesses say they’ve been the victim of a cyber-attack, and the cost of each breach has risen to $217 per record, he says.

And while an informed guess would suggest that Hadoop was not involved in the majority of these breaches, or even many at all, the evidence says the big lovable elephant doesn’t get off scot-free either.

“I’m very aware of breaches that have happened with major corporations directly from Hadoop,” Tilenius says. “I was just on a phone call last week with a major corporation that had a massive breach from their Hadoop database.”

The core problem in nearly all of the massive data breaches is compromised user accounts, Tilenius says. “The vector of choice is someone who’s coming in and taking over an account,” he says. “For example, in the Anthem breach, what was interesting is there were only five accounts compromised that allowed them to take out 80 million records. It’s almost impossible to imagine a scenario where an employee would need that level of access, but that’s what they had.”

Hadoop Roadblock

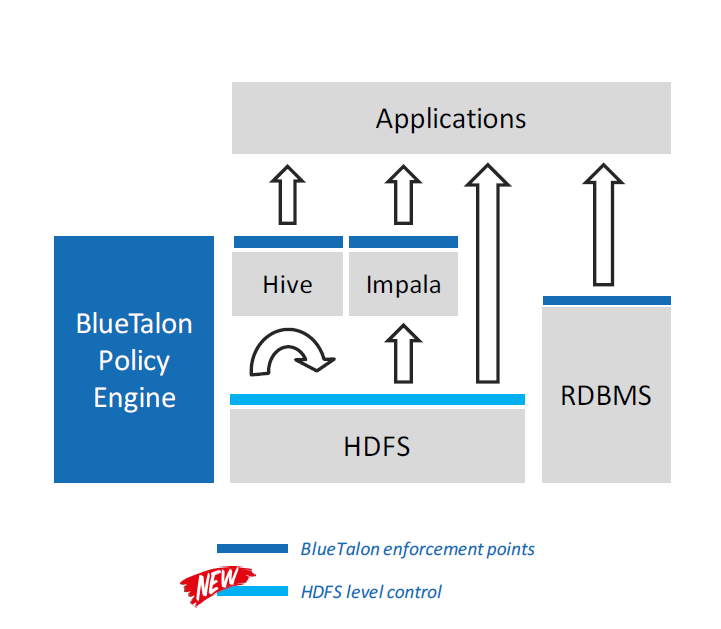

The 30,000-foot view of how BlueTalon works

The economics of Hadoop-based storage and possibilities of big data analytics are such that enterprises cannot ignore them. Having a big data strategy—often (though not always) centered on Hadoop—is quickly moving on from “competitive differentiator” to “keeping up with the Joneses.”

But somewhere along this road to big data bliss, the lack of big data security put up a serious roadblock. “This is impeding Hadoop adoption for a number of companies, where they say, ‘We don’t have the right controls,’” Tilenius says.

The Hadoop distributors are aware of the problem and are taking steps to fix it. For authorization, Cloudera has Apache Sentry and Hortonworks has Apache Ranger. Oracle has its own set of solutions, and encryption is available for data at rest in Hadoop. And just yesterday, Cloudera unveiled RecordService, which provides fine-grain, data-centric access control and masking of data in HDFS.

But Tilenius maintains that trying to implement data-centric security from multiple point-level products is not going to work in the long run. “If all you’re doing is using Hadoop to analyze log files that are low risk then maybe it’s less of an issue,” he says. “But when you have Hadoop and RDBMSs mixed together, the minute you have a heterogeneous system, you lose control over your data.”

Previously, BlueTalon’s software only provided data-centric protection of data as it sat in Hive or Impala. Today it expanded that protection to all data in the Hadoop Distributed File System.

The software works by instituting control points in Hadoop (or other databases) that cannot be bypassed. If a user attempts to access data for which he has not been authorized, he will be denied access at the control point. This level of protection does not come free—the software adds a processing penalty of less than 10 percent, Tilenius says. In addition to enforcement and masking, the software also provides auditing capabilities.
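To make the control-point idea concrete, here is a minimal Python sketch of a policy check that denies unauthorized reads, masks sensitive fields, and records an audit entry. It is an illustration only and does not reflect BlueTalon's actual API or implementation: the `Policy` and `ControlPoint` classes, the `mask` helper, the role name, and the record fields are all hypothetical.

```python
from dataclasses import dataclass, field


def mask(value):
    """Hypothetical masking rule: hide everything except the last four characters."""
    text = str(value)
    return "*" * max(len(text) - 4, 0) + text[-4:]


@dataclass
class Policy:
    """Hypothetical per-role policy: readable columns and the subset to mask."""
    allowed: set
    masked: set = field(default_factory=set)


class ControlPoint:
    """Illustrative single choke point through which every read must pass."""

    def __init__(self, policies):
        self.policies = policies   # maps role name -> Policy
        self.audit_log = []        # (role, columns, decision) tuples

    def read(self, role, record, columns):
        policy = self.policies.get(role)
        # Any column outside the role's policy causes the whole request to be denied.
        denied = [c for c in columns if policy is None or c not in policy.allowed]
        if denied:
            self.audit_log.append((role, tuple(columns), "DENY"))
            raise PermissionError(f"{role} may not read {denied}")
        self.audit_log.append((role, tuple(columns), "ALLOW"))
        # Authorized columns are returned, with masked ones obscured.
        return {
            c: mask(record[c]) if c in policy.masked else record[c]
            for c in columns
        }


if __name__ == "__main__":
    point = ControlPoint({"analyst": Policy(allowed={"name", "ssn"}, masked={"ssn"})})
    row = {"name": "Ada", "ssn": "123-45-6789", "diagnosis": "confidential"}
    print(point.read("analyst", row, ["name", "ssn"]))
    try:
        point.read("analyst", row, ["diagnosis"])
    except PermissionError as err:
        print("denied:", err)
    print(point.audit_log)
```

The sketch routes every request through one object so that enforcement, masking, and auditing happen in the same place, which is the property the article attributes to a control point that cannot be bypassed.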
