One Deceptively Simple Secret for Data Lake Success

Gartner turned heads last year when it declared that the majority of data lake projects would end in failure. As a self-avowed “old dog” of data warehousing, EMC’s Bill Schmarzo vehemently agrees with that assessment, but says there is one simple secret to having success with a data lake project.

Before we disclose Schmarzo’s secret formula (which really is unnervingly simple), let’s talk about how not to go about building a data lake. For starters, you can’t begin with the technology. That’s a recipe for disaster, but all too often in this over-heated big data culture of ours, that’s exactly what people do.

“The problem is that most customers are still tackling this whole big data conversation” from the technology side, Schmarzo says. “You start with your technology, bring in some Hadoop, throw some data in there and you kind of hope magic stuff happens. It’s really a process fraught with all kind of misdirection.”

While Hadoop has lowered the cost of data storage and data processing, it is not a magic bullet for big data. Unfortunately, many would-be practitioners of big data have bought the hype and are banking that their investments in Hadoop alone will somehow yield business insights. Chances are, it won’t.

Schmarzo also bemoans the data-first approach. Because Hadoop is so flexible and forgiving in how it eats up gobs of less-structured data, many organizations have taken a “store first, ask questions later” approach to data ingest. They’re putting every last piece of data they can get their hands on into Hadoop and hoping that their analysts and scientists can somehow make sense of it later.

“That’s like driving stakes in the sand and hoping to hit oil,” says Schmarzo, who is currently the CTO of EMC Global Services and used to work on Yahoo’s analytics team. “I would say that 90 percent of the companies I talk to have a flying blind approach. They’ve gone out and tried to hire the unicorns, the data scientists. If you can find one– and it’s hard because all five of them work at Google–then they can do great things.”

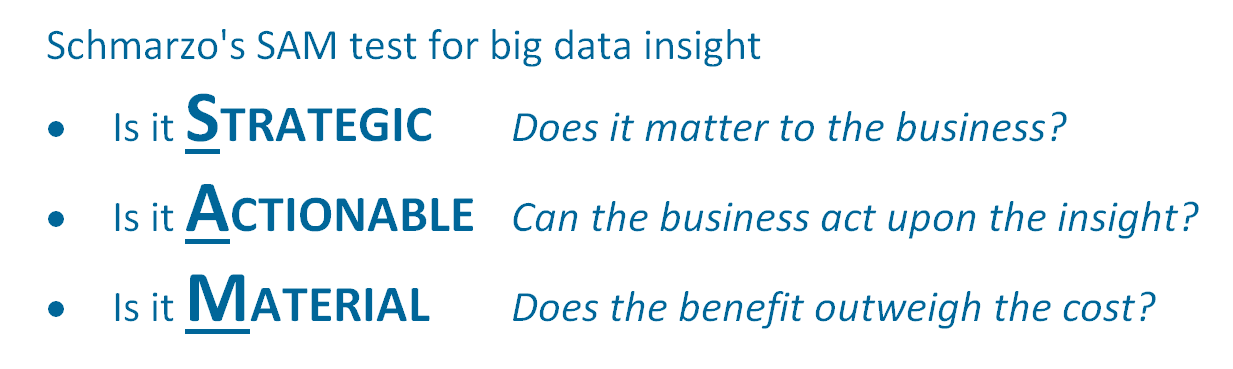

While data scientists are great at finding patterns in the data, it’s up to the business to make sure that those patterns actually mean something. “You’re going to find all kinds of stuff in the data, most of which is not useful at all,” Schmarzo says. “I’m sure there’s value in doing freeform discovery and letting smart people at it. But to be honest, they uncover insights that don’t pass the SAM test.”

Where’s Sammy?

Which brings us to Schmarzo’s simple process for data lake success. “Whenever we find stuff in the data we always apply the SAM test. Is it strategic? Is it actionable? Is it material? Use this really simple SAM test to decide what’s useful and what’s not useful. If it is [useful], then by golly we’ve got something. If it isn’t, then move on.”

If the insight doesn’t pass the SAM test, that doesn’t necessarily mean that you should ditch the data. After all, if you’ve (hopefully) already done the work to procure good data to test your hypothesis, then you might want to hold on to it to test other hypotheses. “More times than not, we end up archiving it because it’s so damn cheap to keep it,” Schmarzo says.

The SAM test is deceptively simple, but it’s not necessarily easy. Answering those three questions requires a lot of forethought. “It’s a really simple process but it is a lot of work, and it’s work in an area that most people in IT are uncomfortable with, which is really spending time to figure out how your company makes money,” says Schmarzo, author of the 2013 book “Big Data: Understanding How Data Powers Big Business.”

The SAM test is deceptively simple, but it’s not necessarily easy. Answering those three questions requires a lot of forethought. “It’s a really simple process but it is a lot of work, and it’s work in an area that most people in IT are uncomfortable with, which is really spending time to figure out how your company makes money,” says Schmarzo, author of the 2013 book “Big Data: Understanding How Data Powers Big Business.”

People in IT may know at a superficial level how the company makes money. “But if you ask them ‘What is the business trying to accomplish in the next nine to 12 months?’ they look at you like you have alligators crawling out of your ears,” Schmarzo says. “It’s an uncomfortable position for the IT folks to sit down with their business brethren and say ‘Hey what are you guys trying to accomplish?’ I can guarantee it’s not more BI reports, which is what IT is ready to give them, or a data lake with a whole bunch of data that nobody knows if it has any value in it or not.”

That raises another one of Schmarzo’s pet peeves: poor data governance in Hadoop, which he says will torpedo many data lake initiatives. “If you didn’t like data governance before big data, you’re going to hate it after big data,” he says. “Big data makes that problem worse.”

If you don’t have data governance in place in your data lake—not just cataloging the data but having full auditability, traceability, quality control, access control, and metadata management—then you can’t rely on the data.

And if you can’t rely on the data, then you can’t rely on the so-called “insights” that are supposed to come out of your data science projects.

“We think that big data means that data governance isn’t important any more. I’m an old old-school data warehousing guy and it always makes me laugh,” says Schmarzo, who’s been called the “Dean of Big Data.”

“Like ETL is dead. Really?” he continues. “What world are you in? Big data just makes that problem more hairy. I do have a single repository for data and I can do my ETL processing using Hadoop much faster. Life has gotten a bit more manageable. But it’s not managed. It’s a process you have to manage. You have to put in policies and processes to do that.”

Avoiding Big Data Alligators

Having confidence in your data lake requires a lot of hard work that goes beyond the data or the technology itself, he says. A successful data lake should be thought of a living entity where all the governance and security processes are well-defined. You may not want to hear it, but before you get to the sexy part of data science and finding insights hidden in data, there’s a bunch of boring old business work to do.

“It’s got to be treated like a corporate asset,” Schmarzo says. “You wouldn’t go out and buy a Tesla and leave it parked in a corn field. You’d put all the right kinds of protections around it. You’d park in a garage somewhere (unless you’re in Palo Alto–then you’d just leave it parked on the street). If data is truly an asset and the ‘new oil’ then by golly we should actually take care of it and value it.”

The twin phenomena of big data and Hadoop together are having a big impact. They’re changing the types of data that we analyze for insights and they’re changing how we analyze them. But there are some basic rules to the game that have not changed, but too many people have not realized this, according to Schmarzo.

You risk failure with your data lake if you don’t have a well thought-out process in place about what data you’re going to put in the data lake. “If you’re just going to randomly throw data in the data lake and hope that somehow the data is governed, you’re going to have a data dump really fast,” says Schmarzo, who teaches a course at the University of San Francisco called “The Big Data MBA.” “It’s a simple process, but it’s a lot of work. Make sure you know exactly how you’re going to use the data prior to bringing it in.”

Of course, what Schmarzo says is right. There are no shortcuts to success in big data. But for some reason, people want to believe that there are. “It’s as simple as that. I don’t’ know why people don’t want to do that,” he says. “Whenever we talk about our approach, people look at us like we’re crazy. No it’s the only way to do it!”

If you follow EMC’s approach, you end up building your data lake one business use case at a time. “You’re not going to start with 50 data sources. You start with three or four. And as you add a new use case, you keep adding new data….It becomes an asset, not just some random thing laying at the bottom of your basement.”

Related Items:

Finding a Single Version of Truth Within Big Data