How NVIDIA Is Unlocking the Potential of GPU-Powered Deep Learning

Companies across nearly all industries are exploring how to use GPU-powered deep learning to extract insights from big data. From self-driving cars and voice-directed phones to disease-detecting mirrors and high-speed securities trading, the potential use cases for the technology are large and expanding by the day.

Ever since computer scientist Geoff Hinton began training neural networks on GPUs several years back and helped popularize deep neural networks, researchers have been racing to apply the technique to tough modeling problems in the real world.

Hinton, who splits his time between Google and the University of Toronto, gets credit for popularizing the field, but GPU-maker NVIDIA is more than happy to pick up the reins and run with it. The company has years of experience applying GPUs in the high-performance computing (HPC), gaming, and high-end graphics spaces.

But applying GPUs and deep learning to big data is relatively new, and enormously promising, says Will Ramey, the senior product manager for accelerated computing software at NVIDIA.

“Deep learning is a powerful approach for making sense of big data,” Ramey tells Datanami. “Big data has been around for a while. People have collected all this data and now they’re trying to make sense of it. Deep learning is one of the most powerful tools that we’ve found to make sense of these large quantities of data.”

Powering ‘Delightful Experiences’

Data scientists and other researchers are looking to NVIDIA to help them get started with GPU-powered deep learning. Many attended the company’s GPU Technology Conference earlier this year, which offered deep learning education that was as good as, if not better than, dedicated conferences on deep learning, Ramey says. And just last month, NVIDIA was a little surprised when more than 7,500 people signed up for a free online course on deep learning.

Among the GTC presenters turning heads with GPU-powered deep learning were Google and Baidu. Google’s Jeff Dean told his audience how the company is using GPUs and deep learning in more than 50 projects.

One area where GPU-powered deep learning is especially promising is understanding the actual content of video. Like all big Internet firms that deal with video, Google must do its due diligence to ensure that inappropriate or trademarked content is not replicated on its systems. But instead of relying on human eyeballs to identify and index content, Google is exploring whether GPUs can do it cheaper and faster.

“If you have all that video content…and users can only search on the metadata–the textual description of what somebody typed in about the video rather than the content of the videos itself–that’s not as delightful an experience as you’d like to give your end user,” Ramey says. “But if you can actually have your computers analyze the content of the video and understand what’s in it and allow people to search based on what’s actually in the video…it’s a really powerful and delightful experience to deliver to your customers.”

Baidu, which is sometimes described as “the Chinese Google,” is doing similar things around speech recognition and natural language processing (NLP). “Like those of us in the Western world, they want to just talk to their phones and have them do the right thing,” Ramey says. “For English and French and German and the Romantic languages, there’s been a lot of deep research over the years. Much of that is understood.

“But for the Chinese language, which is more a tonal language than a phonetic language, that same kind of research has proven to be much more challenging, much more difficult,” he continues. “So Baidu has developed really sophisticated deep neural network models to allow them to provide the same, if not better, speech recognition capabilities in Chinese.”

Driving Miss GPU

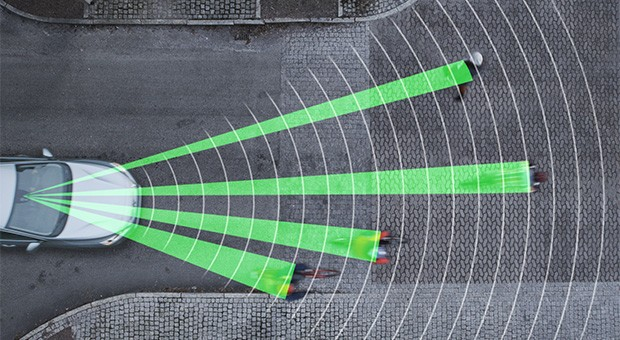

The path to driverless cars will also be paved with GPUs, which automakers are counting on to turn sensor data into actionable intelligence.

GPUs have already found their way into the computer systems of some advanced cars, where they’re powering “infotainment” systems and some basic autonomous capabilities, such as self-parking systems. But in the future, GPUs will be used for all sorts of real-time processing.

“We’re all excited about the path to self-driving cars. Self-crashing cars are a bad idea,” Ramey says. “We need to be able to demonstrate that these self-driving cars are going to be significantly safer than real human beings. You want it to do things like detect a pedestrian or read a speed limit sign.”

Deep neural networks trained on GPUs will also be counted on to properly identify the other vehicles on the road. “A car might see a vehicle coming head on and the way it responds really depends if it’s a fully loaded oil tanker or a full SUV or Mazda Miata,” Ramey says. “Having a car be able to make those decisions in split seconds is critical.”

Earlier this year, Audi announced that it will use NVIDIA TX1 GPUs in developing its future automotive self-piloting capabilities, such as speed limit sign reading and vehicle detection, Ramey says. Other OEMs are also doing impressive work towards self-driving vehicles, including Tesla Motors, BMW, Mercedes, Toyota, Honda, Volkswagen, Tata Motors, Ford and GM. “You’ll see this come out as driver-assist, recommendations, and notifications,” he adds. “But it’s all necessary on this path to completely self-driving cars.”

Mirror, Mirror, On the Wall

GPU-powered deep learning can be used in any field that requires fast and accurate recognition of unstructured data, such as images, video, and sounds. So it’s not surprising to hear that the technique is catching on in radiography.

NVIDIA is working with an AI startup named MetaMind that’s using GPU-powered deep learning to help doctors identify various conditions, including diabetic retinopathy, an eye disease caused by diabetes that can lead to blindness if left untreated. An ophthalmologist equipped with the proper GPU-powered imaging machine could see the early signs of this disease and take steps to prevent it from progressing.

Another company is working on a diagnostic mirror that uses GPU-powered deep learning to judge the health of a person by their image. “This device might be able to give you a really insightful checkup every morning before you go to work,” Ramey says.

The securities industry is also cashing in on GPU-powered deep learning. “They’re taking in large amounts of data, looking at historical patterns, and making recommendations for when and at what price to make trades… and to identify fraud and other mistakes,” Ramey says. “That whole area is a sweet spot for this technology.”

Some of the early projects involving GPUs and deep learning may seem far-fetched today, Ramey admits. “It’s a little bit of science fiction,” he says. “But you can basically see a straight line from where we are today to those kinds of applications being a reality.”

The World on GPUs

This month NVIDIA plans to ship new software aimed at helping data scientists build deep learning systems powered by GPUs. This includes the version 3 release of its cuDNN library, which will feature out-of-the-box integration with four popular frameworks used to train deep neural networks: Caffe, Minerva, Theano, and Torch.

The second announcement revolves around DIGITS 2, a new release of its GUI for managing deep learning environments on GPUs. The new version adds support for training on multiple GPUs simultaneously. By enabling data scientists to train their models twice as fast, it allows them to iterate much more quickly and reach a target level of accuracy sooner, the company says.
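For readers curious why adding GPUs can shorten training, here is a minimal Python sketch of synchronous data parallelism, one common way to spread training across devices. It is not taken from DIGITS or cuDNN, and nothing here describes how DIGITS 2 actually implements multi-GPU training. The function names (`shard_gradient`, `data_parallel_step`, `train`) and the toy linear model are hypothetical, and ordinary function calls stand in for GPUs.

```python
# Hypothetical illustration of synchronous data-parallel training.
# Each "worker" is a plain function call standing in for one GPU.


def shard_gradient(w, b, shard):
    """Summed squared-error gradients for y ~ w*x + b over one shard."""
    gw = sum(2 * (w * x + b - y) * x for x, y in shard)
    gb = sum(2 * (w * x + b - y) for x, y in shard)
    return gw, gb, len(shard)


def split(batch, num_workers):
    """Deal the examples round-robin into num_workers shards."""
    return [batch[i::num_workers] for i in range(num_workers)]


def data_parallel_step(w, b, batch, num_workers, lr):
    """One update: every worker processes its shard, then results are averaged."""
    shards = [s for s in split(batch, num_workers) if s]
    # On real hardware these calls would run at the same time, one per device.
    partials = [shard_gradient(w, b, s) for s in shards]
    n = sum(count for _, _, count in partials)
    gw = sum(g for g, _, _ in partials) / n
    gb = sum(g for _, g, _ in partials) / n
    return w - lr * gw, b - lr * gb


def train(batch, num_workers, steps=2000, lr=0.02):
    """Fit w and b by repeated data-parallel gradient steps."""
    w, b = 0.0, 0.0
    for _ in range(steps):
        w, b = data_parallel_step(w, b, batch, num_workers, lr)
    return w, b


if __name__ == "__main__":
    data = [(float(x), 3.0 * x + 1.0) for x in range(8)]  # points on y = 3x + 1
    print("1 worker: ", train(data, num_workers=1))
    print("2 workers:", train(data, num_workers=2))
```

Because the averaged gradient matches the one computed on the whole batch, the model learns the same thing regardless of the number of workers, while each worker handles only a fraction of the data per step. In practice, communication between devices eats into that gain, so the speedup is rarely perfectly proportional to the number of GPUs.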

There are probably some areas of machine learning that haven’t yet been impacted by the advances in GPU-powered deep learning, but Ramey hasn’t been able to find them.

“Every time I go out and poke around to see…if anybody is using GPUs in this area, I keep finding not just one, but multiple people,” Ramey says. “The entire revolution in deep learning is powered by GPUs.”

This article was corrected. Geoff Hinton did much to popularize deep learning on GPUs, but he did not invent the field of deep learning. Kunihiko Fukushima’s introduction of the Neocognitron in 1980 helped facilitate modern deep learning architectures. Before that, Alexey Grigorevich Ivakhnenko published the first general, working learning algorithms for deep networks in 1965.