Will Poor Data Security Handicap Hadoop?

Companies around the world are looking to Hadoop as a platform on which to perform big data analytics. Every day, petabytes of data flow into Hadoop clusters as those companies seek a competitive edge. However, the overall lack of built-in security threatens to hamper the open source platform’s spread before it’s really gotten off the ground.

If you set out to build a big data platform today, chances are good that data security would be one of the top priorities in the effort. Every week seems to bring news of yet another massive data breach. In the past 14 months, Anthem Blue Cross, Target, Home Depot, Staples, and JPMorgan Chase have collectively lost hundreds of millions of customer records, including names, addresses, credit card numbers, and Social Security numbers.

It’s unknown if any of these breaches involved Hadoop. In several of the breaches at retail chains, cyber criminals managed to get credit card numbers by surreptitiously loading malware onto Windows-based point of sale (POS) terminals used to process transactions. POS systems are typically hardened to withstand cyberattacks, but that’s not the case with Hadoop.

The question isn’t if anybody will hack Hadoop–it’s when. Companies are storing gobs of personally identifiable information (PII) in Hadoop. It’s just a matter of time before a malicious hacker or disgruntled employee tries to take it.

“I’ve heard story after story about people who are working in Hadoop clusters, and they can see everybody’s pay and everybody’s Social Security number,” says Eric Tilenius, CEO of Hadoop data security software vendor BlueTalon. “It’s shocking how open the system is today.”

Hadoop, of course, was designed in the open to allow people to access and manipulate large amounts of data. It wasn’t until Hadoop hit the mainstream that the community reconsidered the situation and tried to put some speedbumps in place to slow people down. Things like Apache Knox (authentication), Apache Sentry (authorization), Cloudera’s Project Rhino (encryption), and Apache Ranger (security policy management and user monitoring) are slowly making headway. More recently Hortonworks has spearheaded the related issue of data governance with the new Data Governance Initiative.

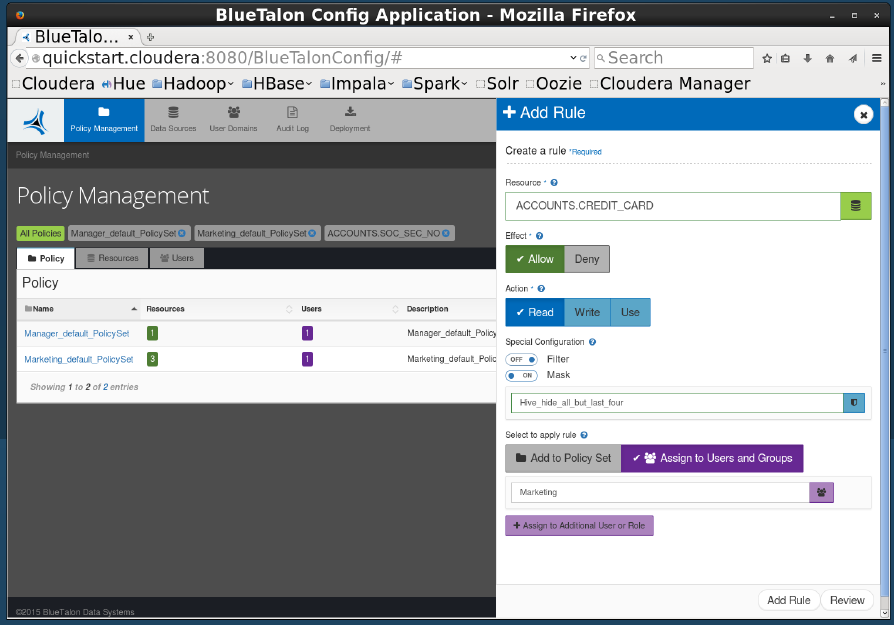

BlueTalon’s software gives administrators the capability to implement fine-grained access control against Hadoop.

While the open source community is making progress, some companies in regulated industries are growing impatient. That’s the number one factor driving the adoption of third-party security solutions, such as the tool that BlueTalon launched yesterday at Strata + Hadoop World.

“What we do is entitlement or fine-grained access control based on who you are and what you do,” Tilenius explains. “We can grant [access to] very specific data that you’re entitled to. That lets you get just the data you need to run your job, and not more data.”

The software implements a security control point against queries executed through Hadoop’s SQL facilities, such as Hive, Impala, and JDBC/ODBC connections; a control point for the HDFS API is in the works. In addition to defining access control policies via LDAP and enforcing them with the runtime (which charges a small processing tax on the Hadoop cluster), BlueTalon also provides auditing and data masking capabilities.
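To make the idea of a control point concrete, here is a minimal sketch in Python of policy-based column filtering, masking, and auditing applied to query results. It is not BlueTalon's implementation or API. The role names, column names, `POLICIES` table, and the `mask` and `enforce` functions are all hypothetical and exist only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    allowed: frozenset                # columns returned unchanged
    masked: frozenset = frozenset()   # columns returned in masked form


# Hypothetical roles and columns, for illustration only.
POLICIES = {
    "analyst": Policy(allowed=frozenset({"name", "state"}),
                      masked=frozenset({"ssn"})),
    "hr_admin": Policy(allowed=frozenset({"name", "state", "ssn", "salary"})),
}

audit_log = []  # (role, decision) tuples, appended on every request


def mask(value):
    """Hide all but the last four characters of a value."""
    text = str(value)
    return "*" * max(len(text) - 4, 0) + text[-4:]


def enforce(role, rows):
    """Filter and mask query results according to the role's policy."""
    policy = POLICIES.get(role)
    if policy is None:
        audit_log.append((role, "denied"))
        raise PermissionError(f"no policy defined for role {role!r}")
    audit_log.append((role, "allowed"))
    result = []
    for row in rows:
        out = {}
        for column, value in row.items():
            if column in policy.allowed:
                out[column] = value
            elif column in policy.masked:
                out[column] = mask(value)
            # Columns in neither set are dropped from the result.
        result.append(out)
    return result
```

In this sketch an analyst querying a customer table would see names and states, a masked Social Security number, and no salary column, while a role with no policy is refused outright and the refusal is logged.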

As Tilenius explains, companies are either being forced to scale back their Hadoop ambitions due to the threat posed by Hadoop’s wide-open permissions, or are taking great pains to manage access manually.

“We have several customers we’re talking to where they’ve put a 360-degree view of the customer in Hadoop, but they can’t roll it out because they don’t have the right access controls,” Tilenius says. “One of the customers has a small number of what sounds like priests who are allowed to access the Hadoop cluster. When they’re loading up a lot of data, the priests are deciding manually who can run what queries with the sensitive data.”

Another firm tackling the Hadoop security question is Centrify, a developer of unified identity management solutions. This week at Strata + Hadoop World, the company announced partnerships with Cloudera, Hortonworks and MapR Technologies to support its latest product, Centrify Server Suite 2015.

The new product controls who can access Hadoop essentially by extending Microsoft’s Active Directory to Hadoop. This enables Hadoop clusters to use Kerberos for authentication and the open LDAP standard for fine-grained access control. Centrify has worked with the three Hadoop distributors to ensure compatibility with their Hadoop versions.

“Over the past year or so we have had dozens of our enterprise customers begin to embark on their big data journey, and in doing so they saw immediate significant value in their Centrify identity management solution being applied to their new Hadoop deployments,” the company’s chief product officer, Bill Mann, says in a press release.

These aren’t the only vendors tackling Hadoop security, of course. Dataguise has a respected encryption and data masking offering that’s being used by many large Hadoop users, including banks, while Zettaset provides security capabilities, such as fine-grained access control and encryption, in its flagship Hadoop management product.

Whatever approach a Hadoop customer takes, the writing is on the wall: Hadoop security must get better. Not using Hadoop is not an option. And priests do not scale.

“We’re in a situation where we have an unstoppable force, which is the growth of Hadoop, hitting unmovable object, which are security regulations,” BlueTalon’s Tilenius says. “A lot of sophisticated customers are well aware of this. Some are trying to write their own software. But I think most of them would rather buy it than build it.”
