Taming the Wild Side of Hadoop Data

Organizations are attracted to Hadoop because it lets them store huge amounts and different types of data, and worry about structuring it later. But that “anything goes” philosophy has a downside, and can threaten to turn a big data lake into bottomless pit. Today Hortonworks unveiled a plan to give data management on Hadoop just a bit more structure.

Dubbed the Data Governance Initiative (DGI), Hortonworks aims to lead the development of new open source software that help organizations track exactly what data goes into Hadoop, how it’s transformed, who accessed it, and where it ultimate ended up. The DGI project will touch multiple disciplines, from metadata tagging and privacy settings to compliance reporting and access control.

There is a huge demand for data governance within Hadoop, says Hortonworks product manager Tim Hall. “There’s this need, want and desire for auditors and compliance officers to come check on who touched the data, when did they touch it, is there a chain of custody issue, is there transparency in terms of data privacy and protection,” he tells Datanami. “These are the sorts of things that need to be addressed. The more data you land in Hadoop, the harder the problem becomes.”

Several of Hortonworks’ customers will work with the Hadoop distributor on DGI, including Merck, Aetna, and Target. The data analytics giant SAS, which already sells a data governance package, will also be a first-level member of the project. If all goes as planned, DGI will eventually become an open source project at the Apache Software Foundation and be offered as a core component of the Hadoop stack.

Hortonworks is not the first company to tackle the problem of data governance, either inside Hadoop or in the wider ecosystem. But it decided to go down this road because it felt that the Hadoop ecosystem would benefit from having its own data governance framework that was designed from the beginning to be open and transparent on the one hand, and to handle complex polystructured data on the other.

Openness is key to DGI, Hall says. “There are a large number of data governance solutions on the market today and we have absolutely no intention to overrun the positon those vendors have and the capabilities they’re currently delivering,” he says. Instead, Hortonworks is looking to “enrich and augment” the existing data governance solutions on the market and to allow them to integrate with DGI through open APIs.

However, vendors that are currently targeting Hadoop with their data governance wares may be threatened. The idea that Hadoop users will be OK with having run all of their YARN workloads managed through a single data governance tool that’s controlled by a third party will not get much traction in the long run, Hall says.

“There are two chances of that happening: slim and none,” he says. “What we’re doing is very consistent with the corporate culture of Hortonworks. We’re going back to innovate at the core. [We’re asking], how do we make sure that as we mount these new engines in YARN that we’re looking at consistent audit, security and metadata tagging so that you can ensure that, regardless of where your entry point is–third party vendor tooling, direct tooling from Hadoop– that you get consistent audit informant, and that your security policies are applied consistently across all those engines.”

It’s not an easy problem to address, and there are many aspects that need to be looked at. For example, while a given person or application may have been granted role-based access to a certain data set, they may be barred from mixing that data with other data, even if the data has been anonymized. Such data mixing can result in “re-personalization” of datasets, and lead the Hadoop user afoul of laws and regulations. Companies dealing with the data of EU residents, in particular, have to follow very strict rules covering the movement of that data outside of geographic zones.

Hortonworks aims to help address these challenges by adopting so-called attribute-based access control (ABAC) capabilities within the DGI deliverable. ABAC would complement existing role-based access control (RBAC) capabilities, and work all the way down to the column level.

Metadata tagging is another challenge that will be addressed in DGI. Existing tools, such as Apache Falcon, enable users to apply metadata tags to data arriving at a certain frequency. For incoming data that is more ad hoc, “there will be capability for the data governance czar or the data wrangler … to tag that dataset and pull from existing data classification schemes and leverage those,” Hall says.

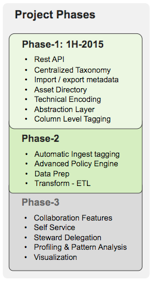

Hortonworks hopes to have a working prototype of DGI available soon, and to have a technology preview available before the end of the first quarter, with general availability of the first release targeted for the second half of the year. The company intends that development of DGI will follow a three-part plan, much like its Stinger Initiative for improving Hive. More information on features and functinlity will be shared at the upcoming Strata+Hadoop World conference taking place next month in San Jose, California.

Hortonworks hopes to have a working prototype of DGI available soon, and to have a technology preview available before the end of the first quarter, with general availability of the first release targeted for the second half of the year. The company intends that development of DGI will follow a three-part plan, much like its Stinger Initiative for improving Hive. More information on features and functinlity will be shared at the upcoming Strata+Hadoop World conference taking place next month in San Jose, California.

Backed by a large open source community, Hadoop has evolved quickly but somewhat erratically. It’s a relatively young but very promising code base that needs hardening and maturation before it will fit comfortably into the corporate enterprise. It was inevitable that the DGI project, or something else like it, came along to take some of the edge off the places where Hadoop was meeting resistance. But it sounds like Hortonworks will be mindful not to take too much metal off the blade.

“You know that drawer in your kitchen?” Hall asks. “The junk drawer. We don’t want Hadoop to turn into that. Nobody wants that… It doesn’t need to be the Wild West.” While nobody is looking to make Hadoop as rigid as an RDBMS, there needs to be some more structure applied to the data stored in Hadoop, he says.

“It gets to the point where data is ingested into Hadoop, and then it becomes like a black box,” Hall continues. “Product X says ‘Yes the data has landed within the Hadoop infrastructure.’ But who touched it, how did they massage it through the quality processes, how did they cleanse it, how did they curate data sets? They lose visibility into all those intermediate and interim steps. We’d like to shine a bright light on all of that stuff and make sure that those existing products get that level of visibility, which is why the integration APIs we’re building for this initiative are fundamentally baked into the core.”

Related Items:

Hadoop Hits the Big Time with Hortonworks IPO

Hortonworks Hatches a Roadmap to Improve Apache Spark

Hortonworks Goes Broad and Deep with HDP 2.2