How GE Drives Big Machine Optimization in the IoT

The folks at General Electric Intelligent Platforms get to play with big toys. Whether it’s a 200-foot-tall wind turbine, a $10-million jet engine, or a giant industrial rock crusher, the object of their professional attention is easy to see. What’s harder to grasp is how they transform the terabytes of data streaming off the big machines’ sensors in ways that save their owners millions of dollars.

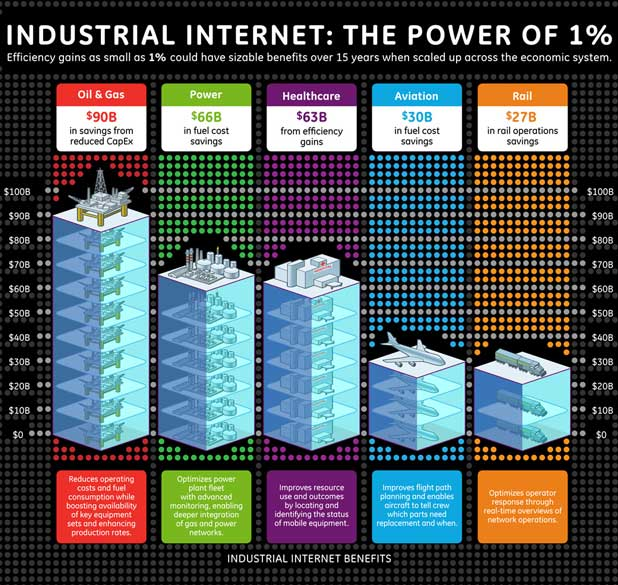

The Intelligent Platforms branch of General Electric uses a combination of data science, massively parallel computing, and the Internet of Things (IoT) to optimize big machines in ways that were never before possible. They call what they do “recombinant innovation,” and it’s largely about rethinking how traditional processes work at industrial levels. Small changes made to traditional business processes and maintenance schedules may cut costs or improve efficiency by a relatively small amount. But spread out over a large fleet, these changes can turn into big savings over time.

“What we can do now are things that required so much compute power before that it would take 20 to 30 days to run, if you could even run it,” says Rich Carpenter, Chief Technology Officer of Software at GE Intelligent Platforms. “There was too much data, there was not enough compute power, and these queries would run for 20 or 30 days and then fail. It just wasn’t physically possible.”

While the industrial giant did use traditional high performance computing (HPC) resources to research industrial processes, the time and expense requirements were too great to put that sort of deep analysis into production. That is changing now in the big data era.

“When we put it into a big data architecture, using standard commercial cloud technology like Hadoop, we can run those types of queries in four to five minutes by scaling up the right CPU and distributing the data,” Carpenter tells Datanami. “We get those answers back very, very quickly. Now we can run those kinds of complex analyses every five minutes as opposed to once a year. Then we can be proactive about emerging fleet-level problems long before customers even see that, and get back and plan the maintenance and do the changes that we need to avoid some pretty significant problems for the customer and for us.”

Big Machines, Big Data

GE Intelligent Platforms monitors 30,000 assets, which are collectively worth about $11 billion. Some of these assets are built by GE itself, which has more than a quarter million gas turbines, medical devices, jet engines, and locomotives in production, and some are built by other companies and just managed by GE.

Among the managed assets are more than 1,600 gas turbines, the sort that are used to power electric plants. According to GE, each turbine can be equipped with more than 100 physical sensors and another 300 virtual ones, and can generate terabytes of data per day, which is also about what a single jet engine generates.

Wind turbines are also generating big data that is ingested by GE. The company helps clients run them efficiently by continuously monitoring conditions and adapting the turbines up to 400 times per second to match conditions. Changing the angle of turbine blades can make the turbine run better depending on whether it’s a high-wind speed or low-wind speed situation. The company, which recently became the leading supplier of wind turbines, is expected to introduce new turbines that use lasers to detect wind gusts up to 100 meters out, according to a 2013 New York Times story.

Building smart trains is also a focus at GE. Inside each of its 218-ton Evolution-class locomotives are 250 sensors that generate millions of data points every hour. The data collected helps to optimize speeds and can help squeeze the most mileage out of each locomotive’s massive fuel tank.

The oil and gas industry is another area in which GE Intelligent Platforms has enjoyed early success. Instead of having employees drive the length of a hundred-mile pipeline to take measurements, for example, sensors can relay that information instantaneously via satellite, cell, or land-line networks, eliminating the need for manual data collection.

Operational Vs. Process Analytics

CTO Carpenter splits the analyses that GE Intelligent Platforms does into two buckets: operational analytics and process analytics. Operational analytics is typically about detecting signals in the data that would trigger a change in the maintenance schedule of a piece of equipment, such as washing a jet engine that’s been operating in dusty regions, or lubricating a mining truck that’s been running continuously for weeks on end.

“We’re finding that, rather than have five to 10 minutes of warning [before something breaks], that we can give them three weeks or three months of warning,” Carpenter says. “Sometimes you can get a relatively inexpensive piece of equipment that if it fails, shuts down a very expensive piece of equipment.”
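
One simple way to picture that kind of early warning is trend extrapolation: fit a line to a slowly degrading sensor reading and estimate when it will cross a limit. The sketch below is only an illustration under stated assumptions. The function name `days_until_threshold`, the one-reading-per-day spacing, and the fixed threshold are all hypothetical, and the article does not say that GE uses this method (Carpenter mentions neural networks, fuzzy logic, and principal component analysis instead).

```python
def days_until_threshold(readings, threshold):
    """Estimate days of warning before a rising reading reaches a threshold.

    Hypothetical helper: assumes one reading per day, oldest first, and a
    roughly linear upward drift. Returns None when no upward trend is found.
    """
    n = len(readings)
    if n < 2:
        return None  # not enough data to fit a line

    # Ordinary least-squares fit of reading against day index 0..n-1.
    x_mean = (n - 1) / 2
    y_mean = sum(readings) / n
    numerator = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(readings))
    denominator = sum((x - x_mean) ** 2 for x in range(n))
    slope = numerator / denominator
    if slope <= 0:
        return None  # flat or improving: no crossing predicted

    intercept = y_mean - slope * x_mean
    crossing_day = (threshold - intercept) / slope
    # Warning time is measured from the most recent reading (day n-1).
    return max(0.0, crossing_day - (n - 1))
```

A steadily climbing vibration or temperature reading would return a positive number of days, which a maintenance planner could compare against the lead time needed to schedule the repair.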

Only after a company has stabilized its equipment with operational analytics can it take the next step into process reliability. “There’s no reason to optimize any process if your equipment is not reliable because you’re optimizing an unstable process essentially,” he says.

The mining industry provides a good example of how process analytics can be deceptively complex. “What’s the goal of rock mining in Australia? Get rocks on ships. Dig them out, grind them up, then haul them to ships,” Carpenter says. “But if we can crush the rocks in a very particular way, we can get 2 to 3 percent more flow from the mine to the ships.”

The first step in a process reliability engagement is to identify and eliminate variability. “You may not be looking for the best size, but a consistent size” of rock, he says. “Then you start changing your process a little bit to get it to the optimal size. So we reduce variations, then move the center point to the optimal point, and then we monitor it from a distance to make sure that it stays that way.”
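
The order Carpenter describes (reduce variation first, then move the center point, then monitor) can be sketched as a small decision rule. This is a minimal illustration, not GE’s software: the function name `process_stage`, its parameters, and the idea of summarizing a batch by its mean and standard deviation are assumptions made for the example.

```python
from statistics import mean, pstdev


def process_stage(sizes, target, max_spread, tolerance):
    """Decide which step of the process-reliability sequence a batch is in.

    Hypothetical parameters (all in the same unit as `sizes`):
      target     - the optimal rock size the process should center on
      max_spread - largest acceptable standard deviation of sizes
      tolerance  - largest acceptable gap between batch mean and target
    """
    spread = pstdev(sizes)  # how inconsistent the rock sizes are
    center = mean(sizes)    # where the process is currently centered

    # Step 1: consistency comes before optimality.
    if spread > max_spread:
        return "reduce_variation"
    # Step 2: once consistent, nudge the center toward the optimum.
    if abs(center - target) > tolerance:
        return "shift_center"
    # Step 3: consistent and centered, so just keep watching.
    return "monitor"
```

For example, a batch with sizes of 10, 30, 20, and 40 against a target of 25 would be flagged for variation reduction even though its average is already on target, which mirrors the point that a consistent size matters before the best size does.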

Data Science at GE IP

GE Intelligent Platforms processes all this data using a collection of Hadoop, NoSQL databases, an extensive fleet of sophisticated algorithms, and analytic software developed in-house and by third parties. The company runs a large Hadoop cluster at its three-year-old laboratory in San Ramon, California. After first starting with Cloudera, the company switched to the Hadoop distribution from Pivotal, which isn’t surprising considering the $105 million stake its parent company GE took in the EMC/VMware spin-off.

While the company has the latest big data infrastructure, the human element is arguably more important for big data analytics. GE Intelligent Platforms employs more than 3,000 people around the world, including a good number of data scientists and data analysts, who are tasked with the tough job of separating informational chaff from potential signals. “As we go on this Internet of Things journey, we had to add a different set of skills to the company that didn’t exist before,” Carpenter says. “Data science is a skill set that we’ve identified as people with specialized skills. We’ve hired a few of them and brought them into GE.”

In support of the data scientists are data engineers, who are experts at manipulating data into a form that data scientists can use. “Then we have to bring in some analytic experts that know how to develop these complex neural networks, and fuzzy logic and principal component analysis and other techniques, and do that in a way that we can put them into operational production on a very fast basis,” Carpenter says. “Then we had to bring in cloud experts who know how to work in a massive parallel computer processing environment. That mindset is not quite there in a lot of industrial businesses because they’re thinking more traditional client-server. So we’ve absolutely had to bring in a different skill set to GE in order to make this work.”

Up to this point, GE Intelligent Platforms has mostly catered to large industrial outfits. But as industrial Internet and IoT use cases become more widely known, smaller companies will begin to benefit too.
