Why Hadoop on IBM Power

In the quest to achieve data-driven insight, Hadoop running on Intel X86-based processors has emerged as a de facto standard. But X86 is not the only game in town, and before the book on Hadoop is written, IBM would like to say a thing or two about the virtues of running Hadoop on its Power processor.

Unlike most enterprise software markets of the past 30 years, the Hadoop discussion rarely touches on the combination of hardware architecture and operating system. It goes without saying that if you’re running Hadoop, you’re almost certainly running it on a Linux OS and X86 iron. As Hadoop makes the transition from open source to commercial software, this fact begins to stand out.

But there are alternatives to Linux and X86. Microsoft, for instance, sells a Windows-based version of Hadoop, called HDInsight, that it offers either as a service hosted in its Azure cloud or as an on-premises, X86-based product. But Microsoft, which developed HDInsight in concert with its business partner Hortonworks, doesn’t appear to have gained much traction with the offering outside of its Azure cloud.

And then there is Microsoft’s old rival, IBM, which sells a version of its Hadoop offering, called InfoSphere BigInsights, designed to run on the Linux OS running on its 64-bit RISC Power architecture. With Hewlett-Packard’s PA-RISC, Oracle/Sun’s SPARC, and HP/Intel’s Itanium architectures either dead or dying, IBM is the last remaining counter to Intel and its X86 architecture when it comes to general-purpose chip architectures for running commercial and scientific workloads.

With Hadoop sales and deployments poised to grow quickly in the next few years, Intel and the X86 server makers stand to benefit from increased hardware sales. But IBM is also positioning itself to capture a share of this emerging market with Power. IBM continues to invest billions of dollars every year in Power, and the rollout of the new Power8-based servers offers us an opportunity to see what IBM has to offer prospective Hadoop customers.

IBM’s Linton B Ward, who holds the title Distinguished Engineer and Chief Engineer Power Workload Optimized Systems, recently spoke with Datanami about the benefits of running big data analytics on IBM’s latest Power8 servers. As Ward explains it, the new Power chips have certain advantages over the latest Intel Xeon processors, but there are other aspects of IBM’s history in system architecture and software design that will come into play as well.

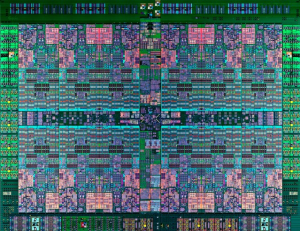

On a chip-to-chip basis, IBM’s Power8 chip has advantages over Intel’s Xeon Ivy Bridge in memory capacity, memory I/O, cache size, and the number of threads in the processor itself, Ward says. “The memory bandwidth [in Power8] is well beyond that of the current [Intel] IvyBridge,” he says. “We believe the capacity of the chip is more capable for just the raw processing for data-centric workloads.”

The total I/O bandwidth of the chip is another area where IBM feels good about Power8. “We have large memory capacity, and scalable capacity as well. But the second step is really good I/O bandwidth, or the ability to get I/O in and out of the box for data centric workloads,” Ward says.

The Power8 chip is equipped with 96 MB of L3 cache, compared to 15 MB on the Ivy Bridge Core i7 Extreme chip. The on-chip Power8 memory controllers can handle up to 1 TB of RAM and drive 230 GB per second of sustained memory bandwidth. “The I/O bandwidth of these servers is pretty immense, supporting up to 16 wide Gen 3 PCI. And as I mentioned, with the PCI on the chip, that takes cost out as well,” he says.

IBM’s Power Systems servers have historically been more expensive than standard Intel-based servers. Big Blue has always taken the long view with its servers, emphasizing the three- or five-year total cost of ownership (TCO) as a better expense metric than up-front acquisition costs. In recent years, as its share of the market has slipped, IBM dropped its Power server prices to make them more competitive with Intel-based servers. That trend continues with the current Power8 lineup, where IBM is debuting the smaller and cheaper entry-level servers first, before rolling out the big honking symmetric multi-processing (SMP) machines, expected later this year and in early 2015.

IBM maintains that customers can save money by running Hadoop clusters on Power. (It doesn’t currently have any long-term TCO studies on this, but you can bet they’re in the works.) The performance advantages in hardware mean users can get by with buying less hardware up front, Ward says. IBM also makes the case that its software can help customers save money, such as with its Platform Symphony job scheduler, which came out of the HPC world and can replace MapReduce. If you’re looking for a better file system than HDFS, IBM may sell you on General Parallel File System (GPFS), which also came out of the HPC world and which also works with IBM’s BigInsights.

IBM supports its BigInsights Hadoop distro running on the X86 and Power versions of Linux. That means users can run BigInsights on a commodity Intel X86 cluster or on IBM’s Power servers. Should customers worry about vendor lock-in if they choose the Hadoop-on-Power Linux approach? No, Ward says.

“Because a lot of Hadoop runs in Java anyway, the Jaql layer, it really is very insulated from the user,” he says. “We are developing best practices in Redbooks and whatnot to help with the various tunables and so forth. But in general, we’re seeing it doesn’t affect the user’s code.”

IBM is hoping to take a bite out of Intel’s dominance in the big data market with its Open Power Foundation. Launched in conjunction with its Power8 servers earlier this year, the Open Power Foundation brings together a group of partners to build “white box” servers based on Power8 chips. Google, Nvidia, Mellanox, and Tyan have all signed up.

As big data workloads become more common, you can expect IBM to try to bend the cost curve in other ways, too. With the Power8 rollout, that means a stronger emphasis on the role of networking and clustering, and swinging the storage needle away from a decentralized architecture back towards a centralized approach, according to Ward.

“The other piece of system design that has been a widely held convention in the Hadoop world is that you have to put disks inside the server to get the performance out of the disk,” he says. “That was true when Hadoop was invented, but that was back in the 2005-2006 timeframe, when many people were still running 100Mb Ethernet.”

While the phrase storage area network (SAN) conjures images of expensive Fibre Channel gear, the SAN concept will prove indispensable going forward, Ward says. Network speeds have improved tremendously with today’s 10Gb and 40Gb Ethernet setups, and with 100Gb InfiniBand networks on the horizon.
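To put rough numbers on Ward’s point, the short Python sketch below converts the link speeds mentioned above into raw megabytes per second and estimates how many spinning disks each link could keep fed. The per-drive throughput figure (`ASSUMED_DISK_MB_PER_S`) is an illustrative assumption, not a number from IBM or from this article, and the calculation ignores protocol overhead, so treat the output as back-of-the-envelope only.

```python
# Link speeds named in the article, as raw line rates in gigabits per second.
LINK_SPEEDS_GBPS = {
    "100Mb Ethernet": 0.1,
    "10Gb Ethernet": 10.0,
    "40Gb Ethernet": 40.0,
    "100Gb InfiniBand": 100.0,
}

# ASSUMPTION (not from the article): sustained sequential throughput of a
# single 3.5-inch SATA drive, in megabytes per second.
ASSUMED_DISK_MB_PER_S = 150.0


def link_mb_per_s(gbps: float) -> float:
    """Convert a raw line rate in Gb/s to MB/s (1 Gb = 1000 Mb, 8 bits per byte)."""
    return gbps * 1000.0 / 8.0


def disks_per_link(gbps: float, disk_mb_per_s: float = ASSUMED_DISK_MB_PER_S) -> float:
    """Estimate how many drives, streaming at the assumed rate, one link could carry."""
    return link_mb_per_s(gbps) / disk_mb_per_s


if __name__ == "__main__":
    for name, gbps in LINK_SPEEDS_GBPS.items():
        print(
            f"{name}: {link_mb_per_s(gbps):.1f} MB/s raw, "
            f"roughly {disks_per_link(gbps):.1f} assumed drives"
        )
```

Under that assumption, a 100Mb link cannot keep up with even one drive, which is consistent with the original case for putting disks inside each Hadoop node, while a 10Gb or 40Gb link has room for several drives’ worth of traffic.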

IBM is counting on its partner Mellanox to help drive extreme performance from clusters of Power8 servers. Two weeks ago, Mellanox committed to building a series of network interconnects based on 40Gb Ethernet, remote direct memory access (RDMA) technology, and RDMA over Converged Ethernet (RoCE) technologies. The hardware will be used to drive NoSQL databases and in-memory data grid solutions, the companies said.

“We believe the next round of disruption is in clustering,” Ward says. “As you know, the bandwidth of disk doesn’t change much over time because of the inherent rotational nature. So when you’re using 3.5 inch SATA drives for big data storage, the performance of those drives hasn’t changed much over the last eight to 10 years. In fact the performance of drives over the last 50 years has been slow incremental growth. So we believe Ethernet then changes the way you begin to think about system design elements around big data. So we’re pursuing that type of design which allows us to have the best of both worlds: a low cost and network centralized data store.”

