Microsoft’s SQL Server 2014 Adds In-Memory, Updates Columnar

Everybody wants their databases to retrieve and store information faster, and with SQL Server 2014, which is released to manufacturing today, Microsoft is tweaking its columnar data store as well as integrating its first in-memory database technology to boost transaction processing and data warehousing workloads, respectively.

Back in the dawn of time, Microsoft partnered with Sybase to get its initial database engine, but over time SQL Server has been tweaked and changed so much that it is almost unrecognizable as a Sybase variant. The delivery of the new “Hekaton” in-memory database engine, which is completely new and marks the first time in 15 years that the database engine has been updated, will widen the gap even further.

Back in the dawn of time, Microsoft partnered with Sybase to get its initial database engine, but over time SQL Server has been tweaked and changed so much that it is almost unrecognizable as a Sybase variant. The delivery of the new “Hekaton” in-memory database engine, which is completely new and marks the first time in 15 years that the database engine has been updated, will widen the gap even further.

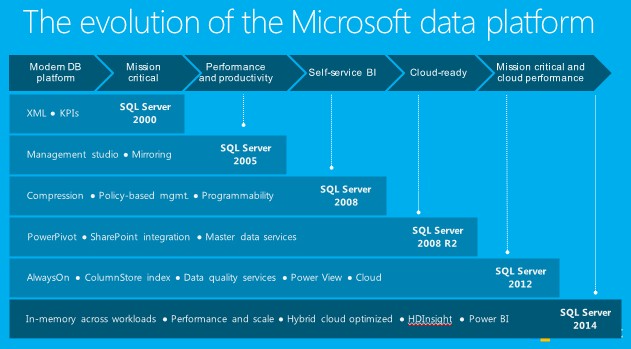

The journey for Microsoft has been a long one, explained Tracy Daugherty, principal group program manager lead for SQL Server 2014 and Windows Azure SQL Virtual Machines, one of its two cloud variants, at a meeting last week in Microsoft’s offices in Bellevue, Washington. “We became more and more about enterprises as we went along,” he said, “and in the last two releases, we did a real hard play to differentiate.”

The big changes on the analytics front started with the PowerPivot in-memory technology that Microsoft created for Excel 2010 and 2013, which integrated with SQL Server 2008 to allow for the manipulation of datasets with over 1 million rows. This is more of a data exploration tool and dashboard creator than an in-memory database. With SQL Server 2012, Microsoft added the first iteration of its Columnstore Index, a columnar data store that significantly speeds up complex queries in data warehouses while at the same time reducing the capacity requirements for databases thanks to clever compression techniques.

With SQL Server 2014, two new technologies to speed up queries are coming out: the Hekaton in-memory database feature and SSD Buffer Pool Extension. The columnar data store is also getting some performance tweaks with a Clustered Columnstore Index variant.

The Hekaton in-memory database engine represents the largest change with SQL Server 2014. We have gone through this feature in fine detail over at EnterpriseTech, since this feature is aimed at transaction processing instead of analytics. But the interesting bit about in-memory transaction processing is that it enables such speedy queries against production databases that have live rather than summary data that in many cases putting in-memory databases underneath ERP, CRM, and other back-end systems obviates the need for data warehouses to do separate queries. This, in fact, is precisely what application software maker SAP has found out as it has deployed its own HANA in-memory database underneath its own ERP and CRM systems as well as the data warehouse it uses to steer its business.

The Hekaton in-memory database engine represents the largest change with SQL Server 2014. We have gone through this feature in fine detail over at EnterpriseTech, since this feature is aimed at transaction processing instead of analytics. But the interesting bit about in-memory transaction processing is that it enables such speedy queries against production databases that have live rather than summary data that in many cases putting in-memory databases underneath ERP, CRM, and other back-end systems obviates the need for data warehouses to do separate queries. This, in fact, is precisely what application software maker SAP has found out as it has deployed its own HANA in-memory database underneath its own ERP and CRM systems as well as the data warehouse it uses to steer its business.

Unlike HANA, Microsoft’s Hekaton in-memory database does not require that customers convert all of the tables, stored procedures, and other database features to the in-memory format. Rather, explained Daugherty, customers can use a migration tool to find which database features are being hammered on most by applications and convert them from disk storage to in-memory. Customers can, of course, convert all of their tables and stored procedures to in-memory if they so choose. At the moment, Hekaton has some limitations in that it does not support large objects (LOBs), triggers, sparse columns, constraints, foreign keys, and a number of other features. The Hekaton engine similarly does not scale well over more than two processors and has a limitation of 256 GB for the total size of the database. This is a V1 product, and the Hekaton engine will be updated over time, adding support for more database features and upping the size of the databases supported.

The good news for Microsoft customers is that Hekaton can run on any server – not on pre-certified machines like SAP HANA – and it comes bundled with SQL Server 2014 Enterprise Edition at no additional cost. Hekaton works best on applications that code applications close to the database, using stored procedures and with hot data fitting inside of main memory. Legacy OLTP applications running on Windows Server and accessing SQL Server databases see a speedup of somewhere between 4X and 7X, and applications that have periodic bulk updates and heavy random reads can see a speedup of 10X. Web-style applications that take full advantage of SQL Server can see as much as a 25X improvement in performance, according to Microsoft, and even a workload derived from the disk-tuned TPC-C online transaction processing benchmark saw a factor of two boost going in-memory.

You don’t have to go in-memory to boost OLTP performance, by the way. You can instead add flash memory to your systems and use the new SSD Buffer Pool Extension feature of SQL Server 2014. The buffer pool for the plain-vanilla SQL Server engine holds hot data and indexes and is usually loaded into main memory. Microsoft had been coding this buffer pool extension for SQL Server 2012, but it did not make the cutoff, says Kevin Liu, principal lead program manager for SQL Server.

With the buffer pool extension, customers can create a much larger buffer pool using flash memory inside of servers. Either PCI-Express flash cards or solid state drives hanging off disk controllers can be used to create this extended pool, but Liu says obviously PCI-Express flash works best because it has much lower latency. The rule of thumb with the SSD Buffer Extension Pool feature is to have somewhere between four and 10 times the amount of memory allocated to databases available on flash for SQL Server to play with. In its internal testing, the extended buffer pools boosted the performance of OLTP workloads by as much as a factor of three. This feature has been added to SQL Server 2014 Standard Edition and Enterprise Edition, and again is bundled into the base price. Incidentally, the SSD buffer pools cannot be used with the Hekaton in-memory database and it is not optimized for data warehouse workloads, where table scans are so large it would wipe out the cached pages stored on the SSDs.

For data warehousing workloads, the new Clustered Columnstore Index is important because unlike the original implementation of columnar data store used in SQL Server 2012, this one is directly updatable by applications. Before, customers had to create the columnar format from a base table, and if the information changed, they had to do it in that base table and then recreate the columnar format on which to run queries. This was obviously a pain in the neck, and with the Clustered Columnstore Index , you can create the columnar data store and have it as well as the base table both updated as information changes.

The second rev of the columnar store has improved data compression, ranging from a factor of 5X to 10X, depending on the data, and queries run against it run anywhere from 5X to 25X faster, on average.

One last thing on the analytics front. Microsoft does not currently have its own Hadoop distribution, but it does partner with Hortonworks to deliver its Windows Azure HDInsight Hadoop service out on its public cloud. HDInsight is integrated with the Power Pivot and Power View analytics tools that also hook into SQL Server to extract data. This service went commercial back in October 2013. In conjunction with the SQL Server 2014 launch today, Microsoft is supporting the Hadoop 2.2 stack underneath the HDInsight service, including support for the YARN scheduler and Stinger Phase 2, the latest interactive query engine from Hortonworks that gooses the performance of Apache Hive. The Phase 2 update to Stinger can boost the performance of queries by as much as 40X and can push data compression as high as 80 percent, says Microsoft. Finally, HDInsight is now available in all major Windows Azure geographies with the exception of Greater China.

Related Items:

Can Microsoft Become the McDonald’s of Hadoop?

Microsoft Pushes Hadoop up Windows Hill