Spectra Looks to Drive Tape Storage Into Hadoop

Spectra Logic gave organizations a way to store Hadoop data on tape today with the launch of its new BlackPearl line of storage appliances. The company also said that the new DS3 object storage interface that underlies BlackPearl could become a part of the open source Hadoop codebase by late 2014, bringing to Hadoop a simple REST-based interface to read and write into tape.

Deep Simple Storage Service (DS3) is a special version of the Simple Storage Service (S3) storage protocol that Amazon Web Services developed to enable users to store massive amounts of data on the Web. The S3 protocol is already REST-based, which has done wonders for Amazon by making it easy for application developers to hook into S3 and use it as a back-end data store.

Deep Simple Storage Service (DS3) is a special version of the Simple Storage Service (S3) storage protocol that Amazon Web Services developed to enable users to store massive amounts of data on the Web. The S3 protocol is already REST-based, which has done wonders for Amazon by making it easy for application developers to hook into S3 and use it as a back-end data store.

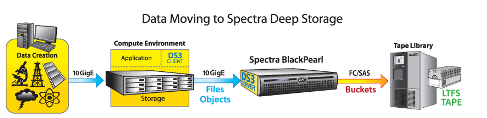

Now, Spectra Logic is looking to take that simplicity one step further by, in effect, enabling S3 to talk tape–specifically, to talk to Spectra’s new BlackPearl appliances, which serve as a front-end to Spectra’s massive T-Series, LTO-based tape libraries.

DS3 builds on the original S3 spec, which was designed to talk to memory or spinning disk, by adding the capability to move “buckets” of S3 objects onto tape. It also brings new Bulk PUT and Bulk GET commands that can replicate large numbers of objects.

As TPM reported today in Tabor Communication’s new Enterprise Tech publication, the initial targets for Spectra’s new storage gear will be the big data center customers in the life sciences, media and entertainment, and oil and gas industries. These types of customers want the cost advantages that tape can provide, but don’t want to deal with the slow and tedious process of finding and restoring specific pieces of data from tape. DS3 can help in this regard.

Spectra is also hoping to sell its DS3 and BlackPearl technology to organizations with hyperscale Web initiatives, including those based on Hadoop. Spectra executives shared some of their Hadoop plans with Enterprise Tech during the company’s Forever Data 2013 conference, which took place this week in Denver, Colorado.

For starters, the company announced that it has developed a DS3 client for Hadoop, giving customers the capability to move data from Hadoop onto Spectra’s tape libraries. While Hadoop is seen as a cheap way to store massive amounts of data, it still costs more than tape, which can cost just pennies per GB.

The executives also said that Spectra has reached out to at least two of the major Hadoop distributors with the idea of partnering them on making DS3 part of the Hadoop codebase. The relationships are being formed now, and it would probably take at least a year before the DS3 Hadoop client actually made it into Apache’s Hadoop project.

“What we’re really looking for is somebody who can sponsor us,” said David Trachy, Spectra Logic’s senior director of emerging storage technologies, in an interview with TPM and HPCwire editor Nicole Hemsoth at Forever Data 2013. “We’ve been talking with some companies in California who are Hadoop distributors. We really need to work through them because we have nobody in our company who’s been a Hadoop distributor.”

“What we’re really looking for is somebody who can sponsor us,” said David Trachy, Spectra Logic’s senior director of emerging storage technologies, in an interview with TPM and HPCwire editor Nicole Hemsoth at Forever Data 2013. “We’ve been talking with some companies in California who are Hadoop distributors. We really need to work through them because we have nobody in our company who’s been a Hadoop distributor.”

Hadoop customers are a small subset of the potential market for Spectra right now, but executives with the company see that number increasing as Hadoop implementations move out of the proof of concept (POC) stage into production.

“One of the things that we see with Hadoop, specifically, is it’s still very early in the adoption,” said Molly Rector, chief marketing officer and executive vice president of product management and marketing for the Boulder, Colorado company. “There are a couple of really big Hadoop environments, and then a whole bunch of POCs. So lots of companies are figuring out what are they going to do with Hadoop.”

Spectra’s position as a provider of tape-based storage for very high-end enterprises and research institution gives it a good view into the evolving Hadoop landscape, Rector said. “We can work with the big guys who actually have enough data that they need it,” she said. “Most of the Hadoop clusters don’t hold much data. People call it big data because they’re figuring out how to use it, how to get data in there. Once they get it into production, they’ll have a lot of data.”

Spectra has its eyes on today’s smaller Hadoop clusters, which may be tomorrow’s biggest big data clusters. “There’s just a lot of new clusters out there that people are expanding every year, and they’re expanding them just because they don’t have a place to put raw data,” Trachy said. “As you get into the 50-, 100-node [Hadoop] clusters–and there’s a lot of those–it becomes cost prohibitive to keep buying disk year after year.”

Yahoo, in particular, is one Hadoop shop (if it can be called that, since it helped create it) that is definitely interested in DS3. The company worked with Spectra on the DS3 Hadoop client because it needed a faster way of getting data that was archived on tape back into a production system. Yahoo, of course, has an exceptionally large Hadoop environment. But as the technology soars in adoption in the coming years, enterprise-level capabilities–the such as the storage virtualization layer that DS3 basically enables–will go far in helping to reduce some of the obstacles standing between organizations and Hadoop.

Related Items:

Yahoo, NASCAR Intrigued by Spectra DS3 Object Storage for Tape

The Big Data Market By the Numbers

Gartner: Internet of Things Plus Big Data Transforming the World