This Week’s Big Data Big Ten

Welcome to the Big Data Big Ten for the week ending Friday, April 27th.

It’s been a news-heavy week with a few key items about new funding for established startups, some expected players entering the Hadoop buzzword gauntlet, some sports analytics buffs reshaping team decisions, not to mention some select tidbits from the SAS Global Forum, which just wrapped up in Orlando.

Without further delay, let’s get started as we unwind the week in big data news.

Tackling Rugby Woes with Analytics

Sports is increasingly becoming a technical and scientific business. Like any commercial organization, Leicester Tigers, the nine-time champion of English rugby union’s Premiership and two-time European champion, faces challenges around growing and retaining talent, measuring performance, optimizing tactics and detecting risk.

The rugby team today announced that it is using IBM predictive analytics software to assess the likelihood of injury to players and then use this insight to deliver personalized training programs for players at risk.

Unlike spreadsheet-based statistical solutions, IBM predictive analytics is designed to enable Leicester Tigers to broaden and deepen the analysis of both objective and subjective raw data, such as fatigue and game intensity levels. Hence, Leicester Tigers can rapidly analyze such physical and biological information for all 45 rugby players in its squad in order to detect and predict patterns or anomalies.

Using IBM predictive analytics, Leicester Tigers aims to get more insight into which data is important to predict injuries on an individual basis and when an individual is likely to reach that threshold so appropriate action can be taken. For example, if a player has a statistically significant change in one or more of his fatigue parameters and the current intensity of training is likely to be high, the analytics software may show that this player is likely to become injured in the near future. Thus, Leicester Tigers would implement strategies to reduce fatigue or alter his training accordingly.
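The rule described above (a significant shift in a fatigue parameter combined with high upcoming training intensity) can be sketched in a few lines of Python. This is only an illustration and not IBM's software or the Tigers' actual model: the function names, the z-score test against a player's own baseline, and both thresholds are assumptions made for the example.

```python
from statistics import mean, stdev


def fatigue_z_score(baseline, latest):
    """Distance of the latest reading from the player's own baseline,
    measured in baseline standard deviations (hypothetical measure)."""
    center = mean(baseline)
    spread = stdev(baseline)  # needs at least two baseline readings
    if spread == 0:
        # A perfectly flat baseline: any deviation counts as extreme.
        return 0.0 if latest == center else float("inf")
    return (latest - center) / spread


def at_risk(baseline, latest, planned_intensity,
            z_threshold=2.0, intensity_threshold=0.8):
    """Flag a player when a fatigue reading shifts sharply AND the
    planned training intensity (0.0 to 1.0) is high.
    Both thresholds are illustrative assumptions, not published values."""
    significant_change = abs(fatigue_z_score(baseline, latest)) >= z_threshold
    high_intensity = planned_intensity >= intensity_threshold
    return significant_change and high_intensity


# Example: five prior fatigue scores, then a sharp jump before a hard session.
history = [3.0, 3.2, 2.9, 3.1, 3.0]
print(at_risk(history, latest=4.5, planned_intensity=0.9))
```

A flagged player would then be a candidate for the reduced-fatigue or altered training plan the team describes. A production model would presumably weigh many parameters at once rather than a single score.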

Predictive analytics has become an integral part of the sports world. The project between the Leicester Tigers and IBM is part of a growing trend among all types of organizations to uncover hidden patterns in data in order to predict or prevent outcomes for competitive advantage.

Investors Take a Shine to Lustre

Terascala, Inc., which focuses on accelerating big data applications through storage I/O optimization, today announced that it has closed a $14 million Series B funding round.

Terascala’s approach to high throughput, high capacity storage combines the Lustre file system, analysis and optimization tools, as well as integration and services into one package that they claim accelerates big data applications running on large interconnected server installations while optimizing data access.

“Storage I/O optimization is rapidly becoming a core requirement for big data applications,” said Terri McClure, senior analyst at Enterprise Strategy Group. “Terascala is a leader in the development of solutions that address this requirement, and the fact that its product runs on an open source file system and on industry-standard platforms makes for a very compelling value proposition in a price sensitive market.”

The Terascala Integrated Storage Information System (TISIS) provides appliance management and workload-driven I/O technology for open source Lustre. TISIS runs on industry-standard storage appliances in a wide range of market segments, including life sciences, financial services, energy, academic research, computer-aided engineering (CAE), and government/defense.

Steve Butler, CEO of Terascala, said, “Our pioneering work in developing a software stack that delivers optimized, scalable I/O on industry-standard storage appliances enables organizations to accelerate big data applications, and clearly, industry leaders are taking notice.”

Terascala will use the funding to significantly expand research and development, marketing and customer support, to develop strategic alliances, and to fuel international expansion.

Sears Gets into the Big Data, Hadoop Game

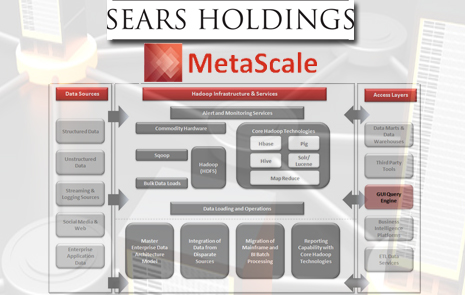

This week Sears Holdings announced the addition of a wholly owned subsidiary that it says will reshape the big data landscape as businesses try to manage, store, process and move their mission-critical information.

The new subsidiary, MetaScale, is essentially a provider of technology managed services and data solutions. MetaScale specializes in solutions for traditional brick-and-mortar enterprises across all industry verticals looking to efficiently establish and grow their big data expertise, experience immediate tactical success and begin to build out their fundamental big data capability in an organized and precise fashion.

MetaScale provides managed services related to the widely adopted Apache Hadoop data management framework, enabling enterprises to drive new business value from their data with end-to-end solutions through a secure, private cloud environment.

MetaScale’s services include building and configuring the Hadoop cluster; operating, managing and monitoring the cluster; integrating with other data management technologies; running data-cleansing and other data management projects; writing and developing MapReduce applications; and custom solution design and system integration.

“MetaScale is uniquely positioned to serve the needs of large-scale enterprise projects,” said Keith Sherwell, CIO for Sears Holdings. “From a competitive standpoint, MetaScale has identified a gap in the market to provide a superior managed-services model to other large-scale traditional enterprises that haven’t taken advantage of or investigated the potential of Apache Hadoop.”

With an emphasis on data management infrastructure and associated services, MetaScale was developed over the past two years and expects to leverage its Hadoop expertise to partner with analytics tools and service providers, as well as system integrators that lack Hadoop-based infrastructure capabilities.

Teradata Gets Sassy

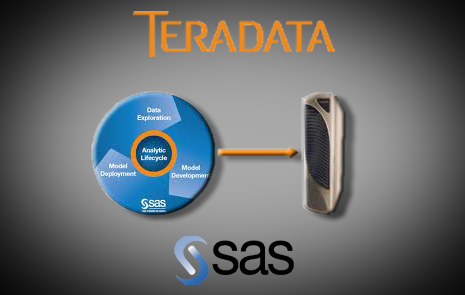

This week at the SAS Global Forum in Orlando, Teradata announced a new appliance for those running SAS High-Performance Analytics.

The Teradata 700 appliance is part of an integrated offering, called “SAS High-Performance Analytics for Teradata.” The new offering leverages in-memory analytics, which distributes complex analytics in parallel across a vast pool of memory, giving organizations high-speed tools to tap into patterns and insights hidden in large volumes of data.

Teradata says that customers in finance with dynamic pricing and risk modeling needs, those in telco with customer segmentation, churn and acquisition requirements, and others in retail, for example, can see improvement by leveraging an in-memory platform.

The Teradata 700 appliance, an expansion of the database platform family, is pre-loaded with SAS High-Performance Analytics software – a single-purpose system designed exclusively to leverage SAS advanced analytics.

“SAS High-Performance Analytics for Teradata leverages a purpose-built, dedicated, and scalable Teradata 700 appliance to accelerate the model development cycle. It’s designed for organizations that require a finer set of answers for immediate action and quick business value,” said Scott Gnau, president of Teradata Labs.

Teradata says that in a recent engagement with an existing customer, in-memory analytics on Teradata improved modeling and complex query performance from a week down to less than 2 minutes. New customers can expect similar results.

Supporting Data-Intensive Science

The U.S. Department of Energy’s Energy Sciences Network, or ESnet, provides reliable high-bandwidth network services to thousands of researchers tackling some of the most pressing scientific and engineering problems, such as finding new sources of clean energy, increasing energy efficiency, understanding climate change, developing new materials for industry and discovering the nature of our universe.

To support these research endeavors, ESnet connects scientists at more than 40 DOE sites with experimental and computing facilities in the U.S. and abroad, as well as with their collaborators around the world. ESnet is managed for DOE’s Office of Science by Lawrence Berkeley National Laboratory.

As science becomes increasingly data-intensive, the ESnet staff regularly meets with scientists to better understand their future networking needs, then develops and deploys the infrastructure and services to address those requirements before they become a reality.

One example of this is the Advanced Networking Initiative, a prototype 100 gigabits-per-second network connecting the DOE Office of Science’s top supercomputing centers in California, Illinois and Tennessee, and an international peering point in New York. This 100 Gbps prototype is now being transitioned to production and will be rolled out to all other connected DOE sites in the coming year.

In order to help these research institutions fully capitalize on this growing availability of bandwidth to manage their growing data sets, ESnet is now working with the scientific community to encourage the use of a network design model called the “Science DMZ.” The Science DMZ is a specially designed local networking infrastructure aimed at speeding the delivery of scientific data. In March 2012, the National Science Foundation supported the concept by issuing a solicitation for proposals from universities to develop Science DMZs as they upgrade their local network infrastructures.

Leading the development of the Science DMZ effort at ESnet is Eli Dart, a network engineer with previous experience at Sandia National Laboratories and the National Energy Research Scientific Computing Center. An in-depth interview with Eli about the project is available at HPCwire this week.

IBM Adds Notch to Acquisition Belt

IBM is set to make another investment in the future of its big data strategy with the announcement this week of a definitive agreement to acquire Vivisimo for an undisclosed sum.

The privately held, Pittsburgh-based company is one of several options IBM could have tapped for federated discovery and navigation software to help access and analyze big data across the enterprise.

IBM says Vivisimo’s software excels in capturing and delivering quality information across the broadest range of data sources, no matter what format the data is in or where it resides. The software automates the discovery of data and helps employees navigate it with a single view across the enterprise.

IBM also says that the combination of software offerings will further its efforts to automate the flow of data into business analytics applications, helping clients better understand consumer behavior, manage customer churn and network performance, detect fraud in real time, and perform data-intensive marketing campaigns.

“Navigating big data to uncover the right information is a key challenge for all industries,” said Arvind Krishna, general manager, Information Management, IBM Software Group. “The winners in the era of big data will be those who unlock their information assets to drive innovation, make real-time decisions, and gain actionable insights to be more competitive.”

Vivisimo has more than 140 customers in industries such as government, life sciences, manufacturing, electronics, consumer goods and financial services. Clients include Airbus, U.S. Air Force, Social Security Administration, Defense Intelligence Agency, U.S. Navy, Procter & Gamble, Bupa, and LexisNexis among others. Upon the closing of the acquisition, approximately 120 Vivisimo employees will join IBM’s Software Group. IBM will incorporate Vivisimo technology into its big data platform.

A Flash Forward for Gridiron

Sunnyvale-based GridIron Systems calls itself a big data acceleration company due to its focus on SSDs to improve data center performance.

This week the company announced OneAppliance, an all-flash appliance line that it claims tackles big data efficiently, delivering over 4 million IOPS and 40GB/s of throughput for structured databases like Oracle and SQL Server as well as unstructured, NoSQL frameworks like Hadoop.

The company says this is a big data appliance out of the package. It is based on engineered systems that match the unique workload characteristics of Big Data to Multi Level Cell (MLC) Flash for optimal performance. They also say that for the first time users can tap the same infrastructure to seamlessly combine structured, unstructured or mixed big data processing.

The OneAppliance suite is targeted at users running clustered applications that often struggle to deliver performance under concurrent workloads and fail to utilize the full capabilities of hundreds, or even thousands, of physical servers and storage devices. They claim that in contrast, GridIron’s OneAppliance provides up to twenty times the bandwidth “of even the fastest flash solutions available today and can reduce space and power consumption by up to 60% while reducing the total cost of ownership for big data infrastructure by 75%.”

More about GridIron and the OneAppliance suite can be found at their site. See how many times you can count the words “big data” across their site. Whew.

SAS Extends In-Memory Analytics

It’s been a week laden with news about SAS, following their global forum this past week in Orlando. Among the most broadly important announcements this week was their response to customer demand to extend the new SAS LASR Analytic Server across its portfolio.

SAS LASR Analytic Server, part of the new SAS Visual Analytics, will be integrated with forthcoming versions of many SAS horizontal and industry-specific offerings. The company says that providing in-memory versions addresses increasing needs to derive insights from masses of data and tackle complex questions in seconds instead of minutes or minutes instead of hours.

“The foundation of SAS software is market-leading analytics and a broad collection of algorithms developed over 36 years,” said SAS CEO Jim Goodnight. “We’re moving all SAS algorithms to our in-memory server architecture so customers can benefit from its blazing speed and game-changing results.”

There are already a select number of analytics products available with in-memory versions, including those for risk management, retail planning and retail markdown optimization. In-memory applications to come are regular price optimization for retail, retail forecasting, promotional price optimization for retail, marketing optimization and others.

Running on industry-standard blade servers, SAS LASR Analytic Server quickly reads data into memory for fast processing where the data becomes available for visualization. SAS LASR is free of the limitations of most relational database systems. As with all SAS technology, it works with all commonly used data structures.

Users can explore all data, execute analytic correlations on billions of rows of data in just minutes or seconds, and visually present results. This helps them identify patterns, trends and relationships in data that were not evident before.

Analytic Superhero Unmasked

Last week we discussed how SAS is recognizing data science leadership through its superheroes approach. This week the company announced the first inductee to the League of Analytic Superheroes.

The first League of Analytic Superheroes member, “Illumino” (aka Rick Andrews), was revealed Monday at SAS Global Forum. He is one of many to be recognized for powerful analytical skills and accomplishments.

Rick Andrews’ evolution to analytic hero Illumino was depicted during SAS Global Forum in a stylized tale of his exploits. He will receive his own superhero action figure based on an illustration created in his likeness by a professional graphic novel artist.

Andrews, who works in the Office of the Actuary for the Centers for Medicare and Medicaid Services (CMS), has been a programmer-analyst for nearly 20 years in roles including senior data analyst, engineering analyst, systems designer, team leader and mentor. He is experienced in mainframe, UNIX and Windows environments, and his strongest technical skills are SAS programming and SQL. Andrews has trained hundreds of users and meets monthly to keep CMS economists, statisticians, actuaries and health specialists at the top of their fields.

Andrews’ predictive insights quickly deliver useful information, producing superhero-sized results.

Analytic professionals and data scientists using SAS and Teradata can apply for superhero status on the Analytic Superheroes website by quantitatively reporting efforts to advance real-world business objectives — and thus defeat “the forces of chaos.” SAS and Teradata associates may nominate analysts. Each nomination is reviewed by an expert panel. Superheroes are immortalized in various ways, and may apply to join the International Institute for Analytics.